Writing a simple Compiler on my own - Abstract Syntax Tree Principle [C][Flex][Bison]

All the Code of the series can be found at the Github repository: https://github.com/drifter1/compiler

Introduction

Hello, it's a me again, @drifter1! Today we continue with my Compiler Series, a series where we implement a complete compiler for a simple C-like language using the C tools Flex and Bison. In this article I will give a brief introduction to Abstract Syntax Trees and explain why we use them in Compiler Design. This article is completely theoretical; the actual implementation will come in later articles.

The topics that we will cover today are:

- Intermediate Code Generation

- IC Representations

- How to design an AST

Requirements:

You need to have read and understood all the topics that I covered in the series so far, as those articles give you knowledge about:

- What Compiler Design is (mainly the steps)

- For which exact Language the Compiler is built (Tokens and Grammar)

- How to use Flex and Bison

- How to implement a lexer and parser for the language using those tools

- What the Symbol Table is and how we implement it

- How we combine Flex and Bison together

- How we can pass information from the Lexer to the Parser

- How we define operator priorities, precedence and associativity

- What Semantic Analysis is (Attributes, SDT etc.)

- How we do the so called "Scope Resolution"

- How we declare types and check the type inter-compatibility for different cases, including function parameters

- How we check function calls later on using a "Revisit queue", as function declarations mostly happen after functions get used (in our Language)

Difficulty:

Talking about the series in general, it can be rated:

- Intermediate to Advanced

- Medium

Actual Tutorial Content

Intermediate Code Generation

After checking that a program is lexically, syntactically and semantically correct, we can generate our target code, which is usually machine code. But we usually don't translate the source code directly into target code; instead we first turn it into a so-called intermediate code form.

There are some very important reasons for doing this. The most important might be that we don't have to write a completely new compiler for each new target. Intermediate code can also be improved and optimized much more easily. So, by creating intermediate code we separate the program analysis from the code synthesis, which makes it much easier to set up different code synthesis for different machines.

Hmm, so yes, we create intermediate code and all that, but what exactly does such code look like? Well, it's not actual code, and there are many ways of representing it, each with its own benefits and drawbacks. We can write:

- High Level IR, which is close to the source language, meaning that source code modifications are easier, but target code optimizations are not.

- Low Level IR, which is close to the target language and so good for machine-dependent optimizations.

- Language dependent and Language independent, which are self-explanatory.

As you might have already guessed, we try to create machine-independent intermediate code, so that the only machine-dependent part of a compiler is the target code generation. Doing that we of course achieve portability! Source code optimizations can be done directly on the intermediate code, while target code generation and its optimizations stay completely independent of them.

Intermediate Code Representations

Let's now get into commonly used intermediate code representations.

Three-Address Code

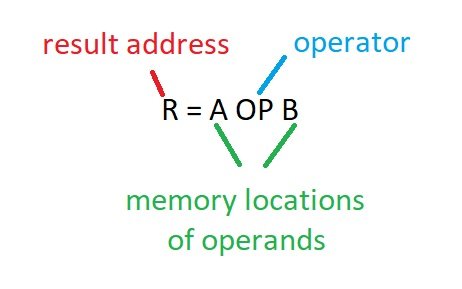

Statements mostly involve three references: two operands and one result. Such a statement is known as a three-address statement, and a sequence of them is called three-address code. Many assembly languages (MIPS, for example) work much like that, having registers or memory addresses/locations in place of the operands and result.

The general form of such a statement is:

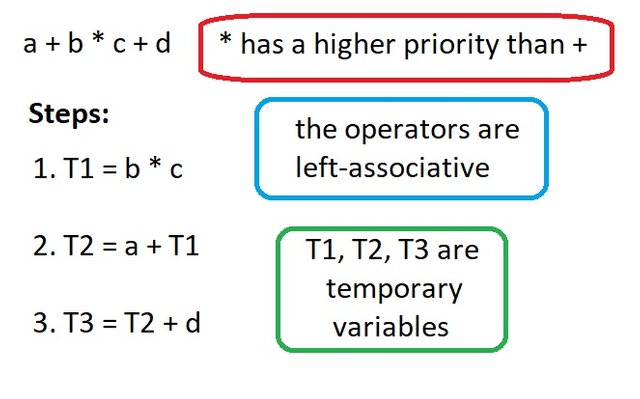

For more complex expressions we of course have to introduce temporary variables for the intermediate results. Let's look at the example of Reference 2 to understand this better:

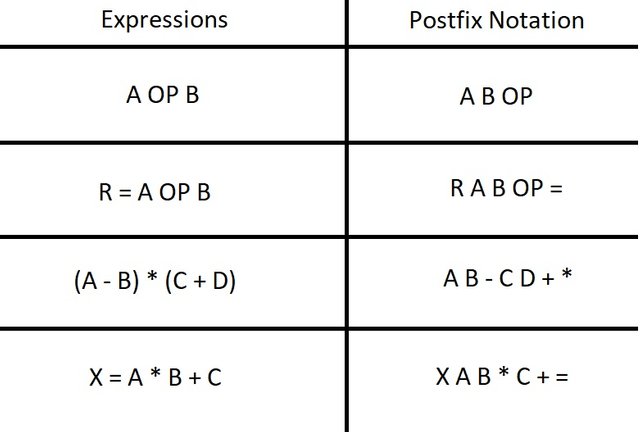
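To make the role of the temporaries more concrete, here is a minimal sketch in C of how three-address statements could be produced from an expression tree. The `Expr` struct, the `gen_tac` function and the `t1`, `t2`, ... naming scheme are assumptions made for this illustration only; they are not the code of the series.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Hypothetical expression node: a leaf holds a name, an inner node an operator. */
typedef struct Expr {
    char op;                    /* 0 for a leaf, otherwise '+', '-', '*' or '/' */
    const char *name;           /* operand name, only used by leaves */
    const struct Expr *left;
    const struct Expr *right;
} Expr;

/* Running counter used to name the temporaries t1, t2, ... */
static int temp_count = 0;

/*
 * Appends three-address statements for the tree rooted at e to out
 * (a NUL-terminated buffer of out_size bytes) and stores the name that
 * holds the value of e in result.
 */
static void gen_tac(const Expr *e, char *out, size_t out_size,
                    char *result, size_t result_size)
{
    char l[16], r[16];
    size_t used;

    assert(e != NULL);
    if (e->op == 0) {
        /* A leaf needs no statement, its own name is the result. */
        snprintf(result, result_size, "%s", e->name);
        return;
    }

    /* Generate code for both operands first, then combine them. */
    gen_tac(e->left, out, out_size, l, sizeof l);
    gen_tac(e->right, out, out_size, r, sizeof r);

    /* Every operator gets a fresh temporary for its result. */
    snprintf(result, result_size, "t%d", ++temp_count);
    used = strlen(out);
    assert(used < out_size);
    snprintf(out + used, out_size - used, "%s = %s %c %s\n",
             result, l, e->op, r);
}
```

For a tree that represents `a + b * c`, this sketch would first emit a statement for the multiplication into `t1` and then one for the addition into `t2`, so each statement has at most two operands and one result.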

Postfix Notation

Another very interesting way of representing IC is postfix notation. Instead of writing the operator "infix", that is in between the operands, you put it right after the operands (postfix). Here are some examples of expressions and their corresponding postfix notation:

Let's analyze these cases a little bit more, so that you understand the concept even better:

- The first case is very simple, you can see that we just put the operator after the two operands.

- In the second case something similar happens. Forget about the two operands that follow each other. Applying the "OP" operator we will get some result and let's call it T. So, in the end we have "R T =", which is of course exactly what we expected to see!

- In the same way the third case has 3 operators. "AB-" and "CD+" will give some temporary result. Let's call these results S and T. So, in the end we only have "S T *".

- The last case is similar. "AB *" will give some temporary result T. So, then we have "X T C + =". Applying the operator +, we will get some other result, let's call it S. This shows us that in the end what remains is simply: "X S =".
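The conversion discussed in the cases above can also be sketched in code. The following is a minimal illustration in C, assuming single-letter operands, the operators `+ - * /` and `=`, and parentheses. It uses the well-known shunting-yard technique with an operator stack; the function names `precedence` and `to_postfix` are made up for this example.

```c
#include <assert.h>
#include <ctype.h>
#include <stddef.h>
#include <string.h>

/* Binding strength of an operator; larger binds tighter, 0 means "not an operator". */
static int precedence(char op)
{
    switch (op) {
    case '=': return 1;
    case '+': case '-': return 2;
    case '*': case '/': return 3;
    default:  return 0;
    }
}

/*
 * Converts an infix expression with single-letter operands into postfix
 * notation. out must have room for strlen(infix) + 1 bytes.
 */
static void to_postfix(const char *infix, char *out)
{
    char stack[64];              /* pending operators and '(' */
    int top = 0;
    size_t n = 0;
    size_t len = strlen(infix);

    for (size_t i = 0; i < len; i++) {
        char c = infix[i];
        if (isalnum((unsigned char)c)) {
            out[n++] = c;        /* operands go straight to the output */
        } else if (c == '(') {
            assert(top < 64);
            stack[top++] = c;
        } else if (c == ')') {
            while (top > 0 && stack[top - 1] != '(')
                out[n++] = stack[--top];
            assert(top > 0);     /* a matching '(' must exist */
            top--;               /* discard the '(' */
        } else if (precedence(c) > 0) {
            /* Pop operators that bind at least as tightly; '=' is
               right-associative, so an equal '=' stays on the stack. */
            while (top > 0 && stack[top - 1] != '(' &&
                   (precedence(stack[top - 1]) > precedence(c) ||
                    (precedence(stack[top - 1]) == precedence(c) && c != '=')))
                out[n++] = stack[--top];
            assert(top < 64);
            stack[top++] = c;
        }
        /* anything else (such as spaces) is skipped */
    }
    while (top > 0)
        out[n++] = stack[--top];
    out[n] = '\0';
}
```

With this sketch, `to_postfix("(A-B)*(C+D)", buf)` leaves `AB-CD+*` in `buf`, and `to_postfix("X=A*B+C", buf)` leaves `XAB*C+=`, which correspond to the third and last case above.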

Abstract Syntax Tree

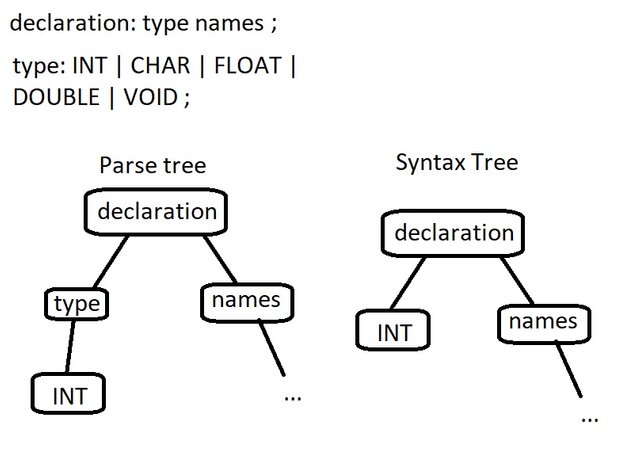

When parsing using Bison, the applied grammar rules implicitly form a Parse Tree. This Parse Tree is complex and contains child nodes that are useless, considering that we can move their information to a parent node with ease. So, what do we do? Well, we simply create a condensed and abstract form of the parse tree, which is usually called a Syntax Tree (or Abstract Syntax Tree). Operator and keyword nodes are moved up into their parents, and chains of single productions are removed completely.

For example, let's think about declarations, so that we get a little bit closer to our language. A declaration consists of a type and the names that follow it. Having multiple types, what comes out of the type-rule is a node "type" with a child that gives the actual type information, being INT, CHAR etc. So, the "type"-part of a parse and syntax tree for an integer declaration could be:

I guess it is even clearer for mathematical expressions, where each internal node is an operator, whilst the child nodes are operands. Great visual examples of that can be found in references 2 and 4.

So, an abstract syntax tree is a way of representing the syntax of a program as a tree-like structure, which is similar to the parse tree, but a whole lot smaller. By tree-like I refer to the optimizations that might turn it into a DAG (directed acyclic graph) later on, merging duplicate nodes and node groups. As it focuses only on the important syntactical elements of a language, this form of intermediate code is much easier to work with. Of course being tree-like means that we can traverse this tree using pre-order traversal, which gives us a prefix notation of the whole program (a post-order traversal gives the postfix notation that we saw earlier). This notation can then be easily converted to three-address code, which resembles many assembly languages. I guess you can see that we will use all three types of IC, but only the AST is of great importance for now, as it is what we will be implementing first!
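As a small illustration of the traversal idea, here is a sketch in C of a binary expression tree together with a pre-order and a post-order traversal. The `Node` struct and the function names are hypothetical and only meant to show the principle; each symbol is a single character to keep the example short.

```c
#include <assert.h>
#include <stddef.h>
#include <string.h>

/* Hypothetical binary AST node: leaves are operands, inner nodes are operators. */
typedef struct Node {
    char symbol;                 /* operand letter or operator character */
    const struct Node *left;     /* NULL for leaves */
    const struct Node *right;    /* NULL for leaves */
} Node;

/* Appends one character to the NUL-terminated buffer out of capacity cap. */
static void append(char *out, size_t cap, char c)
{
    size_t n = strlen(out);
    assert(n + 1 < cap);
    out[n] = c;
    out[n + 1] = '\0';
}

/* Pre-order: visit the node first, then its children (prefix notation). */
static void preorder(const Node *n, char *out, size_t cap)
{
    if (n == NULL)
        return;
    append(out, cap, n->symbol);
    preorder(n->left, out, cap);
    preorder(n->right, out, cap);
}

/* Post-order: visit the children first, then the node (postfix notation). */
static void postorder(const Node *n, char *out, size_t cap)
{
    if (n == NULL)
        return;
    postorder(n->left, out, cap);
    postorder(n->right, out, cap);
    append(out, cap, n->symbol);
}
```

For the tree of `(A - B) * (C + D)`, with `*` at the root, the pre-order traversal yields `*-AB+CD` and the post-order traversal yields `AB-CD+*`. Note that the parentheses are gone in both forms: the structure of the tree already encodes the evaluation order.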

How to design an AST

Based on the reference articles, we can say that the AST design is closely linked to the design of the compiler itself and to the exact features that we want and expect it to have. So, an AST has to do the following:

- Preserve variable types and their location in source code (something that we already do using the Symbol Table)

- Keep the correct execution order of statements, having it explicitly represented and well defined

- Left and right operands of operators (in expressions) have to be stored and identified correctly

- The assigned values of identifiers must be stored for assignment statements

- Of course not all operations require two elements and so we will have to allow an arbitrarily large number of children. Something like that happens in the "names"-rule of our language, inside of declarations for example. We can have any number of names of a specific type!

- Different operations don't necessarily have to be stored in different types of nodes. We have to keep some node hierarchy

- Traversing the AST should be a simple process, so that the intermediate code in the form of prefix notation can be obtained quickly

- We should be able to get back to source code form by un-parsing the AST
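To give a rough idea of how some of these requirements could translate into a data structure, here is a minimal sketch in C. It is not the implementation of this series (that comes in the next articles); the `AstNode` struct, the `NodeKind` values and the `ast_*` functions are hypothetical names chosen for the illustration. A kind tag distinguishes the node types, the source line is kept in each node, and the child array grows on demand so that a node can have any number of children in a well-defined order.

```c
#include <assert.h>
#include <stdlib.h>
#include <string.h>

/* Hypothetical node kinds; a real compiler would have many more. */
typedef enum {
    NODE_DECL, NODE_TYPE, NODE_NAME, NODE_ASSIGN, NODE_BINOP, NODE_CONST
} NodeKind;

typedef struct AstNode {
    NodeKind kind;
    char *text;                  /* identifier, operator or literal text; may be NULL */
    int lineno;                  /* location in the source code */
    struct AstNode **children;   /* ordered, so left/right operands stay identifiable */
    size_t child_count;
    size_t child_cap;
} AstNode;

/* Allocates a node with no children; returns NULL if allocation fails. */
AstNode *ast_new(NodeKind kind, const char *text, int lineno)
{
    AstNode *n = calloc(1, sizeof *n);
    if (n == NULL)
        return NULL;
    n->kind = kind;
    n->lineno = lineno;
    if (text != NULL) {
        n->text = malloc(strlen(text) + 1);
        if (n->text == NULL) {
            free(n);
            return NULL;
        }
        strcpy(n->text, text);
    }
    return n;
}

/* Appends child to parent; returns 0 on success, -1 if allocation fails. */
int ast_add_child(AstNode *parent, AstNode *child)
{
    assert(parent != NULL && child != NULL);
    if (parent->child_count == parent->child_cap) {
        size_t cap = parent->child_cap ? parent->child_cap * 2 : 4;
        AstNode **grown = realloc(parent->children, cap * sizeof *grown);
        if (grown == NULL)
            return -1;
        parent->children = grown;
        parent->child_cap = cap;
    }
    parent->children[parent->child_count++] = child;
    return 0;
}

/* Frees a node together with all of its descendants. */
void ast_free(AstNode *n)
{
    if (n == NULL)
        return;
    for (size_t i = 0; i < n->child_count; i++)
        ast_free(n->children[i]);
    free(n->children);
    free(n->text);
    free(n);
}
```

A declaration such as `int a, b, c;` could then be one `NODE_DECL` node with a `NODE_TYPE` child followed by three `NODE_NAME` children. A single generic node type like this is only one possible design; separate structs per node kind are just as valid a choice.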

The AST will be used in Semantic Analysis, as semantics can be passed and checked much more easily using such a structure than when having to deal with the Parse Tree that comes out of Bison. So, expect me to get into an implementation from the next article on, getting the compiler to a much better state than it is now :)

RESOURCES

References:

The following helped me write today's article:

- https://www.tutorialspoint.com/compiler_design/compiler_design_intermediate_code_generations.htm

- https://www.geeksforgeeks.org/intermediate-code-generation-in-compiler-design/

- https://www.javatpoint.com/intermediate-code

- https://www.javatpoint.com/parse-tree-and-syntax-tree

- https://www.techopedia.com/definition/22431/abstract-syntax-tree-ast

- https://en.wikipedia.org/wiki/Abstract_syntax_tree

Images:

All of the images are custom-made!

Previous parts of the series

- Introduction -> What is a compiler, what you have to know and what you will learn

- A simple C Language -> Simplified C, comparison with C, tokens, basic structure

- Lexical Analysis using Flex -> Theory, Regular Expressions, Flex, Lexer

- Symbol Table (basic structure) -> Why Symbol Tables, Basic Implementation

- Using Symbol Table in the Lexer -> Flex and Symbol Table combination

- Syntax Analysis Theory -> Syntax Analysis, grammar types and parsing

- Bison basics -> Bison tutorial actually

- Creating a grammar for our Language -> Grammar and first Parser

- Combine Flex and Bison -> lexer and parser combined

- Passing information from Lexer to Parser -> Bug Fix, yylval variable, YYSTYPE union, Setting up the Parser, Passing information "directly" and through the symbol table (special case) with examples.

- Finishing Off The Grammar/Parser (part 1) -> Adding the missing grammar rules, small fixes

- Finishing Off The Grammar/Parser (part 2) -> Operator priorities, precedence and associativity, complete example file (for testing), grammar rule visualization, finish off grammar/parser

- Semantic Analysis Theory -> What is Semantic Analysis about, CSG and CSL, Attribute Grammars, Syntax Directed Translations (SDT)

- Semantics Examples -> Visualization of Semantics for different rules/cases, needed attributes, what we have to implement from now on

- Scope Resolution using the Symbol Table -> What we have now, scope resolution, integration, compiler output

- Type Declaration and Checking -> Type declaration and type checking code, "simple" code integration

- Function Semantics (part 1) -> Code for parameter definitions, function declarations and parameter compatibility checking

- Function Semantics (part 2) -> Revisit queue concept, basic implementation, inserting undeclared identifiers

Final words | Next up on the project

And this is actually it for today's post! I hope that I explained everything well enough and that you learned something from it.

Next up on this series are:

- Semantic analysis (using semantic rules in Bison)

- Intermediate Code generation (Abstract Syntax Tree Implementation)

- Machine Code generation (MIPS Assembly)

So, see ya next time!

GitHub Account:

https://github.com/drifter1

Keep on drifting! ;)
