A guide to project selection and earnings estimation for Gridcoin

Link to corresponding reddit thread here.

So over the past few months, we've definitely seen a measurable increase in people signing up to the Gridcoin network, and r/gridcoin recently hit 4000 subscribers, so I thought I'd do a write-up on how I go about choosing projects for my hardware and how to estimate earnings for particular hardware. I'll also add the disclaimer that this may not be the best way to do these things; it's just how I do it, and it seems fairly effective in my own experience. If anyone has any suggestions or improvements, feel free to leave a comment.

Introduction

Firstly, to get a solid overview, I'll point to Dutch's "Hardware and Project Selection" three-part miniseries. Part one gives a comparison between GPUs and CPUs so you can get a better idea of how they work. Part two goes into depth with GPUs, and part three explores CPUs.

So now that we’re past that, let’s get to it. When choosing a project, you’ll initially want to keep two main things in mind. These are:

- How scientifically relevant do I want my contributions to be?

- How much of a reward (in terms of GRC) am I looking for?

This is because, generally, the more scientifically relevant a project’s work is, the more popular it is, and the more popular it is, the less GRC you will earn for your computational contribution. For example, a graphics card like the NVIDIA GTX 1060 running SETI@home will earn significantly less GRC than a GTX 1060 running Collatz Conjecture. This can be partially attributed to the fact that SETI has four times as many Gridcoin members as Collatz Conjecture, so the magnitude allocated to SETI has to be split across significantly more people. Thus we can see that there is a negative correlation between “scientific usefulness” and earnings potential. What I’m going to do next is order all the projects from fewest users to most users and assign each project a broad category, starting with the projects that only have CPU work units.

- ODLK1 (aka Latin Squares) – Mathematics

- Sourcefinder (has been out of work units for some time) – Astronomy

- SRBase – Mathematics

- YAFU - Mathematics

- TN-Grid - Biology

- VGTU Project – Civil Engineering

- DrugDiscovery@home - Biology

- Numberfields@home - Mathematics

- NFS@home - Mathematics

- Yoyo@home - Multiple applications (mainly mathematics)

- theSkyNet POGS - Astronomy

- Universe@home - Astronomy

- Citizen Science Grid - Multiple applications

- Cosmology@home - Astronomy

- World Community Grid - Multiple applications (lots of real world applications)

- LHC@home – Physics

- Asteroids@home - Astrophysics

- Rosetta@home - Biology

Now, the reason I’ve separated the CPU-only projects from the GPU projects is that, if you care the slightest bit about earnings, you’ll want to put your CPU on CPU-only projects. GPUs have significantly more brute horsepower than CPUs, which means one GPU could output the equivalent RAC of 100 CPUs. What this means for you is that if you run your CPU on a GPU project, you might get as little as one-tenth as much GRC (or even less) as you would on a CPU-only project.

Sidenote: If you're crunching with an ARM device, such as an Android device or Raspberry Pi, you'll want to be careful about which CPU project you crunch. Not all CPU projects support ARM processors. A good way to find out if your ARM device is supported is to check grcpool's website here and use their list to check compatibility with your device. You can also cross reference this with the BOINC website's project list as well, found here.

Now here are the projects with GPU work units, ordered from fewest users to most users.

- Amicable Numbers – Mathematics

- Collatz Conjecture - Mathematics

- Moo Wrapper - Cryptography

- Enigma@home - Cryptography

- PrimeGrid - Mathematics

- GPUGrid (NVIDIA GPUs only) – Biology

- Einstein@home - Astrophysics

- Milkyway@home (1/8th ratio FP64 or better GPU strongly recommended) - Astronomy

- SETI@home - Astrophysics

So, there’s obviously a reason why I’ve ordered them from fewest users to most users. As I mentioned previously, generally, the fewer users a project has, the more earnings it will produce. Now you’ll notice that it’s mostly mathematics in the upper half of both lists, and (subjectively) more useful stuff in the bottom half, like astronomy and physics. The mathematical projects tend to have fewer real world benefits than something like Rosetta or Einstein, but they still have some value, and because they attract fewer users, they tend to give the best earnings. So that’s all well and good, but how can you really tell which project is better than another? Well, we gotta do some maths first.
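To see why fewer users tends to mean more GRC, here is a minimal Python sketch. It uses a simplified model that is my assumption for illustration: each project has a fixed pool of magnitude, split among that project's Gridcoin crunchers in proportion to their RAC. The function name, the pool size and the RAC figures are all hypothetical.

```python
def user_magnitude(user_rac: float, project_total_rac: float, project_mag_pool: float) -> float:
    """One cruncher's share of a project's (assumed fixed) magnitude pool.

    Assumes the pool is split among all Gridcoin crunchers on the project in
    proportion to their RAC; project_total_rac includes user_rac.
    """
    if project_total_rac <= 0:
        raise ValueError("project_total_rac must be positive")
    return project_mag_pool * user_rac / project_total_rac


# Hypothetical numbers: one cruncher holding 100,000 RAC, before and after
# the project's total Gridcoin RAC grows tenfold as more users join.
POOL = 5_000.0
before = user_magnitude(100_000, 1_000_000, POOL)
after = user_magnitude(100_000, 10_000_000, POOL)

print(f"before: {before:.1f} mag, after: {after:.1f} mag")
```

The cruncher's own output hasn't changed, but under this model their magnitude drops from 500 to 50 because the same pool is shared by ten times as much RAC.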

Earnings estimation

So I’ll go through the process I use to get a rough idea of how much recent average credit (henceforth “RAC”) a particular setup is going to get me, and hence how much GRC it will earn. It’s not very difficult, just tedious, with lots of flicking between tabs. You start off with the hardware you want to compare earnings across projects for. The example I’m going to use is a GTX 1080. You can substitute any GPU or CPU for this example, but generally, more recent and popular GPUs/CPUs are going to be easier to work with, since more hosts on the stats pages will be running them.

First I just wanna mention that this is all very rough and isn’t going to provide you with a pinpoint accurate measure of your max RAC, merely an approximation. Now with that out of the way, we start by looking at how much RAC corresponds to one unit of magnitude for a given project. The three projects I’m going to compare are Collatz Conjecture, GPUGrid and SETI. Pretty much you just pick a random user’s RAC and divide by their magnitude to get RAC/Mag, so I’ll put this into a table below:

| Project | Random User's RAC | Random User's Mag | RAC/Mag |
|---|---|---|---|
| Collatz | 7,997,355 | 96.48 | ~82,891 |
| GPUGrid | 4,595,053 | 212.14 | ~21,660 |
| SETI | 1,266,852 | 775.74 | ~1,633 |

I hope this also gives people an appreciation that one project’s RAC is definitely not equal to another project’s RAC for Gridcoin purposes.
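The RAC/Mag column is just one division per project. A small Python sketch reproducing it from the sample users in the table above (one random user per project, so treat the result as rough):

```python
# (RAC, magnitude) for one random Gridcoin user per project, from the table above.
samples = {
    "Collatz": (7_997_355, 96.48),
    "GPUGrid": (4_595_053, 212.14),
    "SETI": (1_266_852, 775.74),
}


def rac_per_mag(rac: float, mag: float) -> float:
    """RAC needed on this project to earn one unit of magnitude."""
    return rac / mag


for project, (rac, mag) in samples.items():
    print(f"{project:8} ~{rac_per_mag(rac, mag):,.0f} RAC/Mag")
```

The printed values round to the same ~82,891, ~21,660 and ~1,633 shown in the table.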

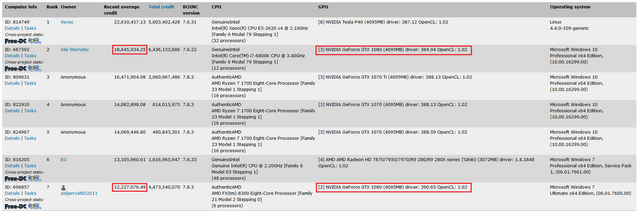

Once we have this information, we head to each project’s website and try to find their “top computers” (or hosts) link. Most of the projects use a very similar template, so most of the time it isn’t difficult to find. For example, for Collatz, you just need to scroll down to “Statistics”, click on that, and then click on “Top computers” under “Statistics for Collatz Conjecture”. You’ll want to do this for every project you’re comparing. Continuing to use Collatz as our primary example, we’ll want to look through the list for hosts with GTX 1080s. Looks like we’ve got two hosts with multiple 1080s in them that seem to follow a trend. Take a look at the image below.

The second and seventh ranked hosts have multiple 1080s, and if we do some quick math (host RAC divided by the number of cards), it looks like each 1080 is outputting roughly 6 million RAC for these hosts. So we can add that to our table. Repeat the same process for the other projects. I’ve put the results in another table below, where “Mag per 1080” is the per-card RAC divided by the project’s RAC/Mag.

| Project | RAC/Mag | One 1080's RAC | Mag per 1080 |
|---|---|---|---|
| Collatz | 82,891 | ~6,000,000 | ~72.38 |
| GPUGrid | 21,660 | ~700,000 | ~32.32 |
| SETI | 1,633 | ~40,000 | ~24.49 |
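The second table chains two divisions: a multi-GPU host's RAC divided by its GPU count gives a per-card RAC, and that divided by the project's RAC/Mag gives the magnitude one card should earn. A minimal Python sketch; the four-GPU host at 24,000,000 RAC is a hypothetical stand-in for the Collatz hosts in the screenshot, and it assumes every card in a host contributes equally.

```python
def per_gpu_rac(host_rac: float, gpu_count: int) -> float:
    """Split a multi-GPU host's RAC evenly across its (assumed identical) cards."""
    if gpu_count < 1:
        raise ValueError("gpu_count must be at least 1")
    return host_rac / gpu_count


def mag_per_gpu(gpu_rac: float, rac_per_mag: float) -> float:
    """Magnitude one card should earn on a project, given that project's RAC/Mag."""
    return gpu_rac / rac_per_mag


# Hypothetical Collatz host: 4 x GTX 1080 with 24,000,000 RAC in total.
collatz_card = per_gpu_rac(24_000_000, 4)  # 6,000,000 RAC per card

# (RAC per GTX 1080, project RAC/Mag) from the tables above.
estimates = {
    "Collatz": (collatz_card, 82_891),
    "GPUGrid": (700_000, 21_660),
    "SETI": (40_000, 1_633),
}
for project, (rac, ratio) in estimates.items():
    print(f"{project:8} ~{mag_per_gpu(rac, ratio):.2f} mag per GTX 1080")
```

This prints the same ~72.38, ~32.32 and ~24.49 as the table.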

You can see I’m making very rough generalisations with the RAC per GTX 1080, because there’s not much point in being too precise with these sorts of calculations when there are a billion other factors that can influence what your max RAC is. These include:

- Amount of time spent crunching

- Any overclocking applied on the GPUs

- CPU bottlenecking (i.e. not enough CPU resources available for GPU tasks)

- Other GPU limitations such as thermals, power and voltage

- What GPU driver version you’re using

- The list goes on…

So what conclusions can we draw from this data? Collatz is clearly the superior choice for pure earnings, but (subjectively) its research may not be as useful as GPUGrid’s or SETI’s. Which project you choose will depend on those two factors I mentioned earlier in the article.

So I’ll close up this article with a couple of notes that might be useful for you.

Some notes

Hotbit published an article about the mathematics behind the RAC calculation and how you can estimate how much of your max RAC you’ll have at various points in time. Here’s a link to the article in full, but the numbers that are most interesting to me are:

- ~10 % of your max RAC after 24 hours (extrapolated from graph, not explicitly stated in article)

- 50 % of your max RAC after 7 days

- 75 % of your max RAC after 14 days

- 88 % of your max RAC after 21 days

- 94 % of your max RAC after 28 days

- 97 % of your max RAC after 35 days

So you can see it really takes a while for your RAC (and therefore earnings) to build up. So don’t be concerned if you’re only making a few GRC a day after crunching for a couple of days. As you can see, it takes roughly a month before you get close to your max RAC.
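Hotbit's numbers line up with BOINC's definition of RAC as an exponentially weighted average with a half-life of one week: if you crunch at a constant rate starting from zero, the fraction of your max RAC reached after t days is 1 - 2^(-t/7). A short Python check, assuming perfectly steady output (which real hosts won't have):

```python
HALF_LIFE_DAYS = 7.0  # BOINC's RAC half-life of one week


def fraction_of_max_rac(days: float) -> float:
    """Fraction of steady-state RAC reached after crunching for `days` from zero."""
    return 1.0 - 2.0 ** (-days / HALF_LIFE_DAYS)


for days in (1, 7, 14, 21, 28, 35):
    print(f"day {days:2}: {fraction_of_max_rac(days):.1%}")
```

This gives about 9.4 %, 50 %, 75 %, 87.5 %, 93.8 % and 96.9 %, matching the list above to within rounding.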

If you're looking for a bit more of an in-depth series on GPU crunching, I'd recommend Vortac's series of articles regarding his experiences with GPUs and various projects. Links to parts: one, two, three, four, five, six, seven and eight.

Speaking of GPUs, I’ve compiled a spreadsheet that has the theoretical floating point operations per second (FLOPS) for various GPUs for single precision and double precision compute. This matters in a few scenarios. Milkyway@home is the big example, which I briefly noted in the project list earlier: Milkyway uses double precision compute for its GPU work units. This is why you’ll find GTX Titans and 280Xs topping the “top computers” list for Milkyway, as they have relatively high double precision compute. For example, an R9 280X has more FP64 (double precision) compute than a GTX 1080 Ti! That’s why I mentioned that GPUs with a 1/8 FP64 ratio or better are generally suited for Milkyway, and anything else is a waste. One of PrimeGrid’s prime number searches also uses double precision compute. All of the other projects mainly rely on single precision, so you can pick between any of them for most GPUs. Here’s a link to the spreadsheet with columns sorted by FP64 and FP32 (single precision) performance.
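Theoretical FLOPS are simple arithmetic: shader count × 2 operations per clock (one fused multiply-add) × clock speed gives FP32 throughput, and multiplying by the card's FP64:FP32 ratio gives FP64. The Python sketch below uses approximate reference specs (R9 280X: 2048 shaders at 1000 MHz with a 1/4 FP64 rate; GTX 1080 Ti: 3584 cores at a 1582 MHz boost clock with a 1/32 FP64 rate), so double-check them against the spreadsheet or vendor data before relying on them. The 1/8 cut-off is the rule of thumb from the paragraph above.

```python
from dataclasses import dataclass
from fractions import Fraction

MILKYWAY_MIN_FP64_RATIO = Fraction(1, 8)  # rule of thumb from the text above


@dataclass
class Gpu:
    name: str
    shaders: int          # stream processors / CUDA cores
    clock_mhz: float      # boost clock used for the theoretical peak
    fp64_ratio: Fraction  # FP64 rate relative to FP32

    def fp32_gflops(self) -> float:
        # 2 FLOPs per shader per clock (one fused multiply-add)
        return self.shaders * 2 * self.clock_mhz / 1000

    def fp64_gflops(self) -> float:
        return self.fp32_gflops() * float(self.fp64_ratio)

    def suits_milkyway(self) -> bool:
        return self.fp64_ratio >= MILKYWAY_MIN_FP64_RATIO


# Approximate reference specs; verify before relying on them.
cards = [
    Gpu("R9 280X", 2048, 1000, Fraction(1, 4)),
    Gpu("GTX 1080 Ti", 3584, 1582, Fraction(1, 32)),
]
for gpu in cards:
    print(f"{gpu.name:12} FP32 {gpu.fp32_gflops():8.0f} GFLOPS  "
          f"FP64 {gpu.fp64_gflops():6.0f} GFLOPS  Milkyway: {gpu.suits_milkyway()}")
```

With these specs the 280X comes out around 1,024 FP64 GFLOPS against roughly 354 for the 1080 Ti, even though the 1080 Ti has nearly three times the FP32 throughput.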

My personal picks for projects at the moment are:

- For CPU:

  - ODLK1 currently, as it's just been whitelisted, so there are very few users to compete with.

  - Before ODLK1, it was VGTU, but you could pick any of the CPU projects in the lower half by user count and you'd probably be fine.

- For GPU:

  - For my double precision wielding R9 280Xs, I'm running Milkyway.

  - For my single precision GTX 1080 Ti, I'm running Enigma as a sort of middle ground between earnings and 'usefulness'.

Alright well I think that about wraps it up, feel free to leave a comment and good luck with your crunching!

This is quite an interesting read, the content is good. Thanks.


Very worthy information, thanks for sharing.

Keep on BOINCing!

If you're trying to predict RAC, have a look at the gridcoin mining experiment I have been running for many months. It's much more complex than it looks at first sight. Following the reasoning in this article would give you one of the early exponential curves (estimations), but the actual RAC didn't really follow that at all.

I have seen many of these 'predictions'. The thing is, nobody really keeps track of the RAC to check it against those predictions. At this point in time something very interesting is happening, at least I think so. I will post about it shortly.

Low-user projects are a bit weird in that they fluctuate: magnitude can skyrocket or dip in a few days. I think it has to do with people who use their 48-core Xeons and the like on these projects to cash in GRC when idle.

Nice! One thing though, where can I find a random user's RAC/Mag?

On the pool's report page, only the top 100 by magnitude are visible for each project, and some projects are not visible in the top 100 at all. For example, ODLK1 is not yet listed...

As this changes over time, it would be nice to have a source for that information...

Use gridcoinstats.eu's project list here to look through the various members for different projects. At the moment the site appears to be on a fork so the data isn't 100% accurate.

Great! Thanks!

I'm in the process of writing a mining calculator based on this post. Do you know if the information on gridcoinstats.eu is available via an API? Currently I go with screen scraping, but an API would be much more convenient.

Additionally, is the statistics information on the projects' homepages available via an API as well? As with the other data, I currently go with screen scraping, and I have realized that some projects prevent screen scraping (YAFU, for example), and it's generally seen as offensive to do this.

Hi @holger-will.

I'm the developer of gridcoinstats.eu, known as startail in the community and going under the nick @sc-steemit here.

At present there is no public API access to the data on the page, but I have plans to make one.

Can you please specify what type of data you would like to be able to retrieve, either by posting a reply here or by sending me a message on http://steemit.chat, to the same nick as here.

Thanks for offering some help!

What I need is a random user's RAC, MAG and GRC for each project, so I can calculate MAG per RAC for each project.

That's the information I get from your site. I store that information in an SQL database, so I won't need it very often... maybe once per day or so. I don't know how often the MAG per RAC changes, though...

Then I also need the projects' statistics for RAC by host. I don't know if you can provide that information or if it is only accessible on the projects' homepages...

This is an example of the data I need from the projects' homepages: https://boinc.thesonntags.com/collatz/top_hosts.php?sort_by=expavg_credit&offset=0