Hardware and Project Selection Part 1 - CPU vs GPU

As I spend more time browsing the Gridcoin subreddit, it becomes increasingly apparent that a lot of users don't actually know what hardware their computer is made up of. This trend extends to a lot of other cryptocurrency mining operations, where people become increasingly reliant on copy-and-paste executables that make the most of their hardware with very limited user input. In response to this, and in lieu of answering the same hardware questions repeatedly with minimum detail, today I would like to examine the two most important pieces of mining hardware: the CPU (Central Processing Unit) and the GPU (Graphics Processing Unit).

NVIDIA affectionately describes the two components like this:

The CPU ... has often been called the brains of the PC. But increasingly, that brain is being enhanced by another part of the PC - the GPU, which is its soul.

The CPU

The CPU is comparable to the human brain, in that it literally is the central processing unit of your PC. At the most basic level, all a computer does is carry out incredible numbers of simple mathematical operations, and the CPU is the device that controls and carries out those operations.

To give an example, imagine that we are trying to add together two integers using your PC. You input the numbers into the computer using the keyboard, and the keyboard controller encodes them in binary. These binary values are loaded into the CPU's registers, at which point the CPU uses its integrated Arithmetic Logic Unit (ALU) to carry out the addition. Finally, the CPU passes the result back to whatever output device needs it.

As you have probably realised, adding two integers is an overly simple example, but it gets the point across. In exactly the same manner, your CPU is able to carry out all forms of work, including mining hashes or BOINC tasks.
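The integer addition described above can be sketched in a few lines of Python. This is only a simplified illustration of binary addition with carries, not a model of any particular CPU; the function name `alu_add` and the 8-bit default width are assumptions made for the example.

```python
def alu_add(a, b, width=8):
    """Add two unsigned integers using only bitwise operations.

    Hypothetical teaching sketch: XOR produces the sum bits without carries,
    AND followed by a left shift produces the carries, and the loop repeats
    until no carries remain. Results wrap around at `width` bits, as they
    would in a fixed-size register.
    """
    mask = (1 << width) - 1          # keep values inside the register width
    a &= mask
    b &= mask
    while b:
        carry = (a & b) << 1         # positions where both bits are 1 carry over
        a = (a ^ b) & mask           # sum of the bits, ignoring carries
        b = carry & mask             # feed the carries back in
    return a


print(alu_add(5, 3))     # 8
print(alu_add(255, 1))   # 0, because an 8-bit register overflows and wraps
```

The wrap-around in the second call is the same overflow behaviour you would see in a real fixed-width register.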

The GPU

Every single PC on the market today will have a chip that is able to render the images you see on the monitor. However, there is a vast array of these chips. The most basic models are integrated with almost every motherboard and are only capable of low-res video. The GPU is a component in its own right that transcends this limited functionality, and is a programmable powerhouse that does so much more than just render video.

GPUs are optimised for taking colossal data sets and performing the exact same operation on every element, running many threads simultaneously, and doing so very quickly. Problems of this kind are called 'embarrassingly parallel'. To understand why the GPU is so good at jobs such as this, we have to take a look at the architectural differences between the CPU and the GPU.

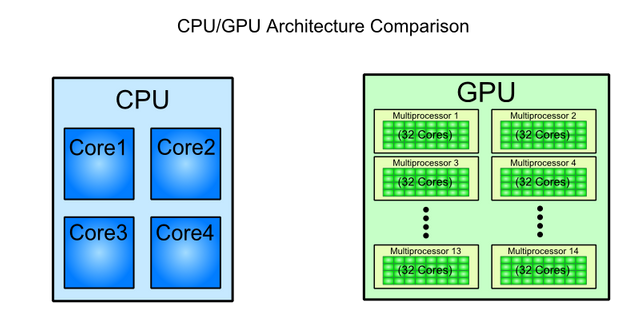

A CPU is composed of a few cores (the most powerful i9 processor is expected to boast 18) with a lot of cache memory, and can handle a few parallel processes. GPUs, on the other hand, can have many thousands of cores that allow them to handle huge numbers of parallel processes simultaneously. The ability of a GPU to run such a massive number of simultaneous threads means that for repetitive tasks such as mining, the GPU vastly outperforms even much newer CPUs.
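To make 'embarrassingly parallel' concrete, here is a minimal Python sketch. The function `work_unit` is a hypothetical stand-in for one independent task (one hash attempt, say); it is not taken from any real miner or BOINC project. Because no task depends on another, the work can be handed to any number of workers and the results are identical to running it serially.

```python
from concurrent.futures import ThreadPoolExecutor


def work_unit(x):
    # Hypothetical stand-in for one independent task: the result depends
    # only on its own input, never on any other task's result.
    return (x * x + 1) % 97


def run_serial(data):
    # One worker handles every element in turn.
    return [work_unit(x) for x in data]


def run_parallel(data, workers=4):
    # The same work spread across several workers; pool.map preserves order.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(work_unit, data))


data = list(range(1000))
print(run_serial(data) == run_parallel(data))   # True
```

Python threads are used here only to show the structure of the problem; they will not give GPU-like speedups for CPU-bound work. On a GPU, each element would map to one of thousands of hardware threads.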

You might be wondering at this stage why GPUs, which came into existence in their current form far later than CPUs, have outperformed their older brother. The reason is the gaming industry. The amount of money available from the gaming industry, combined with its endless demand for higher fidelity, has pushed GPU development into the fast lane. While this is great for most applications, there have been some unfortunate consequences. Primarily, games do not require high-precision arithmetic, so single precision (FP32) GPUs have pushed double precision (FP64) GPUs out of the mainstream limelight and banished them to being research models. However, that is a discussion we can have another day.
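The practical difference between FP32 and FP64 is easy to see on any machine. The sketch below uses Python's standard `struct` module to round a value to single precision; the helper name `to_fp32` is an assumption made for this example. Python's own floats are FP64.

```python
import struct


def to_fp32(x):
    """Round a Python float (FP64) to the nearest single precision (FP32) value."""
    return struct.unpack("f", struct.pack("f", x))[0]


big = 16_777_216.0                 # 2**24, where FP32 runs out of whole-number precision

print(big + 1.0)                   # 16777217.0 in FP64
print(to_fp32(big + 1.0))          # 16777216.0 in FP32: the +1 is lost
print(to_fp32(0.1) == 0.1)         # False: FP32 stores a coarser approximation of 0.1
```

A game will never notice an error of that size in a pixel colour, but a scientific simulation that accumulates billions of such operations can, which is why some research workloads insist on FP64.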

The Take Home Message

If you remember nothing else from this article, remember that:

- The two key components in your PC that you can utilise for mining are the CPU and the GPU. Whether you are 'mining' by running BOINC or doing something more traditional, that still holds true.

- The CPU and GPU have to be put to work and optimised independently to get the most out of your machine.

- GPUs are generally more power and cost efficient than CPUs for mining. There are exceptions.

Next time we touch base, we will be looking at how to select appropriate BOINC projects based on your CPU and GPU.

In the meantime, keep crunching!

Would it be at all practical to reverse engineer video cards to make multi-GPU boards to handle things like mining, or is that essentially what an ASIC does?

Do you mean having multiple GPUs fixed on the same motherboard? Sure, this is already done in a lot of mining rigs. However, if you had the money to invest, another option for GPU mining would be to buy a server/research GPU. These GPUs have incredibly large numbers of cores. For example, the V100 GPU Accelerator from NVIDIA's Tesla range (an FP64 focussed card) has 5120 cores in a single GPU.

ASICs are a bit different, in that they are pieces of hardware designed to only do one job, and do it incredibly well. In terms of mining capabilities, ASICs are far superior to any GPU. However, the problem with such specialized hardware is that it can do pretty much nothing else - BTC ASICs mine BTC, and that is it. There are some rare exceptions, such as chips that mine BTC and LTC, but this is because the chip package effectively incorporates two ASICs, one for each coin. An ASIC could never, for example, render your display.

Ah I see ... I think something like the server gpu is what I was talking about, that way I could still mine pools and get a variety of payouts. I'm guessing the investment for something like that is obscene though and the ROI would be prohibitive?

We could do the math right now if you like!

The V100 GPU Accelerator is FP64 orientated, so let's look at the FP64 project MilkyWay@Home. Vortac is the top miner in that project, and it generates him an income of about 180 GRC/day. He does this using 6 Radeon HD7970s, which supply 950 GFLOPS of FP64 processing per card. That works out to 180 / (6 × 950) ≈ 0.032 GRC per GFLOPS per day.

As the V100 GPU Accelerator has 5120 GFLOPS of FP64, it would have a predicted yield of 162 GRC/day.

Although the yields of the 6 HD7970s and the single V100 GPU Accelerator are similar, 6 HD7970s would set you back maybe USD$1000, while a single V100 GPU Accelerator is USD$15000. In terms of power consumption the V100 GPU Accelerator is far superior, but the difference in start-up capital required makes it unfeasible.
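For anyone who wants to rerun that math with their own hardware, here it is as a short Python sketch. All input figures are the ones quoted in this thread (180 GRC/day, 6 cards at 950 GFLOPS, 5120 GFLOPS, USD$1000 and USD$15000); the function names are made up for the example, and electricity costs are deliberately ignored.

```python
def grc_per_gflops_day(grc_per_day, cards, gflops_per_card):
    # Daily earnings divided by the total FP64 throughput that earned them.
    return grc_per_day / (cards * gflops_per_card)


def predicted_yield(rate, gflops):
    # Assumes earnings scale linearly with FP64 throughput.
    return rate * gflops


def usd_per_daily_grc(price_usd, grc_per_day):
    # Up-front hardware cost for each GRC/day of earning capacity.
    return price_usd / grc_per_day


rate = grc_per_gflops_day(180, 6, 950)          # about 0.0316 GRC per GFLOPS per day
v100_yield = predicted_yield(rate, 5120)        # about 162 GRC/day

hd7970_cost = usd_per_daily_grc(1000, 180)      # about USD 5.56 per GRC/day
v100_cost = usd_per_daily_grc(15000, v100_yield)

print(round(rate, 4), round(v100_yield))
print(round(hd7970_cost, 2), round(v100_cost, 2))
```

On these numbers, the V100 costs many times more per unit of daily earnings than the HD7970s, which is the start-up capital problem described above. Swap in your own card's FP64 GFLOPS and price to compare.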

To get best ROI, especially in the FP64 GPU applications, older hardware is the way to go. Very specific pieces of older hardware, mind you. Research is required.

My Milkyway numbers are actually way down (at least 30%) because of the summer and the latest heat wave here. I had to severely downclock all my GPUs, plus I have to turn BOINC off during the day otherwise it's simply too hot and I have to run my AC at max power all the time.

There is even something between a GPU (which can theoretically run everything, as can Minecraft ;) ) and an ASIC. This is called an FPGA (Field Programmable Gate Array).

As the name says, those things can be programmed, but differently than CPUs (hard to explain).

An FPGA can only do the task it is programmed to do; you cannot run 2 different programs without reprogramming it. But you can run any program, so you could first mine BTC and then Ether.

Because of their structure they are likely to be faster than CPUs, maybe even a bit faster (or more energy efficient) than GPUs, but you would have to write a program first, for every single different FPGA. So it is not worth the trouble.

An FPGA might be something useful for the pool I use (nicehash), because I could potentially program one to run Cryptonight and one to run Equihash, thus increasing my payout for both.

It's less a matter of reverse engineering video cards but rather porting CPU work to GPU (or FPGA). If you can do this and realize performance gains then you could earn serious GRC.

I'm a bit too new to know what GRC stands for. I'm guessing it relates to H/s?

GRC stands for Gridcoin. It is the abbreviation used on the markets.

You could compare it to how we sometimes write United States Dollars as USD.

Got it. It's a currency I haven't done any research on. I've mostly been looking at ways to improve my payout from nicehash, and maybe get a piece of the Ethereum mining action. Is GRC easier to mine?

Once you are set up, it is exactly the same amount of effort as any other coin. Getting set up requires a little more work though, as Gridcoin pays out based on contributions to scientific research done through the BOINC platform. You can't 'mine' GRC in the traditional sense; the compute power of the network is actually put to use here.

There are lots of great tutorials on getting set up, both written and video. If you have a little patience, you could be fully set up mining in a pool in an hour or two. To mine solo, give it maybe 2 days of intermittent activity.

Great write-up, thanks for the info

Great article. I just got started with Gridcoin, and I love it!

Great - and thank you for the feedback!

Sing out if you have any questions. =)