Hardware and Project Selection Part 2 - GPU Projects

For the second part of this GRC mining miniseries I would like to talk about getting the most out of your GPU in terms of the most meaningful mining metric - GRC/day. For most other cryptocurrencies, this takes the form of downloading the most recent miner (usually as suggested by the coin's wiki) and running the program. For Gridcoin, optimising yield is a little more complicated.

Before we begin, I would like to point out that this article will not address pet projects or which science is more worthwhile. There is definitely a range of projects from pure theory to directly applicable medical research. However, the more interesting projects tend to not have the best return on investment. A lot of BOINC veterans (myself included) get around this issue by spending part of our compute on the projects we believe in and support the most, and another part on making a profit. In the end, it's the philanthropic approach that sets Gridcoin apart from the other 700+ altcoins, and if you were 100% profit driven you would likely be mining something like ETH instead.

Understanding Your GPU

GPUs come in two distinct flavours - single precision focused (FP32) and double precision focused (FP64). That means literally what you think it means, as FP32 calculations use 32-bit floating point operations and FP64 calculations use 64-bit floating point operations. Which can your graphics card do? Well, both, in all likelihood.

All cards can carry out FP32 calculations at some base rate, and most can carry out FP64 calculations in lieu of FP32 ones at between 1/32 and 1/2 of that rate, measured in GFLOPS. As a general rule of thumb, if the FP64 rate is 1/4 of the FP32 rate or better, you will want to dedicate your card to an FP64 project. Otherwise, dedicate it to an FP32 project.

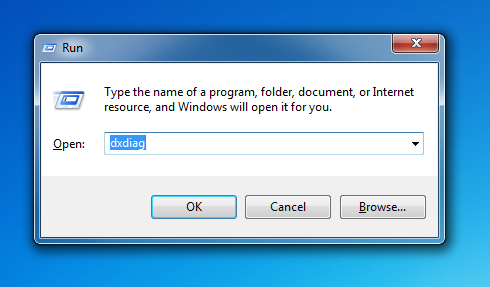

To find out how your particular GPU performs, find out the model and then look up the series on Wikipedia. If you are running Windows (which most of you are), the easiest way to do this is hitting Start, typing 'run', and entering 'dxdiag'.

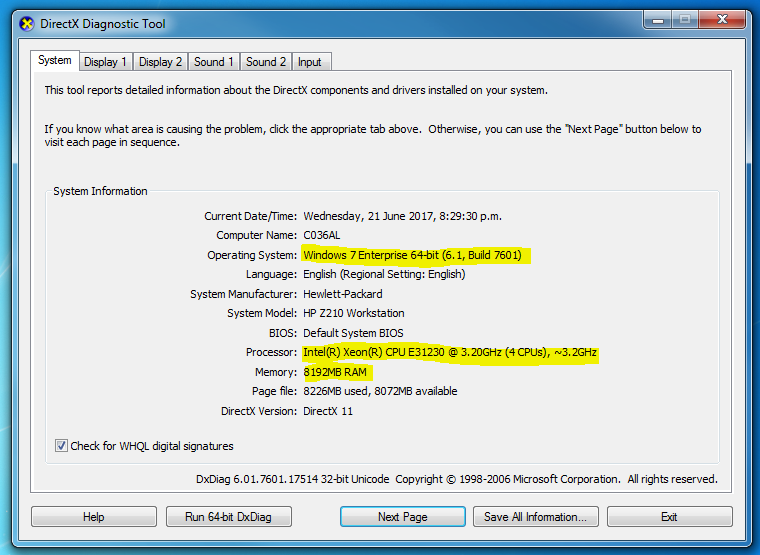

If you are asked whether or not you would like to check if your drivers are digitally signed, choose 'no'. You will now be presented with a screen like this:

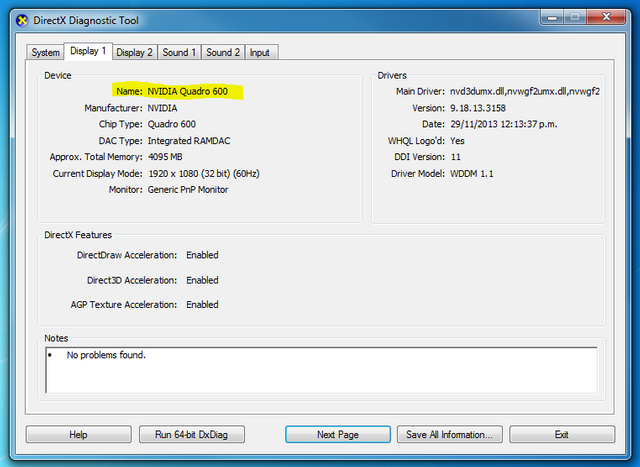

Note how this screen lists a lot of your PC's specs, such as the OS, CPU and RAM. Navigate to the second tab, marked 'Display 1' to find out what GPU your machine has installed:

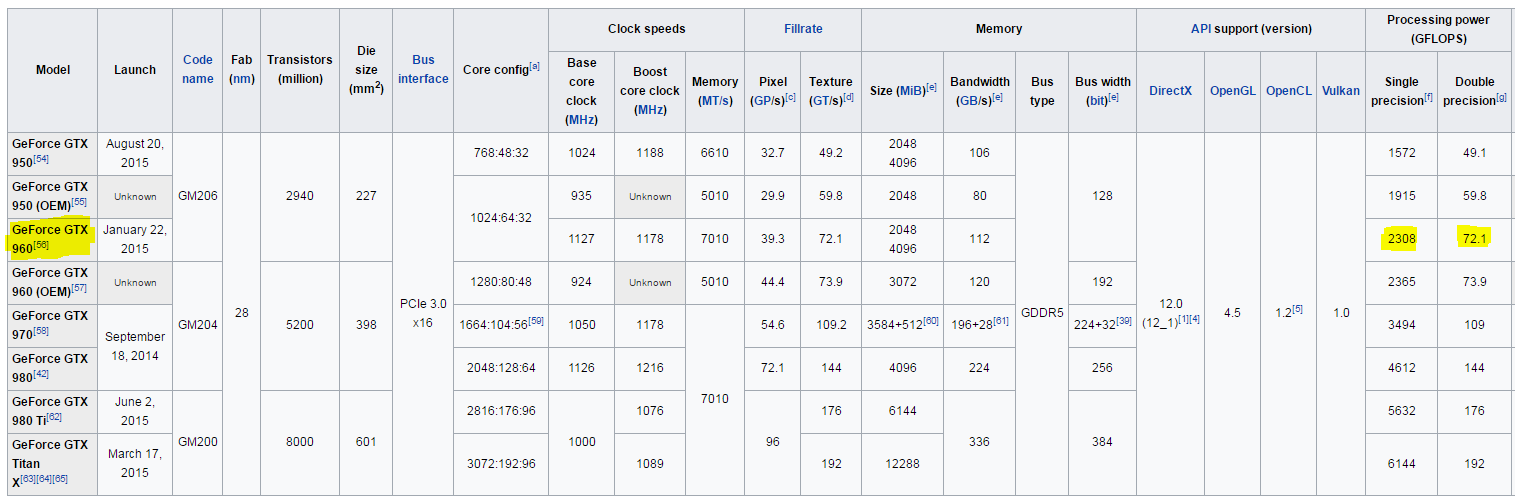

In my case the GPU is an NVIDIA Quadro 600, which is old and not much use anymore. From here on, let's pretend I had a relatively common gaming card installed - a GeForce GTX 960. This GPU comes from the GeForce 900 series, so let's look that up on Wikipedia and scroll down to the products summary:

Unless you have a large screen you may have trouble reading those numbers, but click the image to go straight to the Wikipedia page. The numbers we are interested in are in the processing power columns. For the GeForce GTX 960 these show 2308 GFLOPS of FP32 and 72.1 GFLOPS of FP64. That is an FP64 rate of roughly 1/32 of the FP32 rate, well below the 1/4 threshold, so we would want to task a GeForce GTX 960 to a single precision project.
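If you would rather not do the division by hand, the rule of thumb from earlier can be written in a few lines of Python. This is only a sketch: the function name `recommend_precision` and the `threshold` parameter are my own labels for the 1/4 rule in this article, and the GFLOPS figures are the ones quoted above for the GTX 960.

```python
def recommend_precision(fp32_gflops, fp64_gflops, threshold=0.25):
    """Apply the article's rule of thumb.

    Returns "FP64" if the FP64 rate is at least `threshold` (1/4 by default)
    of the FP32 rate, otherwise "FP32".
    """
    if fp32_gflops <= 0:
        raise ValueError("fp32_gflops must be positive")
    ratio = fp64_gflops / fp32_gflops
    return "FP64" if ratio >= threshold else "FP32"


# Figures quoted in this article for the GeForce GTX 960.
gtx960_ratio = 72.1 / 2308  # roughly 1/32
print(f"FP64/FP32 ratio: {gtx960_ratio:.4f}")
print("Recommended project type:", recommend_precision(2308, 72.1))
```

Swap in the two processing power numbers for your own card to get a recommendation. The threshold is a rule of thumb rather than a hard limit, so cards sitting close to 1/4 are worth testing on both kinds of project.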

Picking The Project

Having found out whether to apply your GPU to a single or double precision project, you now need to select the specific project to crunch. In the case of FP64 projects, your choices are severely limited: at the time of writing, MilkyWay@Home is the only option. In future, it is likely that many more FP64 projects will appear on the BOINC scene, as many modern modelling applications need FP64 accuracy.

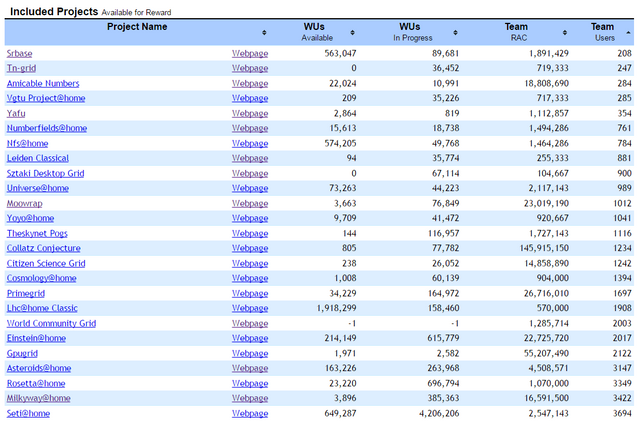

If you need to select an FP32 project, your first step is to go to the Gridcoin Whitelist and check what GPU projects are available. Then, go to the Gridcoinstats Website and sort all the whitelisted projects by number of hosts.

In general, fewer hosts means less competition and thus a higher payout for the work you do. This is because the total GRC mined each day is split evenly across all whitelisted projects, and each project's share then goes to its contributors in proportion to the work they do. As a result, your aim is to pick the project in which your hardware contributes the greatest percentage of the total compute. Pick one of the least populated GPU projects from this list, and assign your GPU to that. Good choices at the time of writing are PrimeGrid and Amicable Numbers.
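To see why a less crowded project pays better, here is a minimal sketch of the reasoning in Python. It assumes a simplified model in which the daily GRC is split evenly between whitelisted projects and your cut of a project equals your share of that project's total credit. The function name and every number in the example are placeholders for illustration, not real network figures.

```python
def estimated_daily_grc(my_rac, project_total_rac, daily_grc_pool, n_whitelisted_projects):
    """Estimate daily GRC from one project under an even-split model.

    my_rac and project_total_rac must both be measured within the SAME
    project, because each project awards credit at its own rate.
    """
    if project_total_rac <= 0 or n_whitelisted_projects <= 0:
        raise ValueError("totals must be positive")
    per_project_pool = daily_grc_pool / n_whitelisted_projects
    my_share = my_rac / project_total_rac
    return per_project_pool * my_share


# Placeholder numbers: the same card holding 10% of a small project
# versus 1% of a crowded one.
small = estimated_daily_grc(100, 1_000, daily_grc_pool=1_000, n_whitelisted_projects=10)
crowded = estimated_daily_grc(100, 10_000, daily_grc_pool=1_000, n_whitelisted_projects=10)
print(f"small project: {small:.2f} GRC/day, crowded project: {crowded:.2f} GRC/day")
```

The point of the sketch is the ratio between the two results, not the absolute values. Your percentage of a project's compute is what drives the payout.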

CPU Processes

Some projects supply both CPU and GPU jobs. Because GPUs vastly outperform CPUs, the CPU jobs in these projects will pay out very little and are not worth running. Make sure you elect not to receive such jobs through the project settings on your chosen project's home page.

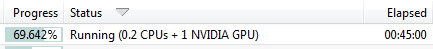

While we do not want to be running CPU-only jobs in a GPU project, a job is never 100% GPU based. GPU jobs always require some degree of CPU co-processing, which is why you will often see this in your BOINC manager:

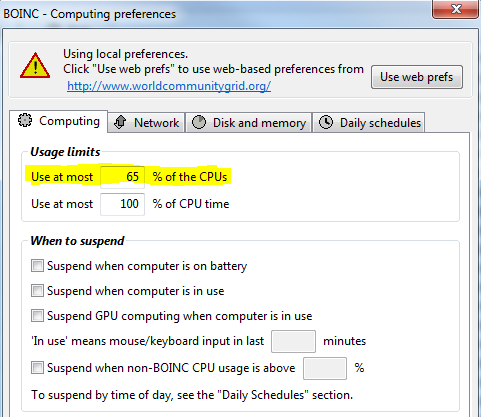

This co-processing is required for your GPU to do its job. As such, it is important to not fully load your CPU with another project, as this will starve the GPU of its co-processing and effectively throttle it. In your BOINC settings, under options --> computing preferences, reduce the % of CPUs used until your PC is not running at 100% CPU load:

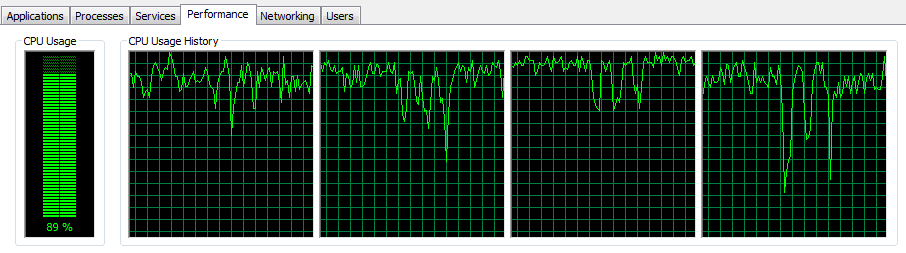
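If you want a starting value to type into that box rather than guessing, the arithmetic can be sketched as follows. The helper name `cpu_percent_leaving_free` is my own, and it assumes you want to keep a whole number of cores free for GPU co-processing. How BOINC turns the percentage into a core count is not covered here, so treat the result as a first guess and adjust it while watching your CPU load.

```python
import math
import os


def cpu_percent_leaving_free(total_cores, cores_to_leave_free=1):
    """Return a whole-number '% of CPUs' value that leaves some cores unused.

    The percentage is rounded up so that it still covers all of the cores
    you do want BOINC to use, and at least one core is always kept usable.
    """
    if total_cores < 1:
        raise ValueError("total_cores must be at least 1")
    usable = max(1, total_cores - cores_to_leave_free)
    return math.ceil(100 * usable / total_cores)


# os.cpu_count() reports logical cores and may return None on some systems.
cores = os.cpu_count() or 1
print(f"{cores} logical cores -> try {cpu_percent_leaving_free(cores)}% as a starting point")
```

If you run more than one GPU, or your project's jobs lean heavily on the CPU, you may need to leave more than one core free.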

The goal is for your CPU load to remain high, but not repeatedly cap at 100%.

Further GPU Optimisation

Once you have gone through the above steps you can further optimise your GPU performance by doing one or more of the following:

- Overclocking and overvolting your GPU

- Actively electing whether to run OpenCL or CUDA jobs

- Running multiple projects concurrently on each GPU to minimise downtime

However, these are all outside the scope I would like to go into here. If you have any questions about this, feel free to leave a comment below and I would be happy to help. If you are serious about getting the most mag from your card, I would recommend Vortac's series on GPU mining.

Good luck, and ask if you would like any more help!

The best for Ether will be the HBM2 cards. =)

GridCoin projects still benefit a lot more from NVIDIA. But soon that will change.

AMD is planning... now! In the past, it was just trying to get products into the market. But now they have understood how to face Intel. Floating point bit-wise-dynamic registers will be something amazing on the new cards. I foresee versatility for mining and, ultimately, very good performance on average, over time.

For those who are interested in maximum BOINC GPU performance, these two articles provide some extra tips and hints:

https://steemit.com/gridcoin/@vortac/gridcoin-gpu-mining-6-obtaining-the-maximum-performance-out-of-your-gpus

https://steemit.com/gridcoin/@vortac/gridcoin-gpu-mining-8-to-the-edge-and-beyond

These articles are fantastic, and probably the best source of information on earning the most GRC/day from your GPU.

Thoroughly recommended.

Excellent post @dutch!

And now, if you allow me, I present you the best mag-for-$ GPU available on the market today:

http://www.ebay.com/itm/AMD-HD-Radeon-7970-3gb-GPU/292157192833

Yes, it's the HD7970 selling for $70 on eBay! Deprecated in the eyes of gamers and Proof-of-Work junkies, but hidden gold for Gridcoin miners, yielding up to 1.2 TFLOPS in FP64 (when properly overclocked). Point this classic GPU to MilkyWay@Home (an FP64 project) and you'll get to mag 150 in no time. That is about $0.50 per mag. I dare you to show me a better deal with any other device.

But hurry, because competition in MilkyWay@Home is getting rather strong :)

Wow, that really is a buck well spent. I'm new to Gridcoin and I'm currently reading up on what it is. Do you have any quick tips for beginners please? :D

Sure. Follow any of the guides on getting set up and begin with just the hardware you already have. There is no need to invest in expensive equipment off the bat. Work on getting the hang of the platform and getting the most out of your current system.

You can earn GRC with a laptop if you want. You wouldn't earn much, but it's definitely feasible.

Ask away if you have any questions at all! =)

Hi @dutch, cool, thanks for that! :)

I'm still getting the hang of how it works, but I have been able to grasp the basics of BOINC. I have a GeForce GTX 960. If I do a straight month of mining, do you have a rough estimate of how much GRC I'm going to mine? Thanks!

We can do a rough estimate based on a GTX 960 in my cluster. I have an NVIDIA GeForce GTX 960 (2048MB) which contributes 120,000 RAC (Recent Average Credit) of the total 775,000 RAC I have in 'Moo! Wrapper'. It is running with stock settings.

The total income I earn from this project is 38 GRC/day. That means the GTX 960 is making about 6 GRC/day, or 180 GRC/month. I have only been running this card for about 2 weeks though, and RAC does not level off until about a month in, so the GTX 960 making 200 GRC/month in Moo! Wrapper seems like a fair estimate.
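For anyone who wants to repeat this estimate with their own numbers, the arithmetic can be sketched in Python. The function name `card_income` is just a label for this example, and the figures are the ones quoted above. It assumes the card's income is proportional to its share of my RAC in the project.

```python
def card_income(card_rac, total_rac, project_grc_per_day, days=30):
    """Split a project's daily income by one card's share of the RAC.

    Returns (GRC per day, GRC over `days` days) for that card.
    """
    if total_rac <= 0:
        raise ValueError("total_rac must be positive")
    per_day = project_grc_per_day * (card_rac / total_rac)
    return per_day, per_day * days


# Figures from this reply: one GTX 960 in 'Moo! Wrapper'.
per_day, per_month = card_income(120_000, 775_000, 38)
print(f"{per_day:.2f} GRC/day, {per_month:.1f} GRC per 30 days")
```

This comes out a little under the rounded 6 GRC/day. The gap up to 200 GRC/month is my allowance for the RAC still climbing, not something the calculation itself produces.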

You could probably make a bit more if you ran PrimeGrid or Amicable Numbers. You could also earn more if you set up your GPU to run OpenCL instead of CUDA jobs (CUDA is NVIDIA exclusive and less efficient in Moo! Wrapper). The latter is for advanced users though.

Ahh, I see. Thanks for that. I'm currently reading up how to set up so I can start BOINCing! :) Greatly appreciate your help. I'll keep this noted. :)

You're very welcome! Pop back and ask if you would like help with anything.

Cool, thanks for that. I'll keep that noted. :D

By "I have an NVIDIA GeForce GTX 960 (2048MB) which contributes 120,000 RAC", do you mean, one machine that has one NVIDIA GeForce GTX 960?

Correct. I actually have several machines with an NVIDIA GeForce GTX 960, but that number is for one card in one machine.

You found one for $70!? If I were in the USA, I would buy that. Pity that shipping to NZ costs an arm and a leg. I can't think of any other hardware that even comes close to $0.50/mag...

Fantastic to see older hardware being put back to use instead of being sent off to the scrap heap.

I am not from the USA either, but you can find them for cheap everywhere. I stockpiled 9 of them already :)

Of course, when buying used hardware, one has to be extra careful. To obtain mag 150, BOINC will have to load it very hard - it's worth paying double if the card was never overclocked or was seldom used. If it's on its last legs, with "an artefact or two", better steer clear, because BOINC will wreck it completely.

If it shows an artifact or two it is already broken, just hasn't realized it :D

"No, it's only when it's cold, you know, it's the power circuitry, it works best when it's warmed up or something like that. Anyways, I can play BF1 at 60fps with that puppy, it's a great card, it is... yes OK, 60 bucks then?"

You may be able to repair it by overheating it even more, the molten metal then connects the problematic pieces together again.

Yes, that is possible. I don't recommend trying it though ^^ I just know it from one type of card where the solder (which flows at lower temperatures than the rest) could really re-flow and make cards work again.

The guy used a microwave I think.

I'm dying.

Is there another card that comes close? The HD7970s I could find used on ebay are €120+ here in Europe :(

Titan Black comes close, but they are much more expensive.

Oh hey, that's my card! I got it cheap off ebay from a former btc miner who was disappointed with his results. His loss is my gain.

Hey, I just found this series. Would have saved me a ton of time if I had had these available back when I started. I'm saving them and will refer people to them when pertinent questions are raised. Thanks!

You're very welcome! Good to have you around and I'm glad you got some use out of my writing. =)

@dutch Thank you so much for your articles. I just started mining GRC yesterday and your articles have been the best for understanding how to get everything going. I still have questions, but trying to post them in the appropriate articles.

Question: When it comes to picking a project, you mention looking for one that has the lowest Team Users, but wouldn't you want to first choose one based on the Team RAC? For example, Amicable has 492 Team Users but a Team RAC of 38,882,070, which is pretty high competition. SETI has 4,297 Team Users, but a Team RAC of 3,571,429, so a lot more users than Amicable, but way less competition. So wouldn't you be better off picking SETI?

Welcome to the team, and great to hear my articles have been useful! =)

You are right that project selection is actually more complex than just picking the lowest number of users:

RAC is a measure of the rate at which credits are earned. The problem with using this to compare projects is that each project sets its own rate at which credits are awarded. For example, SETI is notorious for giving out credits significantly more slowly than any other project. I went from 20 million RAC on Moo! Wrapper to 800 thousand RAC on SETI, with the same hardware. Therefore, it is not a very good measure of which project has the best return for your hardware.

The number of users will give you a better idea of competition, but the problem here is that we have no easy way of telling how many of those users are still active 24/7.

Currently, the best GPU project is Enigma. The best CPU project is Yafu.

Thanks for the tip, I switched my gaming PC (i7-6700K, GTX 980 Ti) over to Enigma and Yafu. I would love to learn more about how you do your research on which projects to pick. I also plan on hooking up the Raspberry Pis I have lying around and possibly two old computers I have. I'm trying to learn as much as I can about BOINC, Gridcoin, and cryptocurrency so I can have a better understanding of everything, and not just blindly choose settings because XYZ says it's the best. I've really been enjoying your articles.

Feedback like yours makes it all worth it - very much appreciated! =)

That PC will net you a decent income, as it is very much near the top end of what hardware is available. Of course, every little bit helps so your small PCs and R-Pis will increase your yield somewhat. If you want to talk more about R-Pis, @scalextrix is your man.

There is a project in development that has not been released which gives real time yield statistics. I used to verify its accuracy, but now I just trust its outputs blindly and it has never failed me. Expect a public release later this year.

I started mining GRC last Friday and after googling, reading through tons of forum posts and even making posts on the Gridcoin subreddit I still felt like I was in the dark about how to optimize the process. Your post has cleared up any confusion I had, thanks!

Thank you for the feedback. I really appreciate it.

And, of course, welcome to the family! =)

How do you analyse the trade-off between the benefit of an FP64 project such as MilkyWay@Home and the fact that the project has a high number of contributors?

You can look at the top contributing members and their hosts (if they are not hidden) to get a relatively good idea of the payout for a specific piece of hardware.

Of course, that only matters if you are buying new hardware. If you already have an FP64 focussed card, MilkyWay@Home is just the best option.

So far I understood: There is FP32 "mining" and FP64 "mining", correct?

Most of the projects are FP32.

I looked up the 1080, which gets 8228 GFLOPS, and the Titan X (non-Pascal), which gets 10157 GFLOPS. Both figures are FP32. The FP64 numbers don't look that good on those cards.

My question is: Shouldn't those cards be the "best" cards to "mine" Gridcoin? Or am I missing a really huge point?