Do I really need 64GB of RAM for my witness nodes?

TLDR: no.

32GB servers with fast storage such as SSD or NVMe are still good enough to run low-memory nodes. The trick is to keep the shared file on a tmpfs device (backed by RAM) and to place the SWAP on fast disks. @gtg also described this solution in his post Steem Pressure #3, which I highly recommend reading.

From the configuration standpoint, we only need to make sure that the tmpfs and SWAP volumes have enough room to hold the shared file. The Linux kernel takes care of the rest and starts paging when required. In short, paging moves memory pages that don't fit in RAM out to disk and reads them back when they are needed.
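If you want to see whether the kernel is actually paging, one option is to read the swap counters it exposes in /proc/vmstat. The snippet is only a minimal sketch: `swap_counters` is a helper name I made up for illustration, and it assumes a Linux kernel built with swap support, so that the `pswpin` and `pswpout` fields are present.

```shell
# Print the cumulative swap-in/swap-out page counters since boot.
# $1: optional path to a file in /proc/vmstat format (default: /proc/vmstat).
# swap_counters is a hypothetical helper name, not part of any tool.
swap_counters() {
    awk '$1 == "pswpin" || $1 == "pswpout" { print $1, $2 }' "${1:-/proc/vmstat}"
}

# Only query the live counters where /proc/vmstat is available (Linux).
if [ -r /proc/vmstat ]; then
    swap_counters
fi
```

Running it twice, a few seconds apart, and comparing the values gives a rough idea of how many pages were swapped in and out in between.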

My setup

By default on Linux, the size of /dev/shm (tmpfs) is half of the available RAM, so a 32GB server gets a 16GB tmpfs. During my tests the shared file had already grown past 33GB, so before I run steemd I need to remount /dev/shm with a bigger size. I use 48GB for tmpfs and 32GB for the SWAP.

    # mount -o remount,size=48G /dev/shm/
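The sizing logic can be written down as a rough sanity check. This is a sketch under my own assumptions, not an exact rule: `shared_file_fits` is a hypothetical helper, all sizes are whole gigabytes, and it ignores the memory that steemd and the rest of the system need for themselves.

```shell
# Rough capacity check; all sizes are whole gigabytes.
# $1: shared file size, $2: tmpfs size, $3: RAM, $4: swap
# Assumption: ignores memory used by steemd itself and by the OS.
shared_file_fits() {
    # The file must fit under the tmpfs size limit...
    [ "$1" -le "$2" ] || return 1
    # ...and the tmpfs pages must be backed by RAM or swap.
    [ "$1" -le $(( $3 + $4 )) ] || return 1
    return 0
}

# Default tmpfs on a 32GB server (half of RAM) vs. the 48GB remount.
for tmpfs_gb in 16 48; do
    if shared_file_fits 33 "$tmpfs_gb" 32 32; then
        echo "tmpfs ${tmpfs_gb}G: 33G shared file fits"
    else
        echo "tmpfs ${tmpfs_gb}G: 33G shared file does not fit"
    fi
done
```

With the figures from this post, the default 16GB tmpfs fails the check and the 48GB remount passes it.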

How long does it take to replay the 91GB blockchain?

Replay time depends heavily on the hardware, so please don't read too much into the numbers below; I hope they give you at least a point of reference.

I used 4 dedicated servers for my tests:

| Disk configuration | Memory | CPU | Replay time (s) |
|---|---|---|---|
| 1xSSD / SAMSUNG MZ7LN256HMJP | 32 GB | Atom C2750 | 35708 |
| 2xSSD (RAID-0) / Samsung SSD 850 EVO 250GB | 64 GB | Xeon D-1540 | 12762 |
| 2xNVM (RAID-0) / INTEL SSDPE2MX450G7 | 32 GB | Xeon E3-1245 v6 | 10234 |
| 4xHDD (RAID-0) / WDC WD10EZEX-00BN5A0 | 32 GB | i7-4790 | 21456 |

| Disk configuration | Disk read speed | Disk write speed | Passmark | Passmark single core |
|---|---|---|---|---|
| 1xSSD / SAMSUNG MZ7LN256HMJP | 271.61 MB/s | 2.0 MB/s | 3805 | 582 |
| 2xSSD (RAID-0) / Samsung SSD 850 EVO 250GB | 925.36 MB/s | 900 kB/s | 10573 | 1344 |
| 2xNVM (RAID-0) / INTEL SSDPE2MX450G7 | 1.4 GB/s | 43.2 MB/s | 10410 | 2191 |
| 4xHDD (RAID-0) / WDC WD10EZEX-00BN5A0 | 678.43 MB/s | 126 kB/s | 9998 | 2285 |

The clear winner is the server with NVMe disks: the full replay finished in under 3 hours (10234 s). I was also very positively surprised by the performance of the server with 4 HDD drives.
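The replay times in the table are given in seconds, which is not the easiest unit to compare at a glance. A small helper (the name `fmt_duration` is mine, purely for illustration) converts them to hours, minutes and seconds:

```shell
# Format a number of seconds as hours, minutes and seconds.
# fmt_duration is a hypothetical helper name used for illustration.
fmt_duration() {
    printf '%dh%02dm%02ds\n' $(( $1 / 3600 )) $(( $1 % 3600 / 60 )) $(( $1 % 60 ))
}

# Replay times from the table: 1xSSD, 2xSSD, 2xNVM, 4xHDD.
for secs in 35708 12762 10234 21456; do
    fmt_duration "$secs"
done
# prints:
# 9h55m08s
# 3h32m42s
# 2h50m34s
# 5h57m36s
```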

All data below comes from the weakest server (1xSSD/Atom C2750) ;-)

Memory, I/O and disk utilization

dstat running during replay process (1xSSD/Atom C2750)

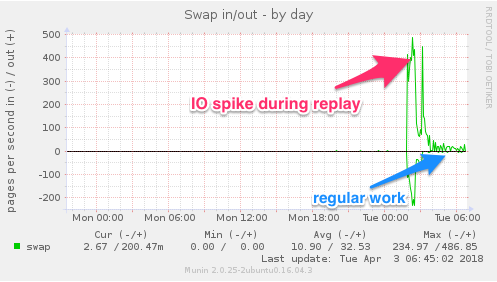

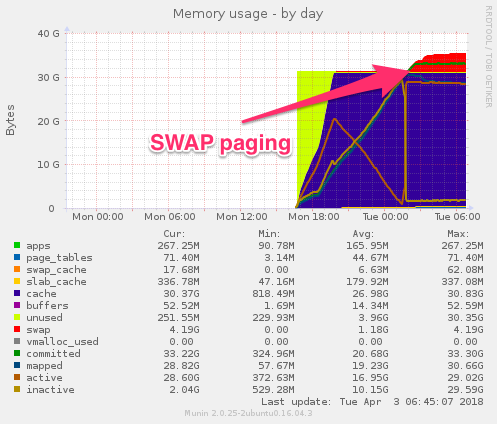

SWAP paging (1xSSD/Atom C2750)

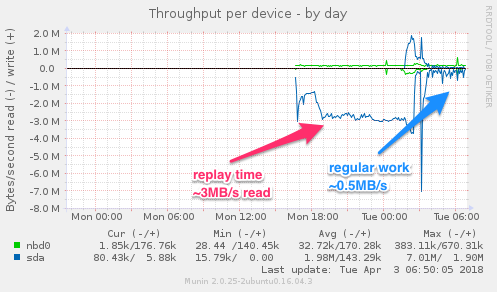

/dev/sda throughput (1xSSD/Atom C2750)

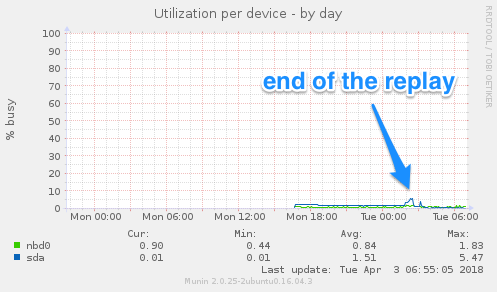

/dev/sda utilization (1xSSD/Atom C2750)

Beginning of the SWAP paging (1xSSD/Atom C2750)

    $ free -m
                  total        used        free      shared  buff/cache   available
    Mem:          32094         329         305       29478       31459        1815
    Swap:         30517        4288       26229

- during the replay, the READ/WRITE ratio is ~3:1 (read 91GB blockchain/write 33GB shared file)

- after the replay, the paging goes back to the minimal level
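The ~3:1 figure in the list above is simply the two sizes divided by each other. As a quick check, assuming the 91GB and 33GB values quoted in this post (`rw_ratio` is a hypothetical helper name):

```shell
# Print the ratio of two sizes with one decimal place.
# $1: data read (GB), $2: data written (GB)
rw_ratio() {
    awk -v r="$1" -v w="$2" 'BEGIN { printf "%.1f\n", r / w }'
}

rw_ratio 91 33   # prints 2.8
```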

Conclusion

My biggest problem was convincing myself to let the servers use SWAP. For many years I have been learning how to optimize various systems, and SWAP paging was always something I didn't want to see... but in this case I'm going to turn a blind eye and continue to use some of my 32GB nodes.

The data and graphs speak for themselves ;-)

If my contribution is valuable to you, please vote for me as a witness.

May The Steem Be With You!

I have always kept shared-memory on disk. Not even an NVMe, just a regular SATA disk on RAID0. Disk usage never goes above 5%, though I'm sure there may be spikes. Never had any issues thus far.

My RAM usage has never exceeded 2 GB.

I do have a backup node with NVMe testing AppBase 0.19.4. Tested /dev/shm, couldn't think of a single benefit, so I'll stick with NVMe for now. After NVMe comes Optane. Using RAM seems a long, long way away.

Of course, it's a different story for a full node.

Yikes, I'm not sure it's reliable to run it on regular HDD. Is that your backup node? Check your latencies, I bet you're hitting very high numbers every once in a while (i.e. will cause missed blocks).

Short spikes are fine ;-) I hope someone with a full node will share best practices ;-) I would love to read about the configuration, requirements and daily issues. ;-)

Do you remember how long the replay takes when the shared file is on disk?


This post is yet another example which follows Betteridge's law of headlines (PL: Prawo nagłówków Betteridge’a)

:)

I didn’t know a law like this even existed... ;-) thx, I learned sth new ;-)

Hi @jamzed, are you still hosted by OVH ? You are one of the few witnesses to never have missed a block (in 9 months, right) which is quite impressive, congrats.

Hey @lux-witness, thanks for the sweet words ;-) That's correct, my primary witness node is hosted by OVH, and my secondary by MyLoc. I switch the keys between servers from time to time to be sure that both work as expected ;-) I believe the key is monitoring and being proactive. Thank you for your vote, you got mine as well ;-) Good luck ;-)

Thank you @jamzed ! That was unexpected !

I'm cutting to the chase here, no chit-chat, straight to the point: if the opportunity arose, would you potentially consider relocating in order to work with steem professionally ? I mean, if (and when) we needed a super-duper-crack system engineer to oversee some private steem nodes, would you consider a job offer (if it involved relocating) ?

Sorry for being so direct.

I could use a spare 8 gig for my mac mini, jk.

Off topic, since this post is techie-friendly: why don't you have any community projects? They are mostly the main reason minnows vote. I know our votes don't mean much, but that popularity may help in the end.


You can do a project, your article makes me see the intelligence. Very rewarding, give you a vote though it does not make sense

girl are u lost?

No, I'm trying to get to know people

do you know what room u are in? lol

Hi Jamzed, do you have Discord? I wanted to talk with you regarding your witness efforts and simply getting to know you since we are both witnesses.

@jamzed you were flagged by a worthless gang of trolls, so, I gave you an upvote to counteract it! Enjoy!!
