In the Beginning, There Was DPoS

With recent events, DPoS (Delegated Proof of Stake) has received quite a bit of attention. It's become obvious to many people that voting for block producers is important.

Let's put on our blockchain designer hats and try to unravel some of the ideas behind DPoS. I'll also try to dive into blockchain design history, and share some of my own perspectives from that time, as others are doing.

A blockchain needs to decide, in a decentralized way, who will produce the next block. Bitcoin, the first blockchain, basically assigns it by a "lottery". The more computing power you have, the more lottery tickets you can print. This system is called Proof-of-Work. (There's also a difficulty adjustment system that controls the rate of winners over time, by decreasing/increasing the win probability of each individual ticket if there are more/fewer winners than the target rate.)

There's no reason we need to design our blockchain to base block production on computing power, though. We can use anything, as long as there's a way for a user to prove to the blockchain that they did some kind of activity. At the time BitShares was being designed, other chains (notably Peercoin) were already using proof-of-stake, where the thing you use is stake (specifically, holding coins over time). Proof-of-stake was much in vogue among blockchain designers as a superior consensus mechanism.

The idea of PoS, as it was conceived in early 2014, (or at least as I then understood it), was to actually transact your coins as part of producing a block.

This meant having an always-online wallet (not great security practice or UX).

It was also already becoming obvious that people wanted to centralize their block production in pools. This minimizes individual variance: It's much easier to run a Bitcoin mining business that reliably makes $2000 each month, rather than one that has a 4% chance of making $50,000 each month (and a 96% chance of making $0). Even though the average monthly EV is the same, most people would rather have their income be smooth than lumpy.

If you have to actually transact your coins to produce blocks, then in order to form a mining pool, users will have to give the mining pool operator custody of their coins.

So let's pretend it's early 2014, and put on our blockchain designer hats.

The hardest part of being a blockchain designer is predicting how users will behave.

Fortunately, we know something about how users will behave, by observing Bitcoin:

- We know that, if they have a reason to do so, users will let someone else hang on to their coins (for example to trade them for dollars on Mt Gox).

- We know that Mt Gox has just suspended its Bitcoin exchange and filed for bankruptcy.

- We know users will try to form mining pools. They're doing it in Bitcoin, and there are clear economic incentives to encourage this.

We may wish users behaved differently. We may wish they wouldn't form mining pools. We may wish users who hold coins that belong to others, for example exchanges, always behaved competently and ethically. But we have to design our blockchain around the facts of how users actually behave, not how we think they should, or wish they would.

We can't stop users from forming pools. That's what the users want to do. But we can stop users from giving away their coins to form pools. We simply need to provide a mechanism for the user to give the pool operator control of the coins' PoS block production power without also giving the pool operator the ability to transfer the coins.

This is the core idea of DPoS: Allowing a user to delegate their stake-based power to produce blocks.

This core idea was suggested in a March 18, 2014 forum post. In my opinion, the author of that post deserves credit for being at least a co-creator of DPoS.

My opinion is biased, of course. I wrote that 2014 forum post.

The DPoS system used in Steem adds quite a few additional features and design decisions to the basic primitive we've arrived at in this post. I want to revisit some of those decisions in my next project.

How about a post outlining some of the flaws of DPoS that we have witnessed since 2014 and maybe some ideas on how to address them.

More votes than top witnesses - Allowing a large stake to effectively centralise and control the blockchain (as we have witnessed here on STEEM)

Bribery / Corruption of Witnesses - Where witnesses offer a kickback to voters as a bribe to vote them in (or than relying on merit). We have seen this on other DPoS chains, leading to unreliable block producers being elected and then poor performance of the overall network.

I'm sure there is more....

I think the biggest problem related to any "voting-based" system is:

I did outline in a post of mine ideas how voting apathy could be combated. And, as soon as we got people participating in the democratic process, forcing the elected to be transparent becomes a solvable problem.

Maybe we should have downvotes available for block producers too?

Read my blogpost, it's not a solution either.

Make it so that you cannot post or comment until you voted all 30 witnesses (or whatever the number might be in the future)

Should be quite easy to code.

My idea was more in the lines of not giving people SP inflation unless they use at least 50% of their slots to vote for active witnesses.

I would agree with this idea as well. But I don't see why you should not force people to take part in the stability of the network. It benefits everyone and there is no downside.

I think it sets the entry barrier to high for new users. Someone who just arrived doesn't know anyone and has no stake to vote anyway. But with increasing stake there should be a financial incentive to vote for witnesses. An easy way to do this would be through the SP inflation.

Noted, it is an entry barrier. But if you accumulated or bought 1000 Steem and powered that up, I think those accounts should be able to form an opinion to set a voting proxy for 1 witness they like. Not everyone needs to make 30 vote decisions on their own. Witnesses and everyone with influence here should be more vocal about them being a possible voting proxy.

If you were allowed to vote infinite witnesses you could even set several proxies. That would make it more trustless. I might not trust 1 persons decisions, but I might trust the average among 5 people.

While I hate repeating the same reply in same thread, I'm going to do it:

Additionally, you are preparing for vote buying, via return incentives, much like it happens on TRON. You may end up centralizing more with forced voting in order to contribute.

I LIKE this ^

Fantastic idea. This solves the problem of "dormant" investors too by forcing them to participate.

That would just lead to people voting for 1-15, the quickest possible path to get theirs. Without caring about the actual importance of their vote.

So you are a co-creator of DPOS and you have seen the shitshow that is steem and how it morphed into what it is now.

You know the technicals and you understand what's been proven to be good and bad about DPOS.

With this knowledge, I will 100% check out your next project!

I also would like to hear your ideas to make a 51% attack impossible in a DPOS system with 20 consensus witnesses and 30 witness votes per account.

These numbers seem random to me. Can you explain why Dan Larimer chose 20/30 and what would be a safer choice? I am thinking about something like 25/9 (with the current distribution of steem in mind)...

However, the best way would be infinite witness votes to guarantee the highest overlap.

How many consensus nodes there are. It depends on the performance, less nodes > performance, more nodes < performance but potentially more security.

However, in DPOS the more nodes you have the more witnesses have to come to an agreement which gets more complicated the more there are (people are difficult).

So I honestly think 20 is a good number.

*You mean

3f +1total nodes to toleratef = [(n/3)-1]faulty nodes?I have looked a bit into the things you pointed out a few days ago. The dPOS in Steem (other than in Bitshares) is not a pure dPOS but a dPOS+BFT or dPOS-BFT or "pipelined dPOS" how it is called in Eos, which makes it actually a quorum. Even though there is no obvious direct each-to-each communication, there is still each-to-each communication.

In dPOS-BFT there is a leap of faith (Vertrauensvorsprung) like in Bitcoin, which makes it a retrospective protocol. After blocks are added (Now comes the quorum) --> the other witnesses have to verify the last irreversible Block (LIB) with at least

2/3. The consensus rule is: that no honest node will join a chain which is not build on the last LIB. Aka. the longest chain rule.People confuse the consensus mechanism (dPOS) with the oracle (PoS). Consensus is never made by the Oracle. Hash-Cash aka. Proof-of-Work is just an oracle - in fact a special form of proof-of-stake. The consensus is done by the set of consensus rules (fork-choice/longest chain/longest and heaviest chain)

This means it is a quorum by the longest chain rule! Now I understand why Vitalik pointed óut that dPOS-BFT other than dPOS (and I guess dBFT like in Neo) is subject to message complexity!

The

BFT 2/3+1 safety assumptioncomes with the use of the round-robin scheduling. When all active nodes add one block per round -> every 3 seconds (the quorum is synchronized by the fixed consortium), THEN a round is k times 3 seconds and the chain grows with --> 3 seconds per block.Any malicious set of nodes up to 1/3 can create a minority-fork but this malicious fork will only grow as fast as 9 seconds per block (note that the consortium is still one and of size k), ergo the honest chain will still grow with 6 seconds per block. Hence --> 6 seconds is the minimum honesty-decidebility-criterion.

When a malicious minority is bigger than 1/3, we have the equivalent of a 51%-attack vector (not exact the same since bitcoin is binary and this state is a undecidable "pending" state). Because

f=1/3 +1 means that there is no honest 2/3 and hence no 6 seconds. So the correctness is violated (even though the system still makes progress in this pending state)Yes, exactly. 3f+1 total nodes where f nodes are faulty means that we need 2f+1 honest nodes to guarantee progress in a classical BFT protocol.

I don't see where in DPOS the message complexity comes in though. It schedules round robin and after 2f+1 nodes added their block, the last block is "finalized" since every other node once agreed on a block that built on top of the block that is being finalized. This creates an implicit quorum. However, this doesn't create any messaging overhead.

Also, I'm not 100% sure if the chain would continue working if 49% of the top 20 witnesses would stop producing valid blocks or if that would cause a chain halt because 2/3 are necessary. I read a few things about synchrony assumptions and under those assumptions you can guarantee progress with f+1 out of 2f+1 (simple majority)

OK I try to order it, I was on the same track. Maybe you can validate.

What is Synchroinizity assumption?:

ALL distributed BFT-consensus systems are based on synchronicity assumption. All networks are theoretically asynchronous.

The problem: the

Ficher-Lynch-Peterson Impossibility Theoremproves-->Now what does it mean to be "synchronized"? Computers don´t have a concept of time, they only know order. In a closed distributed consensus system like in a spaceship e.g. the Falcon9 --> tightly coupled nodes have a central time oracle (an atomic clock or what ever). In global distributed systems, having a time oracle means >>who ever controls the service, controls the order of events<<...and even if honest, network delay and relativity lead to clock-drift.

Decentralized Clocks

So, for EVERY decentralized distributed consensus system you need a decentralized clock. In a closed or permitted system you can use network-latency as a clock. This is what we typically call synchronicity assumption. This is what made classical distributed BFT-consensus in global networks possible. But it only works when the number of validators is fixed and known (otherwise you cant calibrate). Research here was done by Lamport et al. (Lamport Clock, Logische Uhr and so on)

Bitcoin has simple majority because it uses a different clock

Bitcoin is an open ergo --> loosely coupled system, where nodes can join at any time. Calibration is not possible, you cant use network-latency as a distributed clock.

What nearly nobody knows: The Hashcash algorithm in Bitcoin is not only an oracle but also a distributed clock. As we know, having more hash-power does not mean that you are faster than other participants you only have a higher probability. Difficulty adjusted it always takes ~10 minutes. The SHA is a so called memoryless function. For every computational-cycle/iteration and for every participant the probability per worker is exactly the same. The entropy is universal. Even when your giant pool tries 1000 Trillion possibilities per second you are not faster...1000000 Trillion is practically zero compared to 2^128 or 2^256. Every human number is zero compared to a number space that big. With every cycle, every miner of your pool starts from zero. This is memoryless. This is how Nakamoto synchronized all the anonymous nodes. The clock here is information theoretical entropy aka unkown states/bits. Most people think he simply "assumed" synchronicity :D

the criterion is binary because work allows for a node-agnostic consensus system aka longest-and-heaviest-chain-rule. This is why there is >1/2 Safety assumption and a 51%-attack vector. So yes, synchronicity assumption are the very fundamental-physical reason for all those numbers. It is not simply "math" it is determined by physics.

now back to advanced dPOS of steem

The blockchain is just a data-structure it only exists in the epistemic domain, ontologically there is no blockchain. What physically is there is a replicated state machine. When you add a block to the "chain" you propagate through the network until it reaches all other nodes. Adding a block needs 3 seconds but really adding to the state machine needs 2N². Lets assume that N is 14 (2/3) those 14 add their block to the chain...since the chain does not exist as a central server, somebody needs to verify that 14 nodes have added their valid block and this SOMEBODY are those 14 Nodes. This is why it is N² and since it is bilateral communication it is 2N². Otherwise you need a central coordinator...

That's wrong, there are BFT algorithms without synchronicity assumptions:

Those are two recent ones, in the literature you'll find more.

Synchronization in the context I was talking about is making timing assumptions.

Making timing assumptions means that we can assume that a message from one server to another will have a "maximum" roundtrip.

Asynchronous protocols don't make any timing assumptions in this regard.

Now, if we know there is a maximum time, then we can ALWAYS detect if a faulty and thus, we can avoid a lot of the problems and can achieve safety and liveness with a lower percentage (51%).

Going back to DPoS. Yes, every block needs N^2 to be finalized. But if we add more witnesses this will spread out over a longer period of time as well so that will only increase the latency and not be a problem for throughput at all.

yes, thank you I completely forgot about the new "3.0 paradigm" (have not read into any of them yet (except for avalanche) but afaik the critique of Sirer on Hedera was that they are (maybe not timed) but calibrated. I will look into it.

Ok I get what you mean. Then one has clearly to differentiate timing (which involves clock time) and synchronicity without time. But I realy dont think that any of the protocols works with actual clock time, Because the 3 seconds are not a protocol parameter but a constant? When you use a BEOS node in the orbit it changes.

yeah but there is no known maximum time I would say. Whoms clock you take to measure time?

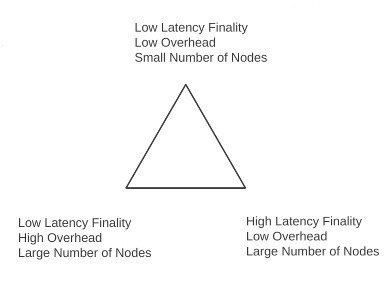

good point, now I guess I understand Dans Triangle

this scenario comes to the cost of finality-latency

Most of the truly asynchronous protocols are only probabilistic. (Similar to avalanche). However this concept is very old already(many papers from the 90s discuss it.

If we have some known max delays we can do this:

I am advocating a different system of governance, a real time Democrity, a bottom up multi-level decentralised governance plaform called Matrix-8.

I invite you to investigate this (as yet to be built) world changing system designed by John Huckel. I am working with him to gain support so we (anyone who cares to partake) can create this "owned by no-one, yet owned by all" revolutionary platform for positive world change.

This post i made today https://steempeak.com/hive-114105/@atma.love/reclaiming-our-sovereignty-during-these-chaotic-times

and this post John Huckel made yesterday https://steempeak.com/hive-153630/@matrix-8/welcome-to-new-age-dapps-a-solution-to-what-ails-us

will begin to give you an understanding of how Matrix-8 will work.

Your reply would be very much appreciated.

Namaste

Atma

Who am i?

Congratulations @theoretical! You have completed the following achievement on the Steem blockchain and have been rewarded with new badge(s) :

You can view your badges on your Steem Board and compare to others on the Steem Ranking

If you no longer want to receive notifications, reply to this comment with the word

STOPTo support your work, I also upvoted your post!

Do not miss the last post from @steemitboard:

Vote for @Steemitboard as a witness to get one more award and increased upvotes!

ok got it

@asimplemam if it's only "Proof of Stake" the witness can only vote himself (using the voting power of his account). When its Delegated Proof of Stake the witness account gets "delegated" voting power from other accounts, but those other accounts don't lose ownership of that voting power.

Thank you I posted the question on my mind before I had read WTF right!

You made that super easy to understand .

So what is the thinking in terms of the next move or direction ?

Is it just a false perception that a small number of stake holders has up until now controlled the top twenty witnesses?

Well the voting power is related to Steem Power, if SP is distributed in just a few hands the witnesses rank should reflect that. But also apathy from smal SP holders could contribute as well.

Yes apathy is part of it and another part is a lack of trust.

We need the home grown witnesses to put forth a new deal that will grow our user base and put an end to their narrow views about how we can use Steem

lol do not be a quiter

@theoretical please have a look at the REDPOS protocol concept.

Hi