Digital Images | Image Acquisition

Computers are advancing rapidly and bring huge benefits to every aspect of life, be it medicine, accountancy, engineering, science, the arts or anything else. I don't want to dwell on the advantages of computers, so let's move on to today's topic.

In my previous posts, I talked about how computers perceive natural language and respond to our commands accordingly.

Today, I would like to talk about how these intelligent beings (computers) perceive visual input, that is images, and deliver useful information to us. Computerized processes are applied to the acquired images to enhance them, for example by reducing noise, and the improved images can then be used as required.

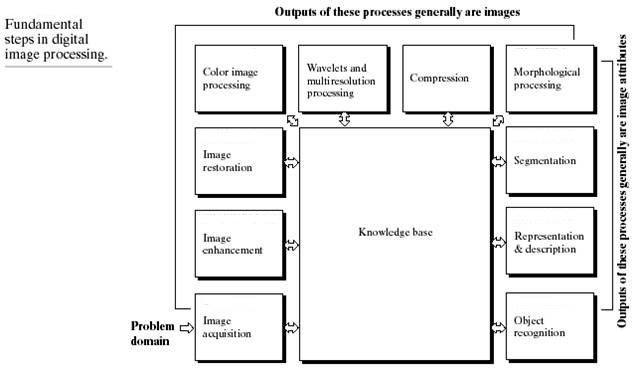

Computerized processes on images are basically divided into three parts.

- Low-level processes

- Mid-level processes

- High-level processes

In low-level processes, both the input and the output are images. They involve basic functions like reducing noise, sharpening an image and setting its brightness and contrast. This type is generally called Image Processing.
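To make the "image in, image out" idea concrete, here is a minimal sketch of a brightness and contrast adjustment. It assumes an 8-bit grayscale image stored as a list of rows; the function name `adjust_brightness_contrast` and its parameters are made up for this illustration.

```python
def adjust_brightness_contrast(image, contrast=1.0, brightness=0):
    """Return a new image with every gray level scaled and shifted.

    Assumes 8-bit gray levels, so results are clamped to the range 0..255.
    """
    return [
        [max(0, min(255, round(contrast * pixel + brightness))) for pixel in row]
        for row in image
    ]


# A tiny hypothetical 2 x 2 grayscale image.
image = [
    [10, 100],
    [200, 250],
]

brighter = adjust_brightness_contrast(image, contrast=1.0, brightness=20)
print(brighter)  # [[30, 120], [220, 255]]
```

The last pixel shows why clamping is needed: 250 + 20 would overflow the 8-bit range, so it is capped at 255.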

Mid-level processes involve classification and segmentation of an image. The input is an image, but the output is a set of attributes of that image, such as the identity of the individual objects in it. Segmenting an object from the background by finding its contour is one example.
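As a rough illustration of segmentation, the sketch below separates a bright object from a dark background with a fixed threshold. Thresholding is only one simple technique, chosen here for brevity; the sample image and the name `segment_by_threshold` are assumptions made for this example.

```python
def segment_by_threshold(image, threshold):
    """Return a mask with 1 where a pixel is brighter than the threshold, else 0."""
    return [[1 if pixel > threshold else 0 for pixel in row] for row in image]


# Hypothetical 4 x 4 grayscale image: a bright 2 x 2 object on a dark background.
image = [
    [12, 15, 11, 14],
    [13, 200, 210, 12],
    [10, 205, 198, 15],
    [14, 12, 13, 11],
]

mask = segment_by_threshold(image, threshold=128)
object_pixels = sum(sum(row) for row in mask)

for row in mask:
    print(row)
print("object pixels:", object_pixels)
```

The input is an image, while the output (the mask and the object size) is an attribute of that image, which is what distinguishes this level from low-level processing.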

High-level processes cover image analysis. They take an image whose objects have been recognized, make sense of all that information and draw meaningful inferences from it, the kind of work normally associated with human vision. This part is called Computer Vision.

Look at the picture below. It shows all the stages in detail. I will try to cover each one with detailed examples and descriptions. Let's start with Image Processing. This domain is itself divided into various categories, as you can see in the diagram. The knowledge base box is linked to every stage, which means we may have some prior information about the image being processed. So, let's begin with the first phase.

Image Acquisition

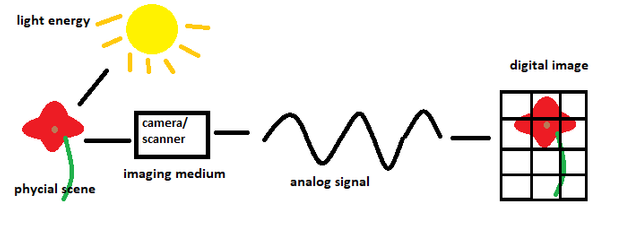

The first step in image processing is acquiring an image to process. That image can be captured from a physical scene with a camera, or from a photograph on a piece of paper with a scanner, and so on.

Previously, mechanical and chemical processes were used to develop photographs, but that was before the computer age. Today, we use electronics to convert images or pictures into digital form.

Basically, light energy is converted into electrical energy when making a digital image. A grid of solar cells is a common example that makes the concept easy to grasp. Suppose there is a grid of solar cells and the charge on each cell is measured. The charge depends on the intensity of the light falling on that cell, so it tells us how bright that cell should be. Together the cells form a group of pixels, and thus an image.

There are various methods of image acquisition using sensors. The idea behind all of them is that incoming light energy is transformed into a voltage by a sensor that is supplied with electrical power. The output is an analog waveform, which is later digitized to make a digital image.

The following are the methods of image acquisition.

- Single Sensor Method

- Line Sensor Method

- Array Sensor Method

Now we have the sensor output, which is in analog form. We need to digitize it to get the image. There are two phases of digitization.

- Sampling

- Quantization

In the analog waveform, the image is continuous both in its coordinates (the x and y axes) and in its amplitude.

The basic difference is that sampling is digitization of the coordinates (x and y axes), while quantization is digitization of the amplitude.

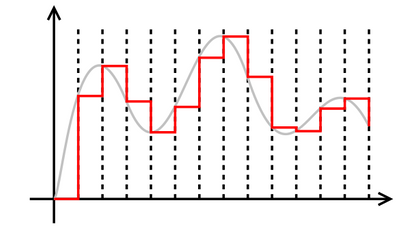

First, the waveform is marked at equal intervals, and a sample is taken at each mark. As you can see in the diagram, the red line has marks at equal spacing along the waveform. This is called sampling of the coordinates.
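Sampling at equal spacing can be sketched in a few lines. The continuous signal below is a made-up brightness profile along one scan line, and the helper name `sample` is hypothetical; only the equal spacing matters here.

```python
import math


def sample(signal, start, stop, count):
    """Evaluate a continuous function at `count` equally spaced points in [start, stop]."""
    step = (stop - start) / (count - 1)
    return [(start + i * step, signal(start + i * step)) for i in range(count)]


def brightness(x):
    # Hypothetical continuous brightness profile, with values between 0 and 1.
    return 0.5 + 0.5 * math.sin(2 * math.pi * x)


samples = sample(brightness, 0.0, 1.0, 5)
for x, value in samples:
    print(f"x = {x:.2f}  amplitude = {value:.3f}")
```

After this step the coordinates are discrete, but each amplitude is still a continuous value.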

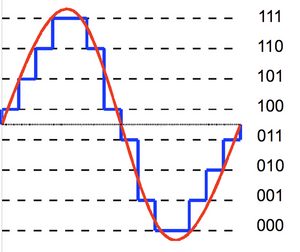

The sampled points still have continuous amplitudes, which also need to be digitized. The continuous amplitude range is divided into discrete gray levels from white to black, and each sample is assigned one level. Gray levels are represented as binary values. This is quantization of the amplitude.
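Here is a minimal sketch of quantization, assuming amplitudes are normalized to the range 0.0 to 1.0 and that level 0 stands for black and the highest level for white. The choice of 8 levels (3 bits) and the name `quantize` are only for illustration.

```python
def quantize(amplitude, levels=8):
    """Map an amplitude in [0.0, 1.0] to an integer gray level in 0..levels-1."""
    amplitude = max(0.0, min(1.0, amplitude))  # guard against out-of-range input
    return min(levels - 1, int(amplitude * levels))


sampled_amplitudes = [0.0, 0.1, 0.49, 0.5, 0.99, 1.0]
gray_levels = [quantize(a) for a in sampled_amplitudes]

for a, g in zip(sampled_amplitudes, gray_levels):
    # Show each level as a 3-bit binary value, since 8 levels need 3 bits.
    print(f"{a:.2f} -> level {g} ({g:03b})")
```

Notice that 0.0 and 0.1 land on the same level. That loss of fine amplitude differences is the price of quantization, and using more levels reduces it.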

We now have coordinates and their values (gray levels). This results in a matrix of numbers with M rows and N columns. Each coordinate has a discrete gray level, which makes the matrix a grid of pixels, that is, a digital image. The gray level at a coordinate decides the shade of that pixel.
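Putting sampling and quantization together gives the M x N matrix described above. The sketch below assumes a continuous scene defined on the unit square with brightness between 0 and 1; the `gradient` scene and the `digitize` helper are invented for the example.

```python
def digitize(scene, rows, cols, levels=256):
    """Sample scene(x, y) on a rows x cols grid and quantize every sample."""
    image = []
    for r in range(rows):
        y = (r + 0.5) / rows  # sample at the center of each grid cell
        row = []
        for c in range(cols):
            x = (c + 0.5) / cols
            amplitude = max(0.0, min(1.0, scene(x, y)))
            row.append(min(levels - 1, int(amplitude * levels)))
        image.append(row)
    return image


def gradient(x, y):
    # Hypothetical scene: brightness grows from left (dark) to right (bright).
    return x


image = digitize(gradient, rows=3, cols=4)
for row in image:
    print(row)
```

The result has M = 3 rows and N = 4 columns, and every entry is an integer gray level between 0 and 255.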

That's all about Image Acquisition.

There are, however, a few terms and concepts that need some explanation before we move on to the next phase of Image Processing.

Pixels

By now we have learned that a digital image is a matrix or grid. Each entry or cell of the grid is called a pixel. In short, a pixel is the smallest element of an image. The pixel count is found by multiplying the number of rows by the number of columns in the grid. The more pixels, the better the quality of the image, although this is not true in every case. We will see why shortly.

Resolution

Resolution is closely related to pixels. If there are m rows and n columns in a digital image, then m x n is the resolution of that image.

For instance, an image with 64 rows and 32 columns has a resolution of 64 x 32 and a pixel count of 2048.

A high pixel resolution doesn't guarantee a good-quality image.

Spatial resolution

It is commonly said that a high resolution, or a high number of pixels, results in higher quality. That is misleading. It is actually spatial resolution that decides the quality of a picture.

What is Spatial Resolution?

It is basically the number of pixels, dots or lines per inch. The higher the number of pixels per inch, the higher the quality of the image.

Two copies of the same image with different dimensions (width and height) cannot be compared for quality, because the larger one could simply be a zoomed, and therefore blurred, version.

Instead, we compare images with the same dimensions and check how many pixels each holds per inch. The image with more pixels per inch is better in quality, because it shows minor details more clearly. Cellphone specifications list a figure called ppi density, which stands for pixels per inch. On screens of the same size, the higher the ppi density, the better the image quality.
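The ppi figure quoted for screens is usually computed from the pixel dimensions and the diagonal size in inches. The sketch below uses that common formula; the three screens are hypothetical examples, not real devices.

```python
import math


def pixels_per_inch(width_px, height_px, diagonal_inches):
    """Pixels along the diagonal divided by the diagonal length in inches."""
    return math.hypot(width_px, height_px) / diagonal_inches


# Two hypothetical 5-inch phone screens with different pixel counts.
phone_hd = pixels_per_inch(1280, 720, 5.0)
phone_full_hd = pixels_per_inch(1920, 1080, 5.0)

# The same 1920 x 1080 pixel count spread over a hypothetical 24-inch monitor.
monitor = pixels_per_inch(1920, 1080, 24.0)

print(f"5-inch, 1280 x 720:   {phone_hd:.0f} ppi")
print(f"5-inch, 1920 x 1080:  {phone_full_hd:.0f} ppi")
print(f"24-inch, 1920 x 1080: {monitor:.0f} ppi")
```

On the same screen size, more pixels give a higher ppi. The monitor has exactly as many pixels as the second phone, yet far fewer per inch, which is why pixel count alone says little about sharpness.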

There are some simple functions performed on digital images that relate to the concepts we have discussed so far.

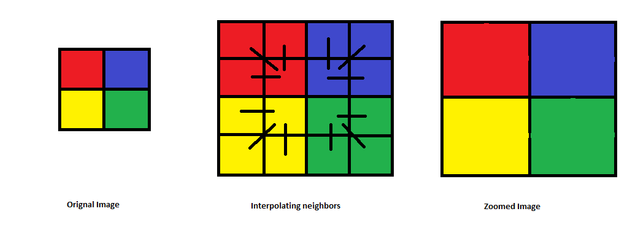

Over Sampling (Zooming)

Zooming is one of the simplest functions applied to digital images. The idea behind it is to increase the number of pixels or samples. Zooming is related to oversampling because both increase the number of samples: the pixel count goes up when we take more samples of the coordinates (x and y axes).

How does it work?

Answer: Replicate each pixel into its nearest neighbors.

I couldn't show it properly, but when an image is zoomed it gets blurred, and how much depends on how far it is zoomed. Replicating pixels adds no new detail, so the image looks blocky and blurry.
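Pixel replication is short enough to sketch directly. The example assumes an integer zoom factor and a grayscale image stored as a list of rows; `zoom_by_replication` is a name made up for this illustration.

```python
def zoom_by_replication(image, factor):
    """Enlarge an image by repeating every pixel `factor` times in both directions."""
    zoomed = []
    for row in image:
        # Repeat each pixel horizontally.
        wide_row = [pixel for pixel in row for _ in range(factor)]
        # Repeat the widened row vertically, copying so the rows stay independent.
        zoomed.extend(list(wide_row) for _ in range(factor))
    return zoomed


# Hypothetical 2 x 2 image.
image = [
    [10, 200],
    [90, 255],
]

zoomed = zoom_by_replication(image, 2)
for row in zoomed:
    print(row)
```

The zoomed image has four times as many pixels but still only four distinct values, which is why the result looks blocky rather than more detailed.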

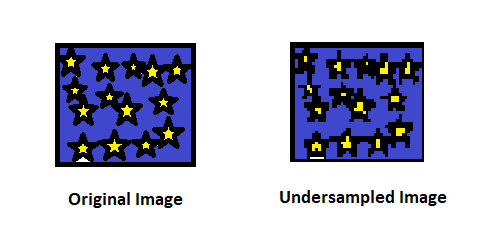

UnderSampling

Fewer samples are taken in undersampling, so minor details are missed. Fewer samples mean fewer pixels. Aliasing is closely related: it is the artifact that appears when there are too few samples, so that different signals become indistinguishable from one another. When we don't have enough samples, we lose detail and clarity in the image.
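A small sketch shows how undersampling can lose detail completely. It keeps only every second row and column of a made-up image of one-pixel-wide vertical stripes; the helper name `undersample` is hypothetical.

```python
def undersample(image, step):
    """Keep only every `step`-th row and every `step`-th column."""
    return [row[::step] for row in image[::step]]


# Hypothetical 4 x 8 image of alternating black (0) and white (255) columns.
stripes = [[0, 255, 0, 255, 0, 255, 0, 255] for _ in range(4)]

small = undersample(stripes, 2)
for row in small:
    print(row)
```

Every kept sample happens to fall on a black column, so the striped image and a plain black image produce the same samples. The two can no longer be told apart, which is the indistinguishability that aliasing refers to.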

References

- https://sisu.ut.ee/imageprocessing/book/2

- https://buzztech.in/sampling-and-quantization-in-digital-image-processing/

- https://www.tutorialspoint.com/dip/image_processing_introduction.htm

- http://legendtechz.blogspot.com/2013/03/5-explain-process-of-image-acquisition.html

Great to see a new contributor from the subcontinent region. Will you be explaining the math, like the sampling theorem and Fourier stuff, in future? Since you mentioned sampling, it would be nice to introduce its math in an easy way. :) Just a suggestion. Signal processing was my favourite subject when I was doing my electronics engineering degree.

I didn't intend to focus on the mathematics of image processing techniques. You know how complicated it is, and I am aiming at non-tech people as well. I didn't want them yawning at my piece. Lol.

But sure, I will discuss the maths where it is needed most in future posts.

I don't think the sampling theorem and basic Fourier stuff are that tough. Actually, these are concepts people can relate to, at least with proper examples. :)

Like I said, I had non-tech people in mind too while writing this. So I decided not to go that deep and just explained the basic concepts.

Anyways thanks for the suggestion, I will consider it in future posts.

Thanks. As a photographer, this is an interesting read. When you look at social media, stock photography companies like EyeEm are really branding themselves as AI image ranking companies now. The proliferation of images on the internet creates a quality problem, and they are using AI that has learnt from the social media platforms to sort a good image from a bad one.

I'm glad you find it interesting.

And yes, EyeEm is using image recognition technology to classify good and bad quality images. Object recognition, detection and identification are performed for that purpose.

For instance, to check the quality of a medical image, they look for specific cells or tissues that should be detected in that image.

A good contribution to the Steemstem tag. Have an upvote.

If anyone is interested I wrote a style guide (link here) for STEM posts that you might find interesting (or not, up to you).

Hi @event-horizon I might have to talk to you sometime (maybe discord if you're on?) as the whole image processing pipeline is something I'm quite interested in.

btw congratulations to being featured on distilled.

Why not? Will try to catch up with you on discord.

And thanks for kind words.

Any thoughts as to the digital vs film debate? :P

Good question. lol

Film technique is fascinating that's why people from that age are still not ready to let it go. No doubt, It delivers promising quality, Dunkirk is the new proof. I like that movie. But there are shortcomings too.

Digital world is more advanced now with easier use and lesser cost.

If I would be given a digital camera or the old one with film roll. I would choose the digital one. That doesn't mean that I'm not fascinated by classic film rolls. 😀