New questionnaire draft and a distillation of what was said in the last meeting

On Monday we had a meeting with Utopian where we discussed a draft for the new questionnaire. We went through the questions and some general points regarding our translation category. Many LMs did not make it to the meeting, so in this post we distill the major points made and propose a new draft of the questionnaire that follows the suggestions we received.

The main comments our draft received were:

- The score of the questionnaire did not start from a value of 100. For the sake of discussion we decided to work with positive scores and we think it may be worth doing so until we reach a consensus on the questionnaire. Before implementation, the scores can be adapted to follow the same structure that the devs are using.

- There should not be any distinction between major and minor mistakes: a mistake is a mistake. We had two questions regarding mistakes, one dealing with major mistakes and the other with minor mistakes. A major mistake is one that changes the meaning of the sentence, and a minor mistake is one that does not have as much influence. In our view, the two questions gave more granularity and fairness to the questionnaire. However, nobody spoke in support of this during the meeting and we were asked to combine both questions into one.

- Add a question about legibility of the translated text. A translator could be accurate in their translation but choose a difficult word that not many users understand. This question will ensure that translators who also care about the legibility of the text receive a higher score.

- We had a question about translation style and we were asked to change the word from "style" to "consistency".

- Merge all questions regarding contribution post grammar, style and descriptiveness into one. There was quite a bit of discussion over this: users debated whether contribution posts are important and whether this will make sense in Utopian v2. For now, though, we rely on contribution posts, and LMs need to receive enough information to carry out their review. On the other hand, a translator should be able to write without making grammar mistakes.
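As a rough illustration of the first point, positive scores can be mapped onto a "start from 100 and deduct" structure with simple arithmetic. The sketch below is only an assumption about how such a conversion could look: the question names, the weights and the function `to_deduction_score` are hypothetical and are not taken from the draft or from Utopian's implementation.

```python
def to_deduction_score(earned: dict[str, float], maximum: dict[str, float]) -> float:
    """Convert positive per-question points into a score that starts at 100
    and loses points for every point not earned (hypothetical helper)."""
    total_max = sum(maximum.values())
    if total_max <= 0:
        raise ValueError("maximum points must sum to a positive number")
    score = 100.0
    for question, max_points in maximum.items():
        # A question with no recorded answer is treated as zero points earned.
        lost = max_points - earned.get(question, 0.0)
        # Rescale so that losing every point brings the score down to 0.
        score -= lost * 100.0 / total_max
    return score


# Hypothetical questions and weights, for illustration only.
maximum = {"mistakes": 20, "legibility": 10, "consistency": 10, "post": 10}
earned = {"mistakes": 15, "legibility": 10, "consistency": 5, "post": 10}

print(to_deduction_score(earned, maximum))  # 80.0
```

Both conventions rank contributions identically, which is why working with positive scores during the discussion does not restrict what can be implemented later.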

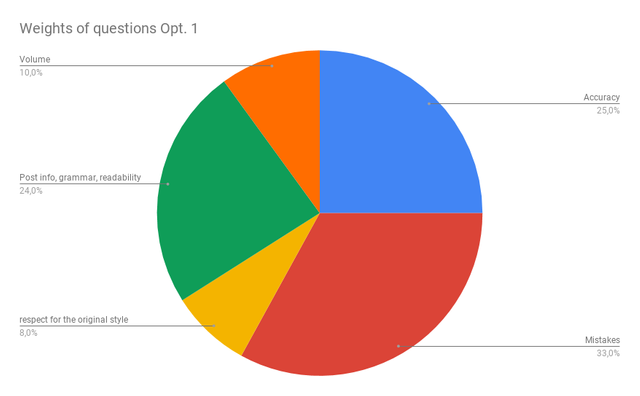

Here we propose two possible distributions of scores throughout our questions. In Option 1 we have some emphasis on the descriptiveness of the contribution post and the grammar and lower emphasis on volume and consistency of the translated words.

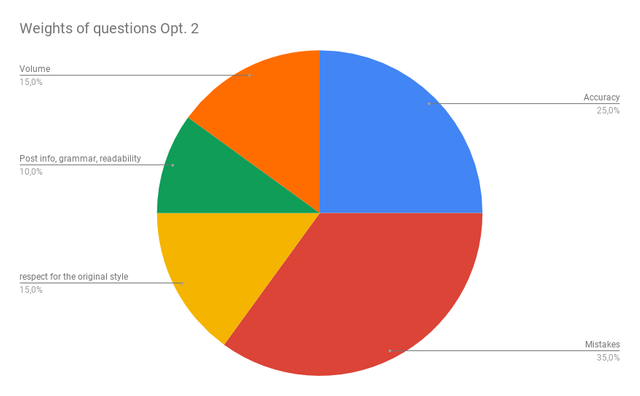

In Option 2 we have more emphasis on volume and consistency, while descriptiveness and grammar of the contribution post have very little weight and serve just to give more granularity to the score.

Which of these two options do you think is better?

During the meeting, Utopian also showed openness to suggestions that may require work from the developers. Right now their devs are quite busy, but they are willing to discuss our proposals. Something we also agreed on is to link the reward for the LMs to the volume of words they had to review. If you have more suggestions, feel free to add them to this list:

https://docs.google.com/document/d/1EwCP8GWyoWdKTfh7jcqTJ1z8Va_wR02PqUReopYjFkE/edit?usp=sharing

For proposals regarding the questionnaire, please use the comment section below. As usual, if you express your opinion on something, we would also appreciate it if you provided a counterproposal.

I really like the idea, but we should also consider the number of words translated vs the errors made by the translators.

Example: 1100 words with 3 errors is more valuable to me than 800 words with 1 error. Let's assume both translations were done in the same project with the same difficulty. If we focus only on the errors and not on the total number of words that the translator accurately contributed to the project, it doesn't make much sense.

Thank you for your comment. The errors are already expressed as a percentage, so they also take into account the volume of the translated text.

Not in every way, @aboutcoolscience🔬.

If both contributors submitted more than the maximum, then they both have the same score for the number of words.

What if:

Translator 1 translated 1500 words, for example, accurately but with 3 errors overall.

Translator 2 translated 1400 words, for example, also accurately and with only 1 error.

Compare the 1500 words to the 1400 words. If the maximum is, for example, 1000 words as before, then they have the same score for the number of words. But look:

Even after deducting all the errors, translator 1 still contributes more than translator 2, yet translator 2 will get a higher score because he only made 1 error.

For me the most important thing is the contribution we provide to the projects. The errors can be corrected by LMs or re-translated by other translators.

Instead, we could first compute the number of words vs the errors; that would be fairer.

The "mistakes" question is percentage on the actual wordcount (mistakes per 1000 words), and not on what you fill in the questionnaire, so:

so there is no change there. The wordcount issue is not that easily fixed: we would have to go to a formula of $X/word, which is something Utopian doesn't want from what I gather, as it gives the sense of a normal job and not an incentive.
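To make the numbers in this thread concrete, here is a minimal Python sketch of the two quantities being discussed: the mistake rate per 1000 words of the actual word count, and a volume figure that stops growing at a maximum. The function names are made up for illustration, and the 1000-word cap is only the example value mentioned above, not a confirmed setting of the questionnaire.

```python
def mistakes_per_1000(word_count: int, mistakes: int) -> float:
    """Mistake rate normalised to 1000 words of the actual word count."""
    if word_count <= 0:
        raise ValueError("word_count must be positive")
    return mistakes * 1000 / word_count


def capped_volume(word_count: int, cap: int = 1000) -> int:
    """Word count as seen by a volume question that saturates at `cap`.
    The default of 1000 is the example figure from this thread (an assumption)."""
    return min(word_count, cap)


# Figures taken from the example in the comments above.
examples = {
    "translator 1": (1500, 3),
    "translator 2": (1400, 1),
}

for name, (words, mistakes) in examples.items():
    rate = mistakes_per_1000(words, mistakes)
    print(f"{name}: {rate:.2f} mistakes per 1000 words, "
          f"volume counted as {capped_volume(words)}")
```

Under these assumptions both translators are counted at the same volume, while translator 2 has the lower mistake rate (about 0.71 against 2.00 per 1000 words), which is the situation the comments above are arguing about.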

As I've been saying all these months: we are all volunteers and we are not guaranteed to get ~~a salary~~ an upvote by @utopian-io. It might not sound right to some, but that's the truth; that's how the system has worked since the beginning in all categories (and that was the purpose of Utopian: to provide incentives, not payments). If it were sustainable, we wouldn't have to change the questionnaire and make it harder to get a better score at all.

Thanks @davinci.witness for your awesome work with the Utopian translations category!


I’m sorry I wasn’t able to participate in the meeting. Unfortunately, I was still at work and unable to follow and take part in a voice chat.

I would definitely have spoken up in defense of distinguishing between minor and major mistakes. I believe there’s quite a big difference between completely mistranslating a string (major) and forgetting a comma (minor) and our review should mirror that. Therefore, I’m curious as to whether those in favor of only one question will make every comma and accent count just as much as a translation mistake or are planning not to count those mistakes in their review. @egotheist you’re my personal reference for strictness: what’s your take on this?

With regards to the two new proposals, I’m definitely in favor of the second option, giving more weight to the actual translation than to the presentation post.

Thanks for all the good work and feedback, by the way!!