Journey through the understanding of particle physics - One

[image credits - Pixabay]

A couple of weeks ago I volunteered to join @lemouth's experiment to involve programmers in the Steemit community in developing open source software for analyzing data produced by high energy collisions in CERN's Large Hadron Collider (LHC).

Though I've always had an interest in the subject of particle physics, I come from a software background and my understanding of this complex subject is limited to what I have read in popular scientific media such as TV shows, books and magazines.

While it would be possible to produce the software required for this project without much understanding of the subject matter, I think it would be much more fulfilling to develop some comprehension of what is being implemented, at least to a point where I could describe in plain English the objectives of the analysis.

To this end I am starting a series of posts in which I will attempt to describe my current understanding of the project, using my own inaccurate words and side research.

I fully expect that these posts will cause a lot of sighs and head shaking from the scientific community ;-) but I'm also hoping that it will trigger constructive discussions and corrections by means of comments and maybe side posts.

In the end I hope that this effort will lead other laymen like me to get a deeper understanding of this very interesting subject that is trying to figure out how nature works at its most fundamental level.

What I have learned so far

As its name suggests, the LHC is a huge particle accelerator used to accelerate and collide hadrons.

Hadrons are particles made of quarks held together by one of the four known fundamental forces: the strong force.

Different types of hadrons exist. Among the best known are protons and neutrons, which can combine to form atomic nuclei.

Different scientific theories (models) exist to explain the fundamental laws of nature at microscopic levels.

These theories make certain predictions which need to be verified through experimentation to ascertain their validity.

For example, a theory may predict that the collision between particles at a certain energy level would result in new particles emerging, pairs of particles and anti-particles interacting and radiating into other elements and so on.

The LHC can be used to produce the enormous levels of energy required to collide hadrons at speeds high enough to cause these particles to smash into their different sub-elements.

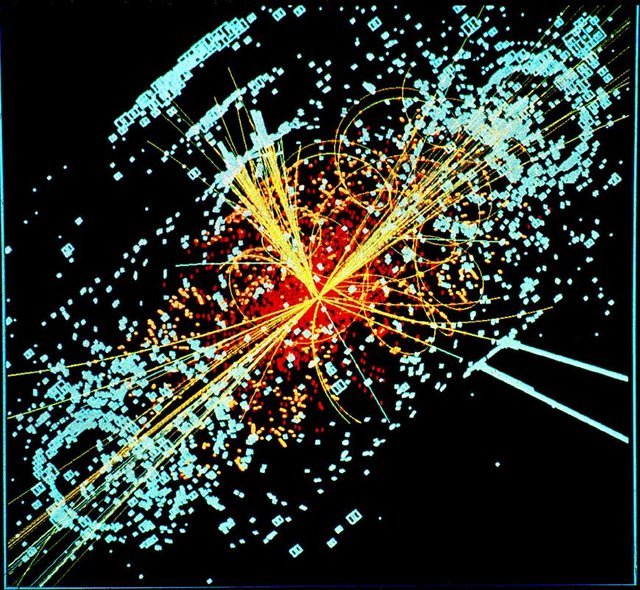

The result of these collisions is a mess of various particles literally flying in many directions, interacting with each other (in some cases several times) and decaying into other lower-energy particles, until finally being detected by the various detectors within the collision chamber.

[image credits - Wikipedia]

All of this happens on timescales so short that they are hard to comprehend, and the lifetime of some of the particles produced is so short that they cannot be detected directly. Rather, the artifacts produced by these particles interacting with each other, such as radiation, can be collected by the detectors.

It is the objective of the theories to predict the measurement of particles and radiations.

When enough data is gathered and analysed to corroborate a theory, it raises the level of confidence of the scientific community in that theory and brings it one step closer to being accepted as a universal law of nature.

Types of Particles and Clean Signal Isolation

So far @lemouth introduced us to 3 types of particles which belong to 2 categories: stable and unstable.

Stable particles live long enough to reach the detectors unaltered, leaving signals recognizable by scientists.

We were introduced to 3 stable particles: electrons, muons and photons.

Unstable particles decay almost instantly and result in new stable or unstable particles. For this reason unstable particles cannot be detected directly.

The LHC collides protons which are made of quarks and gluons. These two particles interact strongly with each other but can be separated through the high energy collisions.

Through radiation, these collisions produce more quarks and gluons.

Pairs of highly energetic particles can combine and radiate again into other, less energetic particles. The energy of the parent particles is shared among all produced particles so that the conservation of energy principle is preserved.

This process can happen several times until the resulting particles don't have enough energy to radiate and become stable.
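To make the energy-sharing idea concrete for fellow programmers, here is a minimal toy sketch. It is not real physics: the function name `toy_cascade`, the two-way split, the random split fraction and the stopping threshold are all assumptions of mine. It only illustrates that the energy of a parent is shared among its children and that the total is conserved at every step.

```python
import random

def toy_cascade(energy, threshold, rng):
    """Toy model: split a parent's energy between two children until
    every remaining particle is below `threshold`.
    Returns the list of final energies (arbitrary units)."""
    if energy < threshold:
        # Not enough energy left to radiate: this particle is final.
        return [energy]
    # Hypothetical rule: the first child takes 10% to 90% of the energy.
    fraction = rng.uniform(0.1, 0.9)
    first = energy * fraction
    second = energy - first  # the rest goes to the second child
    return toy_cascade(first, threshold, rng) + toy_cascade(second, threshold, rng)

rng = random.Random(42)  # fixed seed so the run is repeatable
final_energies = toy_cascade(100.0, 5.0, rng)
print(len(final_energies), "final particles, total energy", sum(final_energies))
```

However many particles come out, their energies add up to the energy of the original parent (up to floating-point rounding).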

We also learn that, by virtue of the theory of strong interactions, quarks and gluons cannot be observed on their own.

The unstable particles produced through the original collision and ensuing radiations all travel more or less in the same direction and are detected as jets of strongly-interacting particles, or simply jets.

Stable particles and jets are identified through the fingerprint that they leave within the detectors.

However these fingerprints can be extremely similar making it unavoidable to have confusion in some cases with regard to the true nature of a detected signal.

For example, an electron can sometimes be initially detected as a jet.

Scientists have devised rules which use certain properties of the measured signals to allow resolution of this confusion and determine with some level of confidence the true identity of the measured particle.

Also, not all particle detections are considered.

In order for a detected particle to be considered for the theory, it must undergo a selection process consisting of:

- Detected particles must satisfy minimum requirements on their measured properties. Particles meeting these requirements are referred to as baseline particles.

- Particle overlap (confusion between pairs of detected particles) must be detected, and certain particle candidates dismissed, in order to end up with "clean" signals. In effect the analysis should result in clean particle isolation. This will be expanded upon in future exercises but my understanding so far is that the mechanism should allow extraction of clean signals from the original mess resulting from the collision and the unavoidable measurement errors.

- Resulting particles are further selected through more stringent criteria into a final set of signal particles.
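The three selection steps above can be sketched in code as follows. This is only my rough illustration, not the actual analysis: the `Candidate` class, all threshold values and the overlap rule (drop a jet that sits very close to an electron) are hypothetical placeholders. The separation measure ΔR = √(Δη² + Δφ²) is a standard quantity, but its use here and the cut-off of 0.2 are my assumptions.

```python
import math
from dataclasses import dataclass

@dataclass
class Candidate:
    kind: str    # "electron", "muon", "photon" or "jet"
    pt: float    # transverse momentum in GeV
    eta: float   # pseudorapidity
    phi: float   # azimuthal angle in radians

# Hypothetical thresholds, chosen only for illustration.
BASELINE_MIN_PT = 10.0
BASELINE_MAX_ABS_ETA = 2.5
SIGNAL_MIN_PT = 25.0
OVERLAP_DELTA_R = 0.2

def delta_r(a, b):
    """Angular separation between two candidates."""
    dphi = abs(a.phi - b.phi)
    if dphi > math.pi:            # wrap around the circle
        dphi = 2 * math.pi - dphi
    return math.hypot(a.eta - b.eta, dphi)

def select_baseline(candidates):
    """Step 1: keep candidates that meet the minimum requirements."""
    return [c for c in candidates
            if c.pt >= BASELINE_MIN_PT and abs(c.eta) <= BASELINE_MAX_ABS_ETA]

def remove_overlaps(candidates):
    """Step 2 (toy rule): drop any jet that overlaps with an electron."""
    electrons = [c for c in candidates if c.kind == "electron"]
    return [c for c in candidates
            if not (c.kind == "jet"
                    and any(delta_r(c, e) < OVERLAP_DELTA_R for e in electrons))]

def select_signal(candidates):
    """Step 3: apply a more stringent cut to get the signal particles."""
    return [c for c in candidates if c.pt >= SIGNAL_MIN_PT]

event = [
    Candidate("electron", 40.0, 0.50, 1.00),
    Candidate("jet", 42.0, 0.55, 1.05),    # overlaps with the electron
    Candidate("jet", 60.0, -1.20, -2.00),
    Candidate("muon", 8.0, 0.10, 0.30),    # fails the baseline cut
    Candidate("photon", 15.0, 1.00, 2.50), # baseline, but not signal
]
signal = select_signal(remove_overlaps(select_baseline(event)))
print([c.kind for c in signal])
```

In this made-up event only the electron and the isolated jet survive all three steps.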

Some Particle Properties

All of the above steps make use of measured properties of the particles.

We were introduced to the following properties:

- Particle transverse momentum, which is the component of the particle momentum (mass * velocity) in the plane perpendicular to the collision beam. Correction: I was being naive here with regard to the momentum formula. The formula p = m·v is only valid at non-relativistic speeds. After looking it up, it turns out that the formula is actually p = γ·m₀·v, where m₀ is the mass at rest, v is the velocity and γ is the relativistic factor 1/√(1 − v²/c²). In non-relativistic conditions v << c, so γ ≈ 1 and p = m·v is a good approximation.

- Particle transverse energy, which is directly related to transverse momentum.

- Particle pseudorapidity, which describes the angle of the particle relative to the beam axis. In other words, post-collision particles take different paths predicted by the theories.
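Here is a small sketch of how the properties listed above could be computed from a particle's momentum components. The function names are my own, and I am assuming the usual convention that the beam runs along the z axis. The pseudorapidity formula η = −ln(tan(θ/2)), where θ is the angle to the beam axis, is the standard definition.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # metres per second

def lorentz_factor(v):
    """Relativistic factor gamma = 1 / sqrt(1 - v^2/c^2)."""
    return 1.0 / math.sqrt(1.0 - (v / SPEED_OF_LIGHT) ** 2)

def relativistic_momentum(rest_mass, v):
    """p = gamma * m0 * v (reduces to m0 * v when v << c)."""
    return lorentz_factor(v) * rest_mass * v

def transverse_momentum(px, py):
    """Momentum component in the plane perpendicular to the beam (z axis)."""
    return math.hypot(px, py)

def pseudorapidity(px, py, pz):
    """eta = -ln(tan(theta / 2)), theta being the angle to the beam axis.
    Undefined for a particle travelling exactly along the beam."""
    theta = math.atan2(math.hypot(px, py), pz)
    return -math.log(math.tan(theta / 2.0))

# At 60% of the speed of light, gamma is already noticeably above 1.
print(lorentz_factor(0.6 * SPEED_OF_LIGHT))
# A particle at 45 degrees to the beam axis.
print(transverse_momentum(3.0, 4.0), pseudorapidity(3.0, 4.0, 5.0))
```

A particle flying out perpendicular to the beam has a pseudorapidity of about 0, and the value grows as the path gets closer to the beam direction.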

Research paper used for @lemouth's exercise

The following research paper is at the center of @lemouth's exercise.

What is the subject of this paper?

The title does little to help me understand this :-) :

Search for top-squark pair production in final states with one lepton, jets, and missing transverse momentum using 36 fb⁻¹ of √s = 13 TeV pp collision data with the ATLAS detector

It seems like the paper discusses the attempt to discover a pair of quark types resulting from some particle interaction.

I'm sure that this will become clearer with future exercises and I'm happy to wait until then.

That's it for now. Phew! :-)

References

- CERN Large Hadron Collider (LHC)

- Search for top-squark pair production in final states with one lepton, jets, and missing transverse momentum using 36 fb⁻¹ of √s = 13 TeV pp collision data with the ATLAS detector

- Particle physics @ Utopian - Detecting particles at colliders and implementing this on a computer

- Particle physics @ Utopian - Implementing an LHC analysis on a computer: the physics objects

Great write-up! I have a couple of comments. But only a couple. Please don't take them personally. I really enjoyed what you wrote! My point is to show where you have room for improvement :)

In fact, we remove the overlap to end up with particles that are isolated from each other. We want clean signatures. Of course, I didn't fully address isolation yet (this is for next week) but I wanted to give you an appetizer ;)

All these misidentifications lead to a component of the background to any signal: fakes. Most of the time these are smallish, but sometimes they can be dominant.

Here, everything is relativistic. The p = mv relation has to be modified accordingly.

Finally, I think your post would benefit from being divided into different sections, making it easier to read for anyone not familiar with the topic :)

No problem, I was definitely looking for corrections and improvements so any criticism is most welcome.

I will add a note about isolation.

Ah, didn't think about that. I read about it before but it didn't cross my mind. Will correct the text.

Good idea. I will revise the structure of the post.

You have to think more of any other potential readers than of me. This is the key: they know less than you :)

I see why they call it a particle zoo. I also don't envy the data wranglers at CERN. The sheer amount of data reduction would make your eyes cross.

Not everything is recorded. Humanity does not have enough storage for that. There is a process reducing the rate to something manageable (throwing away the not-interesting stuff on the basis of a super quick decision making process).

Yes, I suspect there are many years of research and experience behind being able to do this...

To be capable of explaining difficult things in a simple way "normal" people can understand, is a gift and bridges two worlds. Thanks and steem on!

Thank you johano.


Wonderful write-ups. Steemit is such a place to earn and still learn at the same time, I love it here.

Thanks a bunch.

I'm not really a physics person but the picture caught my eye and made me read, interesting stuff I must say

I have also wanted to see first hand how the LHC works as a student a long time ago. As I have also mentioned to @lemouth.

Thanks for bringing me closer again. I will try to keep up with your updates.

A unique place which is the result of cooperation between many countries.

If you come to Europe in the near future, be sure to stop by in Switzerland to visit CERN =)

It's actually causing me a fuzzy feeling of excitement :D I find it great when people engage with this community in a way that puts genuine interest and learning first and dough second, and shows that one can profit from this even without the latter.