Changing the Utopian Review Questionnaire for the Translations Category

Repository

https://github.com/utopian-io/review.utopian.io

Components

The Review Questionnaire is the place where reviewers submit their answers and get the score for a pending contribution. While most categories may have a very streamlined experience with it, the translations' questionnaire has many issues.

The most common complaint about the questionnaire is the difficulty question. There are 4 difficulty levels (Easy, Average, High and Very High) and there is a lot of unfairness in how each project is scored.

Most projects are rated as "Average Difficulty", even though they aren't, as going for "High" will increase the score dramatically.

A few days ago, the Utopian voting bot was updated with new voting logic to make sure no great contributions go unvoted (while not-that-great ones get their upvotes). There is another step that would make the whole process better: changing the questionnaire.

Instead of presenting each question here and then proposing my changes in the next section, I decided to merge the presentation and the proposal for each question below, just to make it easier to comprehend.

The DaVinci team is already gathering their own proposals for the questionnaire, so we will have the best and fairest questionnaire in no time!

Proposal Description

I'll present each question, together with its issues and proposals in sections to make it more readable.

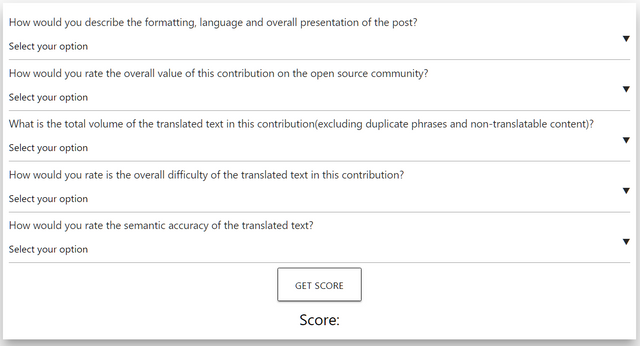

How would you describe the formatting, language and overall presentation of the post?

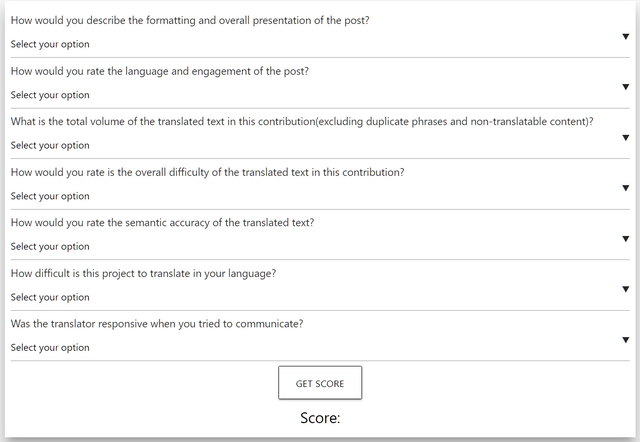

This question is too generic and could be broken into 2 parts. The first part should be about the formatting and overall presentation of the post. A reviewer should take into account whether there are images/videos and whether the author has taken steps to make the reading experience better and easier on the eyes.

The second part would be about the language (are there syntax mistakes? did the author forget or misuse punctuation marks like I'm doing in this post?) and whether the post tries to engage visitors in conversation and get them to learn more about the project and/or the category.

How would you rate the overall value of this contribution on the open source community?

This question should not be in the questionnaire. It might make sense, but all contributions are valuable. And since most contributions get "This contribution adds some value to the open source community or is only valuable to the specific project," the question shouldn't make a difference in the score.

What is the total volume of the translated text in this contribution?

With the way the questionnaire is now built, the score does not change whether you translate 1000, 1200, 2000 or 5000 words, so most translators translate about 1150 words on average. But if someone translates fewer words, they get penalised. The current volume levels are: Less than 400 words, 400 - 700 words, 700 - 1000 words, and More than 1000 words.

There should be more volume levels (at least 2) and the current levels should be changed. My proposal is to have the following volume levels:

- More than 3000 words

- 2500 - 3000 words

- 2000 - 2500 words

- 1500 - 2000 words

- 1000 - 1500 words

- 500 - 1000 words

- Less than 500 words
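As a rough illustration, the proposed volume levels could be mapped from a word count as in the sketch below. The function name `volume_level` is hypothetical, and the handling of boundary values is an assumption: the list does not say whether, for example, exactly 1000 words belongs to "500 - 1000" or "1000 - 1500", so the sketch treats the lower bound of each range as inclusive.

```python
def volume_level(word_count: int) -> str:
    """Return the proposed volume level for a translated word count.

    Assumption: the lower bound of each range is inclusive, so exactly
    1000 words falls under "1000 - 1500 words". Exactly 3000 words stays in
    "2500 - 3000 words" because the top level is "More than 3000 words".
    """
    if word_count < 0:
        raise ValueError("word_count cannot be negative")
    if word_count > 3000:
        return "More than 3000 words"
    if word_count >= 2500:
        return "2500 - 3000 words"
    if word_count >= 2000:
        return "2000 - 2500 words"
    if word_count >= 1500:
        return "1500 - 2000 words"
    if word_count >= 1000:
        return "1000 - 1500 words"
    if word_count >= 500:
        return "500 - 1000 words"
    return "Less than 500 words"
```

With these levels, a typical 1150-word contribution lands in "1000 - 1500 words", while a 2600-word one is recognised separately instead of sharing a single "More than 1000 words" answer.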

How would you rate the overall difficulty of the translated text in this contribution?

This question is the one that makes our reviewing lives difficult. There are 4 difficulty levels (Extremely High, High, Average and Low), and each step is removing a big chunk of the score. My proposal is to have 7 (if not more) difficulty levels, and each project should have a predefined difficulty level:

- Very High

- High to Very High (middle ground between 1 and 3)

- High

- Average to High (middle ground between 3 and 5)

- Average

- Low to Average (middle ground between 5 and 7)

- Low

There should also be an extra question that takes into account the actual difficulty of the project in each language and gives a small bump (or penalty) to the score. The reviewer could rate the "language" difficulty of each project as:

- Very Difficult

- Difficult

- Neutral

- Easy

- Very Easy
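To make the idea concrete, here is a minimal sketch of a predefined per-project difficulty combined with a small per-language adjustment. Everything numeric in it is an assumption for illustration only: the point values (`POINTS_PER_LEVEL`, `LANGUAGE_BUMP`), the `PROJECT_DIFFICULTY` table and the project name `example-project` are hypothetical, since the actual scores would be decided by the @utopian-io team.

```python
from enum import IntEnum


class ProjectDifficulty(IntEnum):
    """The 7 proposed difficulty levels, from lowest to highest."""
    LOW = 1
    LOW_TO_AVERAGE = 2
    AVERAGE = 3
    AVERAGE_TO_HIGH = 4
    HIGH = 5
    HIGH_TO_VERY_HIGH = 6
    VERY_HIGH = 7


class LanguageDifficulty(IntEnum):
    """The 5 proposed per-language ratings, centred on Neutral."""
    VERY_EASY = -2
    EASY = -1
    NEUTRAL = 0
    DIFFICULT = 1
    VERY_DIFFICULT = 2


# Hypothetical values: real weights are for the Utopian team to decide.
POINTS_PER_LEVEL = 2.0
LANGUAGE_BUMP = 0.5

# Hypothetical table of predefined project difficulties.
PROJECT_DIFFICULTY = {
    "example-project": ProjectDifficulty.AVERAGE_TO_HIGH,
}


def difficulty_points(project: str, language: LanguageDifficulty) -> float:
    """Base points from the project's fixed level, plus a small language bump."""
    base = PROJECT_DIFFICULTY[project] * POINTS_PER_LEVEL
    return base + language * LANGUAGE_BUMP
```

Because the project level comes from a lookup table, the reviewer only picks the language rating, which moves the result by a small amount in either direction instead of jumping a whole difficulty step.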

How would you rate the semantic accuracy of the translated text?

This question is also too punishing for the contributions. We are trying to choose an answer with our own metrics, but that's a problem. One reviewer might choose "Good" instead of "Very Accurate" with 1 mistake, while the other might choose "Very Accurate" with 3 mistakes.

Of course, this one depends on the impact each mistake has on the translation. Forgetting to translate half a string has a bigger impact than using a synonym. The questionnaire should move to a "count of mistakes" type of answer. This one needs a lot of thought (to make clear what counts as a mistake). It could also be broken up into 2 questions:

- How many serious issues did you find in this contribution?

- How many non-altering mistakes did you find in this contribution?
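A "count of mistakes" answer could feed the score along these lines. This is only a sketch under stated assumptions: the function name `accuracy_points`, the maximum of 10 points and the penalty sizes are all hypothetical, chosen just to show that serious issues weigh more than non-altering ones and that two reviewers entering the same counts get the same result.

```python
def accuracy_points(
    serious_issues: int,
    minor_mistakes: int,
    max_points: float = 10.0,      # hypothetical ceiling
    serious_penalty: float = 3.0,  # hypothetical cost per serious issue
    minor_penalty: float = 0.5,    # hypothetical cost per non-altering mistake
) -> float:
    """Deduct a fixed penalty per counted mistake, never going below zero."""
    if serious_issues < 0 or minor_mistakes < 0:
        raise ValueError("mistake counts cannot be negative")
    deduction = serious_issues * serious_penalty + minor_mistakes * minor_penalty
    return max(0.0, max_points - deduction)
```

For example, under these assumed weights one serious issue plus two non-altering mistakes would cost 4 points in total, regardless of which reviewer did the counting.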

New question proposal:

Another question we could add is one that gives a little incentive for better communication between a translator and a reviewer. An extra point could be given if the translator responds promptly, and a point should be subtracted if the translator was unresponsive to a reviewer's communication.

Mockups / Examples

This is how the questionnaire is currently structured:

And this is the questionnaire with my proposals:

Instead of having a lot of different images here, I've taken the initiative to implement my proposals into a copy of the actual questionnaire, just to make it a little bit more of an interactive proposal. You can find it here together with the current one.

Please note: while I've modified some of the scores, I'm not an expert on that. My proposal is just for the Questions/Answers. The scores should be decided and changed by the @utopian-io team.

Benefits

The translations category is currently one of the biggest (if not the biggest) in the Utopian ecosystem. By implementing part (or all) of my proposals, the questionnaire will be able to give a more accurate and fair distribution of incentives across a larger number of contributions.

Hello @aristotle.team! Although this proposal is cool, I would like to mention that this is not the kind of "Idea" contribution we want to see in the suggestion category. Suggestions should not be related to "organizational process" but should be about the "technical" aspect of the project, which includes ideas on implementing new features and, of course, enhancing the existing ones. The #iamutopian tag was created with the aim of getting valuable feedback from both CMs and moderators to help us improve the general/specific category guidelines and also the questionnaires across each category. Having an idea like this in an iamutopian post would be greatly appreciated.

PS: This post was not scored according to the questionnaire.


I discussed it with one of the CMs before posting this, and I was told to post it under the "idea/suggestions" tag, but I guess it was the wrong category.

Thank you.

- @dimitrisp

Thank you for your review, @knowledges! Keep up the good work!

This was tagged both "blog" and "ideas," with ideas tagged first. So, I won't be reviewing it officially for Utopian. I do, however, have comments. Mostly, they can be summed up as "what @ruth-girl said." But beyond that, I want to stress the need for granularity.

Also, beyond accuracy and semantic accuracy, I would also suggest giving some love to readability. And, yes, I realize the original text does have an effect on that. But I do think you should give some weight to how good the text is in the translation. Text can be semantically correct and accurate, while being unduly difficult and/or not fun to read. Naturally, my own outlook is colored by the fact that the majority of my own translation work has been in fiction, but it holds true for any kind of translation.

There are strings (and in software like ReactOS, that's the standard) where that isn't possible, lovely as it would be. Apart from that, the questionnaire does not currently support this kind of review, but it would be a nice addition.

Thank you for your comment!

(The "blog" tag was added as a generic tag, not directly related to the utopian tag. If this causes any issues, I'll stop using it if the post in question is not a "Utopian Blog" post.)

I agree with the ideas presented here. We need to address the questionnaire issue ASAP.

My points are:

Hopefully, all these will be rectified soon ;)

I am so proud of you @aristotle.team!

Aristotle is proud of you too! xD

Hey, I really like that last question; it is one of the most important for fixing errors ASAP. If we have that question, the translator will be more attentive and responsive in terms of correcting the errors in their translation. The rest of the questions are awesome for me.

P.S. We should have some examples of an above-average post to make translators aware of how their post can be good and above average.

Hopefully, we will have such examples soon, together with the questionnaire (if not earlier)

This was a really great breakdown of the current questionnaire’s issues. I began writing a comment multiple times... then elear’s post came out and I switched over there... then the comment kept on growing and I ended up writing my own post on the matter.

Thank you for this, though, which was definitely a good stepping stone from all the reflection that followed and is going on right now.

I really like this idea and it's totally the embodiment of what I have in mind. Nice job!

