Digital Images | Image Enhancement

I am back with some more interesting information about Digital Images. Hope you find the post useful.

The images we capture with digital cameras or any other digital medium are not always of the best quality. Lighting plays games with the image, sometimes making it too bright or too dark, which causes an apparent loss of fine detail and a poor quality image. It's not always the light though; at times the image acquisition equipment has limitations too. But the good news is, we can improve the quality of digital images. It's just a game of Pixels. ;-)

So as the title suggests, we will talk about Image Enhancement techniques today.

Images are usually enhanced for a specific purpose, and different kinds of images call for different techniques. For example, the techniques used to enhance a landscape image would differ from those applied to a medical image (an X-ray, for instance).

There are two different approaches to Image Enhancement.

- Spatial Domain Transformations

- Frequency Domain Transformations

We will first talk about Spatial Domain Transformations, which manipulate the pixels directly. As you know, an image is just a group of pixels with different gray levels.

A specific function is applied to every pixel, one by one, to get the desired image.

g(x,y) = T[f(x,y)]

f(x,y) is the input image, where x and y are the pixel coordinates.

T is the function applied to each pixel.

g(x,y) is the resulting image.
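To make g(x,y) = T[f(x,y)] concrete, here is a minimal sketch in plain Python. It assumes a grayscale image stored as a list of rows of integers; the helper name `apply_point_transform` and the sample transform are made up for illustration.

```python
def apply_point_transform(image, transform):
    """Return g where g[y][x] = transform(f[y][x]) for every pixel."""
    return [[transform(pixel) for pixel in row] for row in image]


# A tiny 2x3 grayscale image f (gray levels in 0..255).
f = [
    [0, 64, 128],
    [192, 255, 32],
]

# Example T (an assumption): brighten every pixel by 10, capped at 255.
g = apply_point_transform(f, lambda r: min(r + 10, 255))
print(g)  # [[10, 74, 138], [202, 255, 42]]
```

Every technique in the Gray Level Transformations section below is just a different choice of T.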

In Spatial Domain there are various techniques of image enhancement. Let's go through each.

Gray Level Transformations

There are three types of Gray Level Transformations.

- Linear (Negative/Identity) Transformations

- Logarithmic (and Inverse Log) Transformations

- Power Law (nth power and nth root) Transformations

Linear Transformations

As the name suggests, the Identity Transformation maps each input pixel to an output pixel of the same value (s = r). It is as simple as that.

The Negative Transformation inverts each pixel.

s = (L - 1) - r

s is the output pixel, L is the total number of gray levels and r is the input pixel.

If L = 256 and r = 0 (black), then s = (256 - 1) - 0 = 255 (white).

Lighter pixels become dark and darker pixels become light.

(The total number of gray levels is 256 when the image is 8 bpp, i.e. 8 bits per pixel.)
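The negative transformation can be sketched in a few lines of Python. The function name `negative` and the list-of-rows image layout are assumptions made for this example.

```python
def negative(image, levels=256):
    """Apply s = (L - 1) - r to every pixel of a grayscale image."""
    return [[(levels - 1) - r for r in row] for row in image]


image = [
    [0, 100],
    [200, 255],
]
print(negative(image))  # [[255, 155], [55, 0]]
```

Applying the function twice gives back the original image, which is a quick sanity check.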

Logarithmic Transformations

We use this function when we need to enhance the darker pixels and compress the lighter ones.

s = c log (r + 1)

s and r are the output and input pixels, and c is a constant.

The Inverse Log transformation gives the opposite result: it expands the lighter pixels and compresses the darker ones.
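Here is a rough sketch of both transformations in Python. The choice of c is an assumption: it is set to (L - 1) / log(L) so that the brightest input still maps to L - 1. The inverse is taken as the mathematical inverse of that mapping, and the function names are made up for this example.

```python
import math


def log_transform(image, levels=256):
    """Apply s = c * log(r + 1), with c chosen so that L - 1 maps to L - 1."""
    c = (levels - 1) / math.log(levels)
    return [[round(c * math.log(r + 1)) for r in row] for row in image]


def inverse_log_transform(image, levels=256):
    """Apply s = exp(r / c) - 1, the inverse of the mapping above."""
    c = (levels - 1) / math.log(levels)
    return [[round(math.exp(r / c) - 1) for r in row] for row in image]


row = [[0, 10, 100, 255]]
print(log_transform(row))  # [[0, 110, 212, 255]]
```

Notice how the dark value 10 is pushed up to 110, while the top of the range barely moves.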

Power Law Transformations

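This is used for enhancing images for different display screens.

s = c r^γ

You can spot the gamma symbol (γ) here. The value of gamma decides the appearance of the image: with pixel values scaled to the range 0 to 1, a gamma below 1 brightens the image and a gamma above 1 darkens it. Different display devices have different gamma values, and adjusting the image to compensate for them is called gamma correction.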
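A minimal sketch of the power law in Python follows. It assumes c = 1 and that pixels are first scaled to the range 0 to 1 and then scaled back to 0..255; the function name `power_law` is made up for this example.

```python
def power_law(image, gamma, levels=256):
    """Apply s = r^gamma on pixels normalized to 0..1 (c = 1 assumed)."""
    max_level = levels - 1
    return [
        [round(max_level * (r / max_level) ** gamma) for r in row]
        for row in image
    ]


row = [[0, 64, 255]]
print(power_law(row, 0.5))  # [[0, 128, 255]]  (gamma < 1 brightens)
print(power_law(row, 2.0))  # [[0, 16, 255]]   (gamma > 1 darkens)
```

Black and white stay where they are; only the tones in between are shifted.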

I have shown how the image looks at different values of gamma.


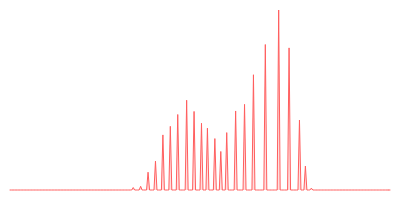

Histogram Image

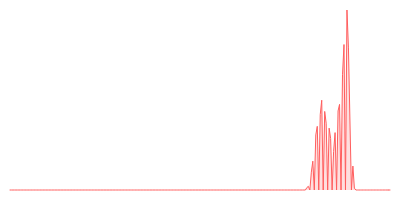

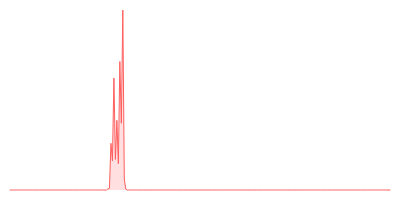

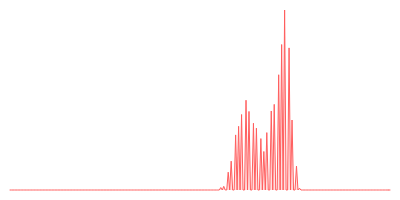

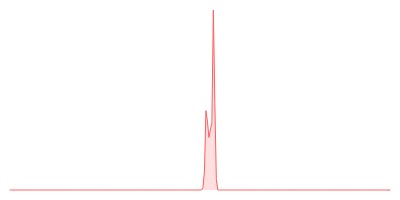

As you know, a histogram is a kind of graph that has categories on the x-axis and the frequency of each category on the y-axis.

The histogram of an image has the range of gray levels on the x-axis and, on the y-axis, the frequency of occurrence of each gray level in the image. For example, for an 8 bpp image the x-axis ranges from 0 to 255.

The histogram of an image helps us understand how the image may look. For instance,

- The image is bright if most of the tall bars are on the right side.

- The image is dark if most of the tall bars are on the left side.

- The image has high contrast if the bars are spread across a wide range of intensity levels.

- The image has low contrast if the bars are packed into a narrow range of intensity levels.

[Figures: Bright Image, Dark Image, High Contrast Image, Low Contrast Image]
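Counting a histogram takes only a few lines. This sketch assumes a list-of-rows image and uses a 3-bit example (8 gray levels) so the output stays short; the function name `histogram` is made up for this example.

```python
def histogram(image, levels=256):
    """Count how many pixels fall on each gray level."""
    counts = [0] * levels
    for row in image:
        for pixel in row:
            counts[pixel] += 1
    return counts


# A tiny 3-bit image: gray levels run from 0 to 7.
image = [
    [0, 1, 1],
    [7, 7, 7],
]
print(histogram(image, levels=8))  # [1, 2, 0, 0, 0, 0, 0, 3]
```

The index in the list is the gray level (x-axis) and the value is its frequency (y-axis).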

What is Histogram Equalization?

The main idea behind this concept is to get a flat, evenly distributed histogram that uses as much of the intensity range as possible, which gives the image better contrast.
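One common way to do this, sketched below under my own assumptions, is to map each gray level through the cumulative histogram: s = round((L - 1) * cdf(r)). The function name `equalize` is made up, and the 3-bit example keeps the numbers small.

```python
def equalize(image, levels=256):
    """Map each gray level through the scaled cumulative histogram."""
    counts = [0] * levels
    for row in image:
        for pixel in row:
            counts[pixel] += 1

    total = sum(counts)
    mapping = []
    running = 0
    for count in counts:
        running += count  # cumulative number of pixels up to this level
        mapping.append(round((levels - 1) * running / total))

    return [[mapping[pixel] for pixel in row] for row in image]


# Low contrast 3-bit image: every pixel sits between 3 and 5.
image = [
    [3, 3, 4, 4],
    [4, 4, 5, 5],
]
print(equalize(image, levels=8))  # [[2, 2, 5, 5], [5, 5, 7, 7]]
```

The output now spans levels 2 to 7 instead of 3 to 5, so the same picture uses more of the available range.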


Arithmetic and Logic Operations on Images

Arithmetic and logic operations on images are useful for enhancement as well. You will see how and why shortly. Two or more images are taken as input, and the operator is applied pixel by pixel to generate the output pixels of the resulting image. For logic operations the input images are usually in binary form; if they are in integer form, the operations are performed bit-wise.

- Adding two or more images can help in noise reduction. For example, if the same scene is captured at different points in time, those images can be added (and averaged), so that whatever changed in between, such as noise or slight movement, is smoothed out.

In actual programming, pixels are mostly represented by integers or bytes, which gives us a limited number of bits. In an 8 bpp image, for example, the maximum pixel value is 255, which is 11111111 in binary (8 bits). If a sum goes beyond that maximum, overflow happens.

When this happens, we either wrap or saturate.

In wrapping, we subtract the number of gray levels (256 for an 8 bpp image) from the overflowed value, so that counting starts again from the minimum value. For example, 260 wraps to 4.

In saturation, all the overflowed values are set to the maximum possible value in the allowed range.

Saturation may be good if only a few pixel values overflow. But if many values overflow and all are set to the same value (for instance 255), the image will be white/bright in most areas and we may lose important information or detail.

One solution is to represent pixels as floats while programming, because a float gives us a much larger range of numbers.
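The difference between wrapping and saturation is easy to see in a small sketch. The function names `add_images` and `average_images` and the `mode` parameter are made up for this example, and images are assumed to be lists of rows of the same size.

```python
def add_images(a, b, mode="saturate", levels=256):
    """Add two images pixel by pixel, handling overflow by wrap or saturate."""
    if mode not in ("wrap", "saturate"):
        raise ValueError("mode must be 'wrap' or 'saturate'")
    result = []
    for row_a, row_b in zip(a, b):
        out_row = []
        for pa, pb in zip(row_a, row_b):
            total = pa + pb
            if mode == "wrap":
                total %= levels                  # start again from 0
            else:
                total = min(total, levels - 1)   # clamp to the maximum
            out_row.append(total)
        result.append(out_row)
    return result


def average_images(images):
    """Average several shots of the same scene to smooth out noise."""
    count = len(images)
    height, width = len(images[0]), len(images[0][0])
    return [
        [round(sum(img[y][x] for img in images) / count) for x in range(width)]
        for y in range(height)
    ]


a = [[100, 200], [250, 10]]
b = [[100, 100], [10, 20]]
print(add_images(a, b, mode="saturate"))  # [[200, 255], [255, 30]]
print(add_images(a, b, mode="wrap"))      # [[200, 44], [4, 30]]

shots = [[[100, 104]], [[102, 100]], [[98, 102]]]
print(average_images(shots))              # [[100, 102]]
```

Averaging divides the sum by the number of images, so the result stays inside the allowed range without any wrapping or saturation.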

- Subtraction of two or more images helps in motion detection or finding contrast.

To deal with negative numbers in the output we can wrap here too, or set all negative pixels to zero, which means black pixels. Again, this may end in a loss of information. So the proper solution is to keep only the absolute value of each difference.

Did you know? Contrast = Maximum pixel intensity of an image - Minimum pixel intensity of an image
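Here is a small sketch of subtraction with absolute values, plus the contrast formula above. The function names and the two sample frames are made up for illustration.

```python
def absolute_difference(a, b):
    """Subtract two images pixel by pixel and keep the absolute value."""
    return [
        [abs(pa - pb) for pa, pb in zip(row_a, row_b)]
        for row_a, row_b in zip(a, b)
    ]


def contrast(image):
    """Contrast = maximum pixel intensity - minimum pixel intensity."""
    pixels = [pixel for row in image for pixel in row]
    return max(pixels) - min(pixels)


# A bright object (200) moves one pixel to the right between two frames.
before = [[50, 50, 50], [50, 200, 50]]
after = [[50, 50, 50], [50, 50, 200]]

print(absolute_difference(before, after))  # [[0, 0, 0], [0, 150, 150]]
print(contrast(before))                    # 150
```

The non-zero pixels in the difference mark both the place the object left and the place it arrived.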

- Multiplication can help in making the image brighter or darker. If pixels are multiplied by a number greater than 1, we get higher values, i.e. they move to the brighter side. If pixels are multiplied by a number less than 1, we get lower values, i.e. they move to the darker side.

- Division can help in motion detection, like Subtraction. Where subtraction gives the absolute change, division gives the fractional change.

- AND-ing is the intersection of images. It helps us find the objects in the images which didn't move (they have the same pixel values).

- OR-ing is the union of images. It helps us find all the objects that appeared in any of the images at some point in time.

- XOR-ing helps in change detection as well. The truth table of XOR looks like this:

0 xor 0 = 0

0 xor 1 = 1

1 xor 0 = 1

1 xor 1 = 0

It shows that 0 in a resulting pixel means there was no change and 1 means there was a change.
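The three logic operations can be tried on two tiny binary images. The helper name `combine` and the sample frames are assumptions for this sketch; the operators themselves come from Python's standard `operator` module.

```python
import operator


def combine(a, b, op):
    """Apply a bit-wise operator to two images pixel by pixel."""
    return [
        [op(pa, pb) for pa, pb in zip(row_a, row_b)]
        for row_a, row_b in zip(a, b)
    ]


# Binary images: 1 marks an object pixel, 0 marks background.
frame1 = [[0, 1, 1, 0]]
frame2 = [[0, 0, 1, 1]]

print(combine(frame1, frame2, operator.and_))  # [[0, 0, 1, 0]]
print(combine(frame1, frame2, operator.or_))   # [[0, 1, 1, 1]]
print(combine(frame1, frame2, operator.xor))   # [[0, 1, 0, 1]]
```

AND keeps only the pixel that is set in both frames, OR keeps everything that was set in either frame, and XOR marks the pixels that changed.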

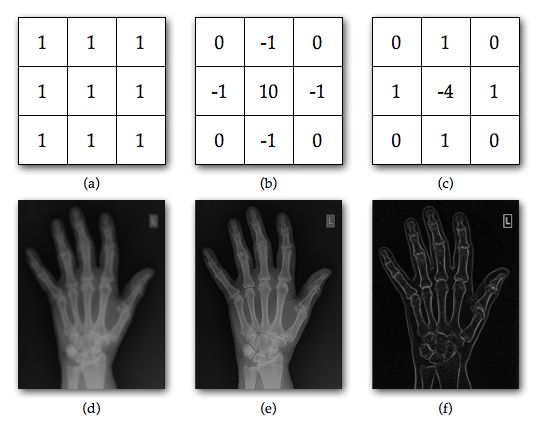

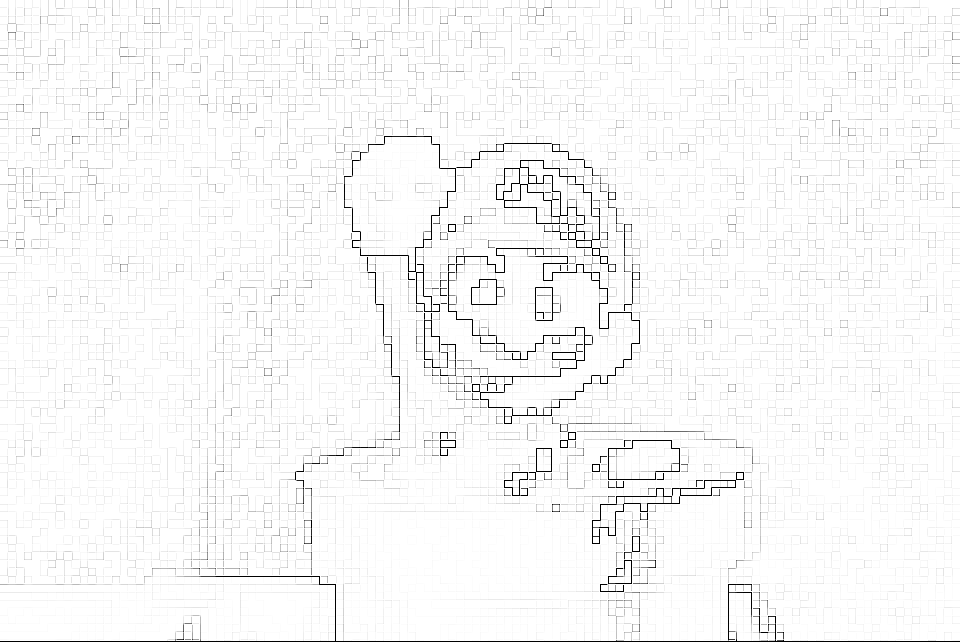

Concepts of Mask, Convolution and Filtering

Masking, Filtering and Convolution are all in the same boat.

A mask is applied to each point/pixel of an image, and the resulting image depends on the mask that was applied. The process is called Convolution.

Like an image, a Mask/Filter is a matrix, usually with an odd number of rows and columns such as 3x3 or 5x5 (odd because we need a middle entry for the convolution process).

How is convolution done?

It is best described in this link. The explanation couldn't be simpler than that.
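As a rough sketch of the usual procedure: flip the mask, place its middle entry over a pixel, multiply each mask entry with the pixel underneath it, and sum the products. The code below assumes a square mask of odd size and treats pixels outside the image as 0; both choices, and the function name `convolve`, are my own assumptions.

```python
def convolve(image, mask):
    """Convolve a grayscale image with a square, odd-sized mask."""
    height, width = len(image), len(image[0])
    k = len(mask) // 2  # distance from the middle entry to the mask border

    # True convolution flips the mask in both directions first.
    flipped = [row[::-1] for row in mask[::-1]]

    output = [[0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            total = 0
            for j in range(-k, k + 1):
                for i in range(-k, k + 1):
                    yy, xx = y + j, x + i
                    # Pixels outside the image are treated as 0.
                    if 0 <= yy < height and 0 <= xx < width:
                        total += image[yy][xx] * flipped[j + k][i + k]
            output[y][x] = total
    return output


# A single bright pixel in the middle of a black image.
impulse = [
    [0, 0, 0],
    [0, 1, 0],
    [0, 0, 0],
]
mask = [
    [1, 2, 3],
    [4, 5, 6],
    [7, 8, 9],
]
print(convolve(impulse, mask))  # [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
```

Convolving a single bright pixel reproduces the mask itself, which is a handy way to check that the flipping is done correctly.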

Purpose of Masking or Filtering

- Blurring Effect

- Edge Detection

- Noise Reduction

- Sharpening

A blurring effect is sometimes applied to suppress the insignificant details so that the significant ones can stand out.

Blurring is different from zooming because, if you remember from my previous post, zooming increases the number of pixels and blurring doesn't.

Edge Detection is applied because objects in an image can be told apart only when they have clear, sharp boundaries or edges.
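To give a feel for blurring and edge detection, here is a small sketch with two commonly used 3x3 masks: an averaging mask for blurring and a Laplacian mask for edges. These particular masks, the function name `apply_mask`, and the choice to drop the border pixels are my own assumptions. Both masks are symmetric, so flipping them for convolution makes no difference.

```python
def apply_mask(image, mask):
    """Slide a 3x3 mask over the interior pixels; border pixels are dropped."""
    height, width = len(image), len(image[0])
    output = []
    for y in range(1, height - 1):
        row = []
        for x in range(1, width - 1):
            total = 0
            for j in range(3):
                for i in range(3):
                    total += image[y + j - 1][x + i - 1] * mask[j][i]
            row.append(total)
        output.append(row)
    return output


BLUR = [[1 / 9] * 3 for _ in range(3)]              # average of 9 neighbours
LAPLACIAN = [[0, 1, 0], [1, -4, 1], [0, 1, 0]]      # responds to sudden changes

# A dark region (10) on the left and a bright region (90) on the right.
image = [
    [10, 10, 90, 90],
    [10, 10, 90, 90],
    [10, 10, 90, 90],
]

blurred = apply_mask(image, BLUR)
print([round(v, 1) for v in blurred[0]])  # [36.7, 63.3]
print(apply_mask(image, LAPLACIAN))       # [[80, -80]]
```

The blur turns the sharp step from 10 to 90 into a gentler one, while the Laplacian gives a strong response exactly where the boundary is. On a flat area the Laplacian gives 0.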

These were the basic concepts related to the Spatial Domain in Image Enhancement. We will move to the Frequency Domain in the next post soon.

Book Reference

Gonzalez R.C., Woods R.E., Digital Image Processing, 2nd Ed., Prentice Hall, 2002.

Internet References

Image Tools

Non-credited images are edited with the help of this tool.

http://pinetools.com/c-images/

Note: I have used black and white images on purpose. Color Image Processing will be discussed later.

Thank you for your Patience
