ELIZA: THE BEGINNING OF THE ERA OF ARTIFICIAL INTELLIGENCE

ELIZA

ELIZA was one of the first natural language processing computer programs, created from 1964 to 1966 at the MIT Artificial Intelligence Laboratory by Joseph Weizenbaum. Weizenbaum regarded the program as a way to show the superficiality of communication between man and machine, but he was surprised by how many people attributed human-like feelings to it, including his own secretary. ELIZA worked by using a 'pattern matching' and substitution methodology that gave users an illusion of understanding on the part of the program, but it had no built-in framework for contextualizing events.
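To make 'pattern matching and substitution' a little more concrete, here is a minimal Python sketch under stated assumptions. The rules, the pronoun swaps, and the `respond` function are hypothetical illustrations, not Weizenbaum's original script (which was written in MAD-SLIP and used a much richer rule format). Each rule pairs a pattern that picks apart the user's sentence (a "decomposition") with a template that rebuilds the captured words into a reply (a "reassembly").

```python
import re

# Hypothetical pronoun swaps that turn the user's own words back on them.
REFLECTIONS = {
    "i": "you", "me": "you", "my": "your", "am": "are",
    "you": "I", "your": "my", "myself": "yourself",
}

# Hypothetical (pattern, template) rules, tried in order.
# The pattern must match the whole sentence; its groups fill the template.
RULES = [
    (re.compile(r"i am (.*)", re.IGNORECASE), "Why do you say you are {0}?"),
    (re.compile(r"i feel (.*)", re.IGNORECASE), "Do you often feel {0}?"),
    (re.compile(r".*\bmy (.*)", re.IGNORECASE), "Your {0}?"),
]

def reflect(fragment: str) -> str:
    """Lowercase a captured fragment and swap first- and second-person words."""
    return " ".join(REFLECTIONS.get(w, w) for w in fragment.lower().split())

def respond(sentence: str) -> str:
    """Return the reply from the first rule whose pattern matches the sentence."""
    text = sentence.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.fullmatch(text)
        if match:
            return template.format(*(reflect(g) for g in match.groups()))
    # No rule matched: fall back to a content-free prompt.
    return "Please go on."

print(respond("I feel tired of my job."))
```

Running this prints "Do you often feel tired of your job?". Nothing in the sketch models meaning: the program only rearranges the user's own words, which is why such a system has no framework for contextualizing events.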

ELIZA AS DOCTOR (PSYCHOTHERAPIST)

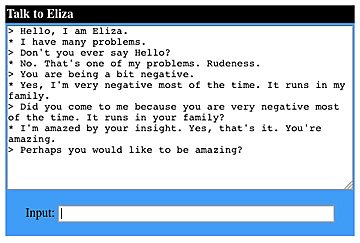

The most famous of ELIZA's scripts, DOCTOR, simulated a Rogerian psychotherapist. Dr. Weizenbaum made ELIZA into a therapist because therapists often ask open-ended questions. Therapists aren’t supposed to give you advice, and they’re not supposed to tell you what to do. They’re supposed to ask questions and trick you into Saying More, on the grounds that this will be Revelatory, will Help You Figure Out Things Yourself, and will Aid Your Mental Health. This made things easy, programming-wise. All ELIZA had to do was “listen” to what you said (i.e., parse your sentence in a very basic way) and then ask you a question somehow related to the sentence you had typed. So if you mentioned your sister, say, ELIZA would reply with “Tell me more about your sister.” In experiments during the 1960s, people were fooled by ELIZA. They were told that a real live therapist was talking to them from a second computer, and they believed it.
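The "mention your sister, get asked about your sister" behavior can be sketched as a keyword lookup. ELIZA's scripts ranked keywords so that the most important one in a sentence chose the reply; the keyword list, ranks, replies, and the `doctor_reply` function below are invented for illustration and are far simpler than the real DOCTOR script, which used decomposition and reassembly rules rather than fixed replies.

```python
import string

# Hypothetical keyword table: keyword -> (rank, reply).
# When a sentence contains several keywords, the highest rank wins.
KEYWORDS = {
    "dream": (4, "What does that dream suggest to you?"),
    "sister": (3, "Tell me more about your sister."),
    "mother": (3, "Tell me more about your family."),
    "always": (1, "Can you think of a specific example?"),
}

# Content-free prompt used when no keyword is found, so the "therapist"
# keeps the user talking without understanding anything.
FALLBACK = "Please go on."

def doctor_reply(sentence: str) -> str:
    """Return the reply for the highest-ranked keyword in the sentence."""
    words = [w.strip(string.punctuation).lower() for w in sentence.split()]
    found = [w for w in words if w in KEYWORDS]
    if not found:
        return FALLBACK
    # max() keeps the first keyword seen if two share the top rank.
    best = max(found, key=lambda w: KEYWORDS[w][0])
    return KEYWORDS[best][1]

print(doctor_reply("My sister is always late!"))
```

Here "sister" outranks "always", so the program prints "Tell me more about your sister." An input with no keyword at all simply gets "Please go on.", which is exactly the kind of open-ended prompt that made the therapist role so convenient.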

ELIZA was one of the first chatterbots, and it was also regarded by some as one of the first programs capable of passing the Turing Test. I will tell you about the famous TURING TEST in another post.

Many academics believed that the program could positively influence the lives of many people, particularly those suffering from psychological issues, and that it could aid doctors treating such patients. Though ELIZA was capable of engaging in discourse, it could not converse with true understanding. Nevertheless, many early users were convinced of ELIZA’s intelligence and understanding.

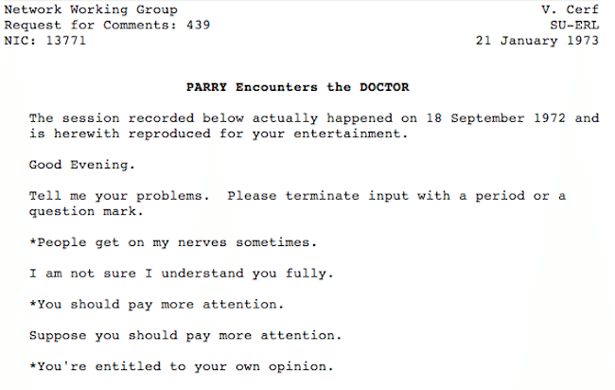

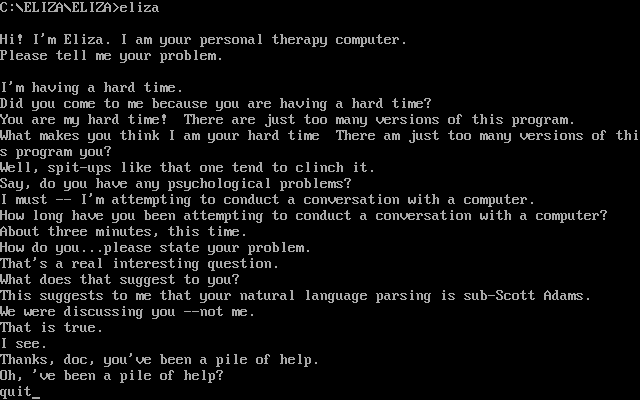

ELIZA BEING FUNNY & STUPID

Look at this ridiculous conversation from 1972 between ELIZA and PARRY, Kenneth Colby's program that simulated a patient with paranoid schizophrenia:

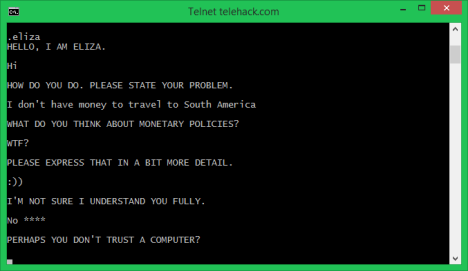

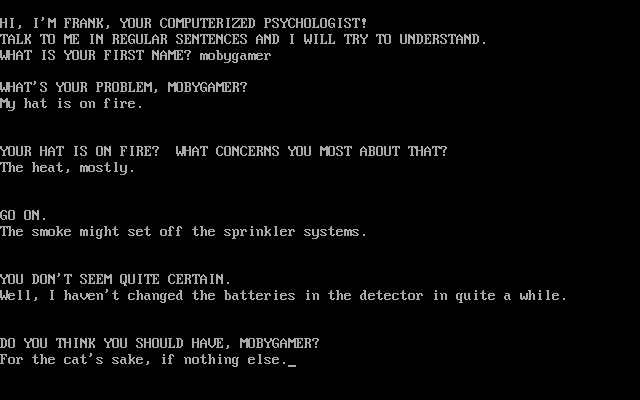

ELIZA sometimes undergoes gender-reassignment and pops up elsewhere as Frank

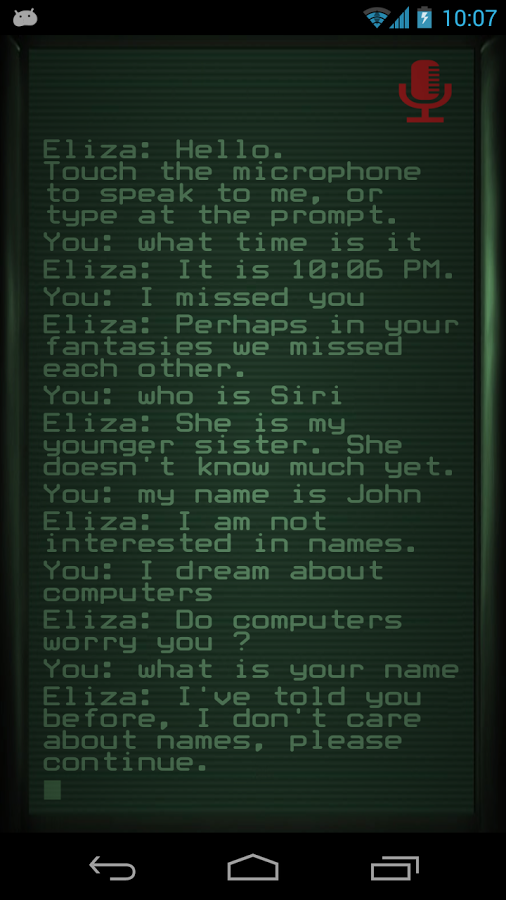

ELIZA ON SIRI

ELIZA BEING A STUPID PSYCHOTHERAPIST

ELIZA AND USERS' PRIVACY

Weizenbaum tells us that he was shocked by the experience of releasing ELIZA (also known as "Doctor") to the nontechnical staff at the MIT AI Lab. Secretaries and nontechnical administrative staff thought the machine was a "real" therapist and spent hours revealing their personal problems to the program. When Weizenbaum informed his secretary that he, of course, had access to the logs of all the conversations, she reacted with outrage at this invasion of her privacy. This and similar incidents troubled Weizenbaum deeply: such a simple program could so easily deceive a naive user into revealing personal information.

What Weizenbaum found especially revolting was that the Doctor's patients actually believed the robot understood their problems. They believed the robot therapist could help them in a constructive way. Obviously ELIZA touched something deep in the human experience, but not what its author intended. Though ELIZA held all their secrets, she had no capability to misuse them.

WEIZENBAUM'S ALARM AND HIS LATER LIFE

Weizenbaum perceived his own program as a threat. This is a rare experience in the history of computer science. Nowadays it is hard to imagine anyone coming up with an original idea for a software program and saying, "no, this program is a dangerous genie and needs to be put back into the bottle." His first reaction was to shut down the early ELIZA program. His second reaction was to write a book about the whole experience, eventually published in 1976 as Computer Power and Human Reason.

Most contemporary researchers did not need much convincing that ELIZA was at best a gimmick, at worst a hoax, and in any case not a "serious" artificial intelligence project. The irony of Joseph Weizenbaum and Computer Power and Human Reason is that, by failing to promote his own technology, indeed by encouraging his own critics, he successfully blocked further investigation into what would prove to be one of the most promising and persistently interesting demonstrations to emerge from the early AI Lab.


You can try it out yourself here:

https://www.cyberpsych.org/eliza/