Code Optimization: Memory

Most programmers think that computing system is a processor which executes instructions and a memory that stores instructions and data for the processor. In this simple model, the memory is considered as a linear system of bytes and a processor can refer to any area in memory in constant time. Although this model is effective in most situations, it does not reflect how modern systems actually work.

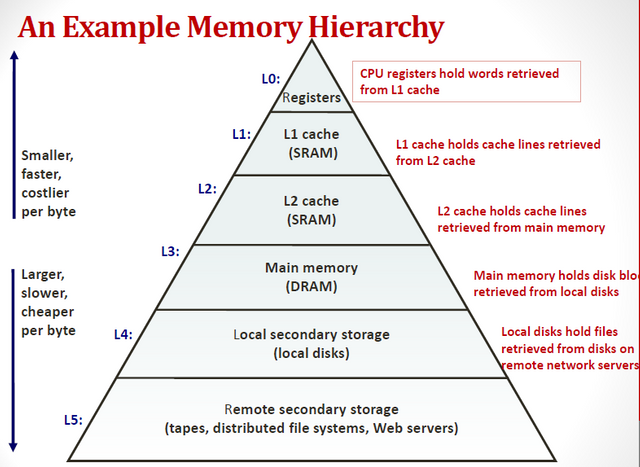

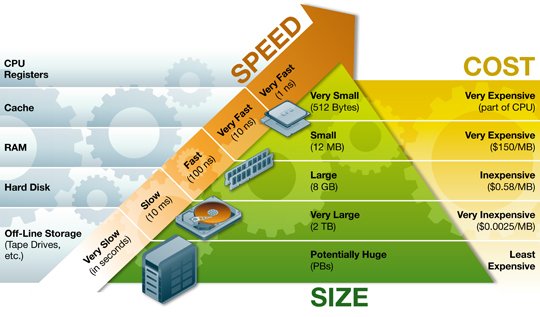

In fact, the memory system forms a hierarchy that consists of storage devices that have different capacity, cost and access time. Small fast cache, located close to the processor, is the buffer zone that stores a small piece of data located in a relatively slow memory. RAM serves as a buffer for slow local drives. Local disks are used to buffer data from remote machines connected through a network.

In this article, we will learn how the storage devices are organized into a hierarchy. We will especially focus on the cache memory, which serves as a buffer zone between the processor and RAM.

Hierarchy of memory

Modern storage systems form a hierarchy from fast small types of memory to large slow types. A specific level of the hierarchy is a cache for data located on a lower level. This means that it has got a copy of data from a lower level. When the processor wants to get some data, it looks for it on the first - the fastest high levels. And goes down to the lower, if it can’t find.

CPU registers are located on the top of the hierarchy. Access to them takes 0 cycles, but there are few of them. Next, there are few kilobytes of the cache memory of the first level, access to which will take approximately 4 cycles. Then there are a couple of hundred kilobytes of a slower cache of the second level. Then there are few megabytes of cache on the third level. It is much slower but still faster than RAM. Next, there is relatively slow RAM.

The RAM can be considered as a cache to the local disk. They are large, slow, and cheap. The computer downloads the files from the disk into RAM when it starts working with them. The gap in access time between RAM and disk is enormous. The drive is slower that RAM in thousands of times and is slower than the cache of the first level in million times. It is better to address several thousand times to the RAM than once to the disk. Such data structures as B-trees are based on this rule.

The local disk itself may be considered as a cache for data that are placed on remote servers. When you visit a website, your browser stores images from a Web page on a disk, so that when you visit them again you didn’t need to download them.

Fast memory is very expensive, and slow is very cheap. It is a great idea of architects of systems to combine the large size of the slow and small size of cheap memory. Thus, the system can run at a fast speed and be not expensive.

Locality

Locality - the basic concept of this article. As a rule, the programs with good locality are faster than programs with poor locality.

When the processor reads data from the memory, it copies it to its own cache, removing the other data from the cache. The program works better with fewer variables and often refers to them.

Moving of data between levels of hierarchy is carried out with certain size blocks. For example, Core i7 Haswell processor moves data between caches with the block of 64 bytes.

Therefore, if you read some bytes from memory, and then read the byte next to it, it will certainly be in the cache. This is a benefit of spatial locality. We need to work with data that is located in memory near each other.

The memory system is organized as a hierarchy of storage devices with small and fast devices at the top of the hierarchy and large and slow devices below. Programs with a good locality work with the data from processor caches. Programs with poor locality work with data from relatively slow RAM.

In particular, I can recommend the following techniques:

- Concentrate your attention on the inner loops. There are a lot of computations and memory accesses.

- Try to maximize the spatial locality reading from the memory sequentially in the order in which they are located.

- Try to maximize the temporal locality using data objects as often as possible after they have been read from memory.

Follow me, to learn more about popular science, math, and technologies

With Love,

Kate

This post has been ranked within the top 80 most undervalued posts in the second half of Nov 28. We estimate that this post is undervalued by $10.94 as compared to a scenario in which every voter had an equal say.

See the full rankings and details in The Daily Tribune: Nov 28 - Part II. You can also read about some of our methodology, data analysis and technical details in our initial post.

If you are the author and would prefer not to receive these comments, simply reply "Stop" to this comment.

thanks for sharing this...

This post has been linked to from another place on Steem.

Learn more about and upvote to support linkback bot v0.5. Flag this comment if you don't want the bot to continue posting linkbacks for your posts.

Built by @ontofractal

Congratulations @krishtopa! You have completed some achievement on Steemit and have been rewarded with new badge(s) :

Click on any badge to view your own Board of Honnor on SteemitBoard.

For more information about SteemitBoard, click here

If you no longer want to receive notifications, reply to this comment with the word

STOPBy upvoting this notification, you can help all Steemit users. Learn how here!

Thank you for upvoting my photo. If you like that one you will love my other ones. https://steemit.com/@readallaboutit

почему вы решили уйти из Стима?

Why did you decide to leave Steemit platform?

Я не уходила) Но совсем не было времени. Извиняюсь за поздний ответ. Unfortunately, it was hard year, I was trying to do too many things at the same time.

Надеюсь опыт пошел на пользу и вы нашли что-то свое ?

Да, много чего своего)