What is behind the Steem bandwidth issues...

The recent bandwidth issues inspired me to start my own debugging. I'm an ops guy, and when I face an issue I need to understand it and find the root cause.

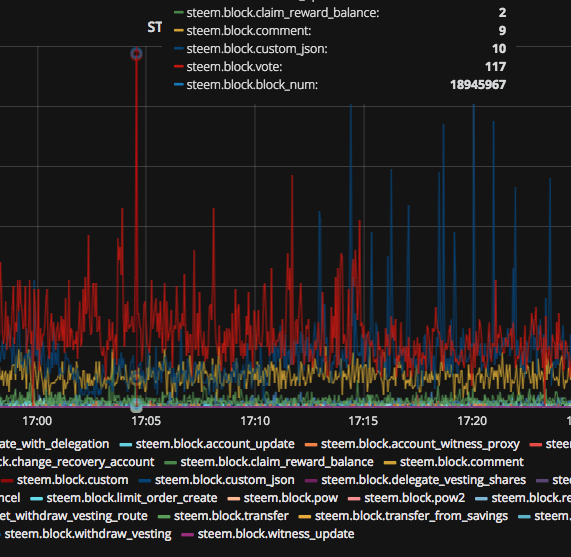

The Steem blockchain provides a lot of raw data, and we can easily get the details of any block we want. But we are people: we can't read and process millions of lines of text on the fly... we're much better at reading charts ;-)

I've built a system which gathers data from the Steem blockchain and generates dynamic charts for transaction and bandwidth details. The system is built on open source software such as Grafana and Graphite, plus a few Python scripts I wrote for parsing and collecting the data.
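The collection scripts themselves are not shown in this post, so the following is only a minimal sketch of how a parsed value could be pushed into Graphite. It uses Carbon's plaintext protocol (one `path value timestamp` line per metric, port 2003 by default). The metric name `steem.block.size`, the host, and both helper names are assumptions made for illustration.

```python
import socket
import time


def graphite_line(path, value, timestamp=None):
    # Carbon's plaintext protocol expects "<metric path> <value> <unix timestamp>"
    # terminated by a newline.
    if timestamp is None:
        timestamp = int(time.time())
    return f"{path} {value} {int(timestamp)}\n"


def send_metrics(lines, host="localhost", port=2003):
    # host and port are assumptions; 2003 is Carbon's default plaintext port.
    payload = "".join(lines).encode("ascii")
    with socket.create_connection((host, port), timeout=5) as sock:
        sock.sendall(payload)


# Hypothetical metric name, formatted but not sent anywhere.
line = graphite_line("steem.block.size", 16384, timestamp=1516000000)
print(line, end="")
```

Calling `send_metrics([line])` would deliver the metric to a Carbon listener, after which Grafana can chart it like any other Graphite series.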

Bandwidth

The Steem blockchain's bandwidth depends on three parameters:

- current_reserve_ratio

- maximum_block_size

- number_of_blocks_per_week

and it is calculated with the formula:

max_bandwidth = number_of_blocks_per_week * maximum_block_size * current_reserve_ratio

- number_of_blocks_per_week is constant: Steem generates 20 blocks per minute * 60 minutes * 24 hours * 7 days = 201600 blocks

- maximum_block_size is set by the top 20 witnesses and is currently 65536 bytes

- current_reserve_ratio is a kind of anti-spam parameter whose value decreases when the average size of new blocks is greater than 25% of maximum_block_size (65536 * 0.25 = 16384).
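To make the formula concrete, here is a small sketch that plugs in the constants above. The function name and the example `current_reserve_ratio` value of 200 are assumptions for illustration; the real value changes over time and has to be read from the chain.

```python
BLOCKS_PER_MINUTE = 20
NUMBER_OF_BLOCKS_PER_WEEK = BLOCKS_PER_MINUTE * 60 * 24 * 7  # 201600
MAXIMUM_BLOCK_SIZE = 65536  # bytes, set by the witnesses


def max_bandwidth(current_reserve_ratio, maximum_block_size=MAXIMUM_BLOCK_SIZE):
    # max_bandwidth = number_of_blocks_per_week * maximum_block_size * current_reserve_ratio
    return NUMBER_OF_BLOCKS_PER_WEEK * maximum_block_size * current_reserve_ratio


# Hypothetical reserve ratio, only to show how the result scales.
example_ratio = 200
print(max_bandwidth(example_ratio))
print(max_bandwidth(example_ratio // 2))  # halving the ratio halves the bandwidth
```

Because the other two factors are fixed, the available bandwidth moves in direct proportion to `current_reserve_ratio`.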

Every transaction generates data

- more transactions = more data,

- more data = bigger blocks,

- bigger blocks = lower current_reserve_ratio,

- lower current_reserve_ratio = lower bandwidth,

- lower bandwidth = MOAR! issues...
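The chain of cause and effect above can be sketched as a toy model. Only the 25% threshold comes from this post. The size of each adjustment (halving under load, +1 otherwise) and the upper bound of 20000 are assumptions chosen for illustration, not a statement of the exact on-chain rule.

```python
def next_reserve_ratio(ratio, average_block_size,
                       maximum_block_size=65536, max_ratio=20000):
    # Threshold from the post: 25% of the maximum block size (16384 bytes).
    threshold = maximum_block_size // 4
    if average_block_size > threshold:
        # Assumed step: cut the ratio sharply while blocks are too big.
        return max(1, ratio // 2)
    # Assumed step: recover slowly while blocks stay small.
    return min(max_ratio, ratio + 1)


ratio = 200  # hypothetical starting value
for average_block_size in (12000, 17000, 18000, 15000):
    ratio = next_reserve_ratio(ratio, average_block_size)
    print(average_block_size, ratio)
```

Even in this simplified form, two busy periods are enough to cut the ratio, and therefore the bandwidth, to about a quarter, while recovery takes many quiet steps.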

Let's take a look at the charts

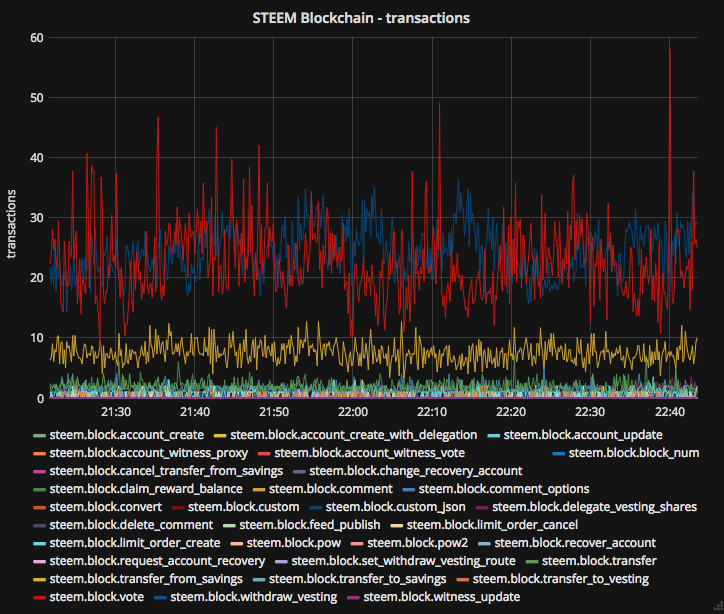

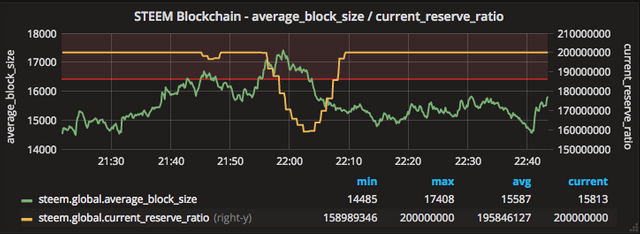

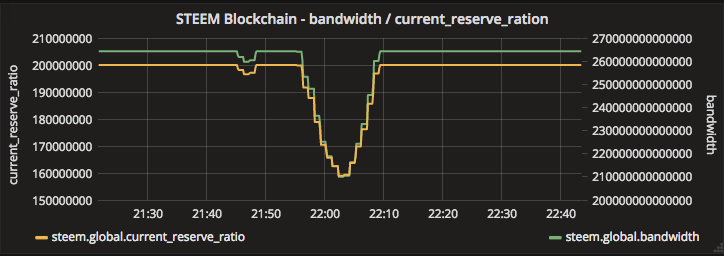

This is a snapshot of the data I took today. The charts show how many transactions are processed, the current_reserve_ratio, the average block size, and how the Steem blockchain automatically reduces the bandwidth when average_block_size hits the limit.

We can see that the most popular transactions are:

- custom_json (blue) - when we follow/unfollow somebody

- votes (red) - when we upvote a post or comment

- comment (orange) - when we add a comment
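A breakdown like this can be produced by tallying operation types per block. The sketch below assumes a block is already available as a Python dict in which each transaction carries an `operations` list of `[type, payload]` pairs. That layout, the function name, and the sample data are assumptions for illustration.

```python
from collections import Counter


def count_operations(block):
    # Tally how many operations of each type a single block contains.
    counts = Counter()
    for transaction in block.get("transactions", []):
        for operation in transaction.get("operations", []):
            op_type = operation[0]  # assumed layout: [type, payload]
            counts[op_type] += 1
    return counts


# Hypothetical block, trimmed to the fields used here.
sample_block = {
    "transactions": [
        {"operations": [["vote", {"voter": "alice"}]]},
        {"operations": [["custom_json", {"id": "follow"}],
                        ["comment", {"author": "bob"}]]},
        {"operations": [["vote", {"voter": "carol"}]]},
    ]
}
print(count_operations(sample_block).most_common())
```

Each per-type count can then be stored as its own time series, which is what makes a stacked chart per operation type possible.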

The average_block_size hits the limit (the red horizontal line at 25% of maximum_block_size = 16 kB), after which the current_reserve_ratio value is decreased.

And finally the bandwidth is reduced as well. The lower the value of current_reserve_ratio, the lower the available blockchain bandwidth.

Spikes

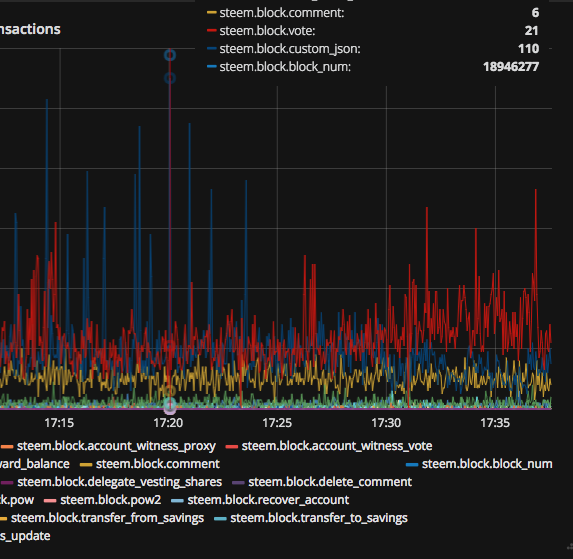

From time to time we see spikes caused by operations such as votes, custom_json, and delegate.

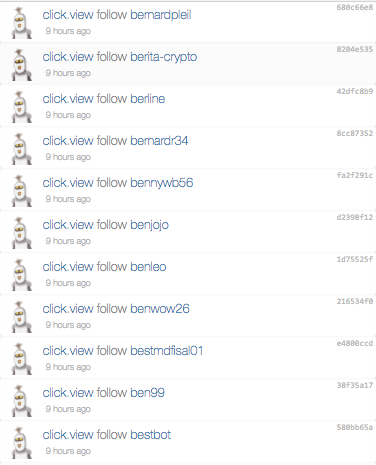

custom_json

More than 100 custom_json operations in a single block, 18946277

Ok... it seems like the click.view user bot is looking for new friends...

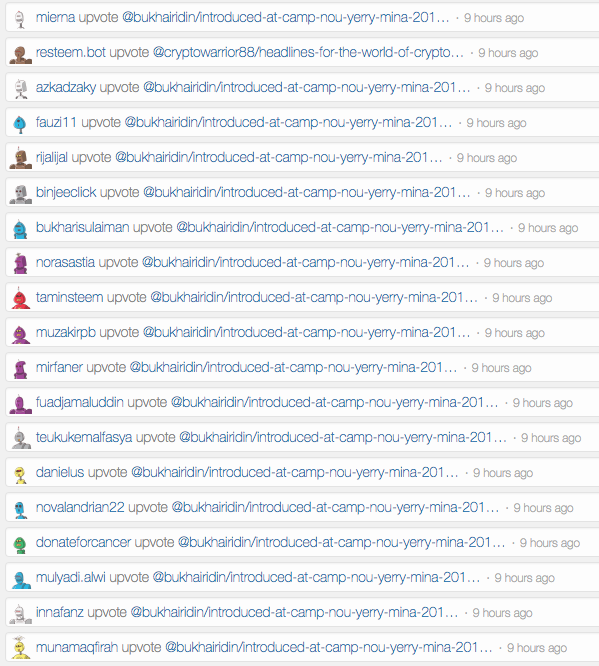

Votes

117 votes in a single block, 18945967

Dozens of minions upvoting the same post at the same time... probably voting bots.
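Spikes like these can also be flagged automatically instead of being spotted on a chart. The sketch below assumes per-block operation counts have already been collected into a dict keyed by block number. The function name, the threshold of 100, and every count except the 117 votes are assumptions for illustration.

```python
def find_spikes(per_block_counts, threshold=100):
    # Return (block_number, operation_type, count) for every operation type
    # that appears more than `threshold` times in one block.
    spikes = []
    for block_number, counts in sorted(per_block_counts.items()):
        for op_type, count in sorted(counts.items()):
            if count > threshold:
                spikes.append((block_number, op_type, count))
    return spikes


# Block numbers and the 117 votes are from the post; other counts are made up.
per_block_counts = {
    18945967: {"vote": 117, "comment": 3},
    18946277: {"custom_json": 101, "vote": 12},
    18946278: {"vote": 9, "comment": 2},
}
for spike in find_spikes(per_block_counts):
    print(spike)
```

Looking at who signed the operations in a flagged block is then the quickest way to tell a bot from an unusually busy moment.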

My conclusion

In the next few weeks/months something needs to be improved to make enough capacity for all the new Steemians. It could be the algorithm itself or the maximum_block_size parameter set by the witnesses. We're hitting a limit which is set to just 25% of the maximum block size... :)

blockchain.steem.pl

If you want to track the current Steem blockchain transactions in real time, I've made a public page with the chart for you. Enjoy! ;-)

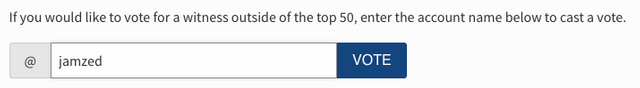

Please vote for me as witness.

If you think I'll be a good witness, please

- go to https://steemit.com/~witnesses

- scroll to the bottom of the page

- enter my name jamzed next to the @ symbol and click the VOTE button

Thank you!

Probably some vote bot went haywire and started spamming the chain with an inordinate amount of votes.

Yup, I wanted to check whether the bandwidth issues are caused by abusers or whether some algorithm tuning on the blockchain side is required.

I have a problem with these bandwidth issues too. If you are going to fix it, that would be awesome. Thank you. :)

Which is being handled in Hardfork 20. The abuse of blockchain through malicious voting will be stopped.

Not to complain about a gift, but can you move the chart down a few inches?

I loved it once I figured out how to select what I wanted to see.

The legend should be more visible now ;-)

That works too! Thanks a bunch!

You’re welcome ;-)

Thank you, my plan is to put more charts below, (btw, you can zoom out by clicking ctrl-z). I will move this chart down a bit ;-) please refresh the page in 10 minutes ;-)

As we thought eh?

Longer term if the usage continues to grow there will certainly need to be an increase to the block size. The other thing that is needed is more visibility of bandwidth usage and limits so people can be more aware of them and not get cut off so frequently or for such long periods of time. When the reserve ratio is reduced, the accounts which are cut off first are those which are using the highest percentage of their maximum allowance (which can be increased by powering up for SP). Each user has to make a bit of a tradeoff between higher usage during low-usage periods, being cut off during high-usage periods, and powering up more SP. Unfortunately this tradeoff is difficult to make now in practice because bandwidth usage is not even visible at all in the UI.

Thank you for your comment, I completely agree with you. New users don't know how the platform works, and it is the UI's responsibility to help them understand the issue and suggest a solution.

This probably should be an advanced option though. Surely, if regular users will have to take even more parameters into account in the future, this will not help us attract normal, nongeek, population. It would greatly put me off as well.

Really great post! I just wanted to point out one error:

This should say “20 blocks per minute”. The number of blocks per week is correct so it seems to be just a typo rather than a math error.

Hey, thank you for catching that, it's a typo, updated. ;-)

It would be quite nice to be able to select multiple things to track at once, which may be possible but was not immediately obvious to me how to do.

Very keen, though. Nicely done.

Hey, thank you :) This is possible, you can choose the metrics you want to track under the chart. If you want to select several of them, hold the CTRL key while clicking the metric names.

Ah, ctrl-click. Makes perfect sense; I'm just not used to thinking of using that in web interfaces. Excellent.

Last one month I faced issues a lot regarding bandwidth. Hope it will reduce by the reducing price of steem.

Hm, conclusion - bots generate traffic spikes that affect normal users :/

Great work!

So this is how I see it, @steemit holds the largest stake which means he has the largest bandwidth and since there are high volumes of transactions inside issues like these occur. There is also a chain of effect of this issue, like anyone who has been delegated by @steemit, and then lost the privilege to do things in steemit i. e upvoting, commenting, posting etc. That is why also @steemit is undelegating his sp to newbies before but what about those who havent got enough sp?

Correct me if i am wrong @timcliff & jamzed.

Sort of. Part of the purpose of the reserve ratio is to account for unused and underused stake like the steemit account (and in practice most other large accounts). Since those accounts are not using their full share of bandwidth, the reserve ratio grows (or stays) higher, and that unused portion becomes available to everyone else via a (temporarily) increased allowance.

As I understand it, the account is supposed to be undelegating from accounts which have been misbehaving (in this case many accounts controlled by the same person/group and mostly spam self voting). To the extent those accounts are cut off from the delegation and more limited in bandwidth, more bandwidth should then be left for everyone else.

Wait a minute, it's not just me?! I thought I was just doing something wrong, using up all my bandwidth in the mornings and then having tons of bandwidth in the afternoon, with morning bandwidth in the kilobytes and afternoon in the megabytes. I thought it was because I was new and needed more steem power.

The new member issue shouldn't last too long, but it does have something to do with having limited bandwidth, though with a 41 rep those issues should have cleared up by now.

Cg

it's just in the mornings, its happening right now

It's not just you ;-) Your bandwidth depends on the maximum blockchain bandwidth and VESTS (SP) you hold. If you see this issue very often, the solution is to get more SP.

its only in the mornings

Woo, you can tell he's an Ops guy cuz he pulls out Grafana for his go-to. Will you be releasing the scripts used to get the data?