Make Your Steem Server Last Longer With Memory Compression

On 13 January 2018, the RPC node at steemd.privex.io went down because the Steem daemon (steemd) exhausted the 256GB of RAM on that node. The Graphene in-memory database just kept getting bigger.

To get their RPC node back online, @someguy123 wanted to upgrade to a server with 512GB of RAM, but their provider told them that it would take 10 business days, and that's not including the time it would take to get the node set up!

My team (@dutch and I) suggested a fix to @someguy123: RAM compression with zram.

The fix worked, and despite running out of 256GB of RAM, the @privex RPC node came back up after less than 34 hours of downtime.

Unfortunately, the only way to prevent this ever-inflating memory usage from becoming unmaintainable would be to overhaul how Graphene accesses what it needs from the blockchain. There are already mitigations in place, like the LOW_MEMORY_NODE compile-time option or disabling unneeded plugins, but as the blockchain grows, so will memory usage.

I've got some good news, though:

- zram will delay the inevitable. Our testing so far has shown that the life of a Steem witness node with a fixed amount of RAM can be extended by months (or even longer; it's hard to gauge for sure) with zram.

- It's easy to set up zram.

- If you're running Ubuntu 14.04 or newer, it's even easier to set up zram.

- If you're on Debian 7 or newer, you can also use the Ubuntu instructions with some extra steps.

We are now recommending the use of zram as a new best practice for all new and existing Steem nodes.

Witness @gridcoin.science Uses zram

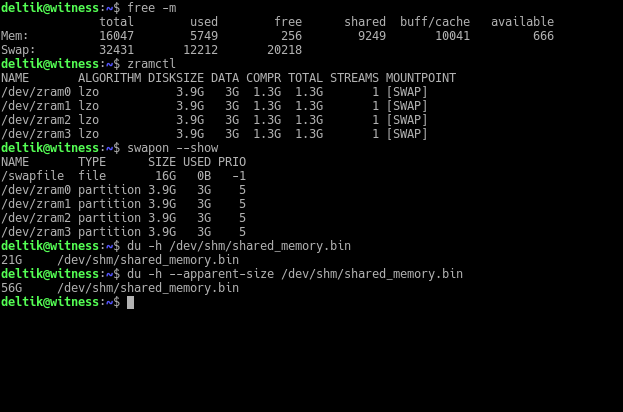

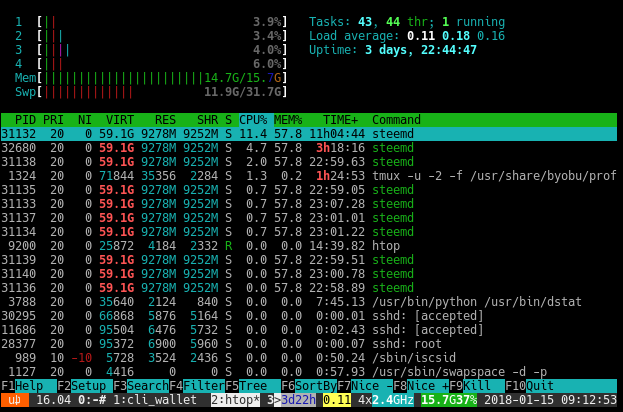

Our witness @gridcoin.science, intentionally configured with just 16GiB of RAM, is currently making use of zram:

Above, you can see that the steemd memory-mapped file is 21GiB large, but zram has compressed some of it:

Thanks to zram, we're able to run a witness below the commonly accepted minimum RAM requirement.
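One way to put a number on that compression is to divide the DATA column (uncompressed bytes stored) by the COMPR column (compressed bytes) reported by `zramctl`. The following is a minimal sketch, not the tooling used on @gridcoin.science. It assumes a util-linux `zramctl` that supports `--bytes`, `--noheadings` and `--output`, and the function name `zram_ratio` is made up for illustration. It reads from standard input so that it can be tried on sample numbers first:

```shell
#!/bin/sh
# zram_ratio: read "DATA COMPR" pairs (in bytes) on stdin, one zram device
# per line, and print the overall compression ratio to two decimals.
# Hypothetical helper for illustration; not part of zram-config or util-linux.
zram_ratio() {
    awk '
        { data += $1; compr += $2 }
        END {
            if (compr > 0) printf "%.2f\n", data / compr
            else print "n/a"   # nothing has been swapped out yet
        }
    '
}

# Sample numbers: 10 GiB of pages stored in 4 GiB of compressed space.
printf '%s\n' '5368709120 2147483648' '5368709120 2147483648' | zram_ratio
# prints 2.50

# On a live system (assumed flags; check `zramctl --help` on your version):
#   zramctl --bytes --noheadings --output DATA,COMPR | zram_ratio
```

Summing across devices before dividing gives one overall ratio, which is more useful than per-device ratios when zram-config has split the swap across several devices.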

When either the CPU struggles to keep up with zram swapping or when zram swap space runs low, we plan to fail over to the backup witness briefly, increase the RAM of the primary witness, catch up the blockchain, and resume operations from the primary witness.

Currently, there's barely any CPU load, so we expect that zram will last us a while.
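The "zram swap space runs low" condition can be watched with a few lines of shell. This is only a sketch under stated assumptions: the helper names are invented, the 80% threshold is an arbitrary example rather than a tested recommendation, and it covers swap usage only, not CPU load. It parses text in the `/proc/swaps` layout from standard input:

```shell
#!/bin/sh
# zram_swap_used_pct: read /proc/swaps-style text on stdin and print the
# percentage of zram swap in use (integer, rounded down).
# Hypothetical helper; non-zram swap (e.g. /swapfile) is ignored.
zram_swap_used_pct() {
    awk '
        $1 ~ /^\/dev\/zram/ { size += $3; used += $4 }
        END { if (size > 0) print int(used * 100 / size); else print 0 }
    '
}

# check_zram_swap THRESHOLD: warn when usage reaches THRESHOLD percent.
check_zram_swap() {
    pct=$(zram_swap_used_pct)
    if [ "$pct" -ge "$1" ]; then
        echo "WARNING: zram swap ${pct}% used; consider failing over"
    else
        echo "OK: zram swap ${pct}% used"
    fi
}

# Sample input in the /proc/swaps layout (sizes are in KiB).
check_zram_swap 80 <<'EOF'
Filename     Type       Size     Used     Priority
/dev/zram0   partition  2097148  1887436  5
/dev/zram1   partition  2097148  1887436  5
EOF

# On a live system:  check_zram_swap 80 < /proc/swaps
```

Run from cron or a monitoring agent, a check like this gives some warning before the failover described above becomes urgent.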

Steem Daemon with zram on Ubuntu or Debian

Ubuntu makes it dead simple to set up zram.

Debian 7 only: You need to enable the backports repository in `/etc/apt/sources.list`:

    deb http://ftp.debian.org/debian wheezy-backports main

All Debian releases: Manually download and install the `zram-config` package version 0.5 from Ubuntu:

    sudo apt update
    wget 'http://archive.ubuntu.com/ubuntu/pool/universe/z/zram-config/zram-config_0.5_all.deb'
    sudo dpkg -i zram-config_0.5_all.deb
    sudo apt install -f
    rm -v zram-config_0.5_all.deb

Then go directly to step #3.

1. If you haven't already, enable the "universe" repository:

       sudo add-apt-repository "deb http://archive.ubuntu.com/ubuntu $(lsb_release -sc) universe"

2. Install the `zram-config` package:

       sudo apt update
       sudo apt install zram-config

3. By default, `zram-config` sets up zram swap half the size of your RAM, but our testing revealed that the `steemd` in-memory database has a zram lzo compression ratio of greater than 2×, which means you can comfortably double the default zram swap size.

   Set the calculated zram swap capacity to be equal to that of RAM:

   Ubuntu 14.04 only:

       sudo sed -i 's|/ 2 /|/ 1 /|g' /etc/init/zram-config.conf

   zram-config 0.5 (all other releases as of September 2018):

       sudo sed -i 's|/ 2 /|/ 1 /|g' /usr/bin/init-zram-swapping

4. Start up `zram-config`:

   Ubuntu 16.04 and newer or Debian 8 and newer:

       sudo systemctl restart zram-config

   All releases:

       sudo service zram-config restart

   You should now see zram swap:

       $ swapon --show
       NAME       TYPE      SIZE USED PRIO
       /dev/zram0 partition   2G   0B    5
       /dev/zram1 partition   2G   0B    5
       /dev/zram2 partition   2G   0B    5
       /dev/zram3 partition   2G   0B    5
       /dev/zram4 partition   2G   0B    5
       /dev/zram5 partition   2G   0B    5
       /dev/zram6 partition   2G   0B    5
       /dev/zram7 partition   2G   0B    5
       $ zramctl
       NAME       ALGORITHM DISKSIZE DATA COMPR TOTAL STREAMS MOUNTPOINT
       /dev/zram0 lzo             2G   4K   81B   12K       1 [SWAP]
       /dev/zram1 lzo             2G   4K   81B   12K       1 [SWAP]
       /dev/zram2 lzo             2G   4K   81B   12K       1 [SWAP]
       /dev/zram3 lzo             2G   4K   81B   12K       1 [SWAP]
       /dev/zram4 lzo             2G   4K   81B   12K       1 [SWAP]
       /dev/zram5 lzo             2G   4K   81B   12K       1 [SWAP]
       /dev/zram6 lzo             2G   4K   81B   12K       1 [SWAP]
       /dev/zram7 lzo             2G   4K   81B   12K       1 [SWAP]

5. Optional, but highly recommended:

   If you do not already have regular disk swap (either a swap file or a swap partition), create one and set it to enable on boot:

   This sets up a 4GiB swap file (`bs=1M count=4096`):

       sudo dd if=/dev/zero of=/swapfile bs=1M count=4096
       sudo mkswap /swapfile
       echo "/swapfile swap swap defaults 0 0" | sudo tee -a /etc/fstab

   Extra swap will keep `steemd` running longer, even if you run out of zram swap. You are at elevated risk of missing blocks when using disk swap because disk swap is much slower than zram swap.

6. If you are not already storing the `steemd` memory-mapped file in a tmpfs (ramdisk) mount:

   - In `witness_node_data_dir/config.ini`, set `shared-file-dir` to a tmpfs mount (`/dev/shm` by default):

         shared-file-dir = /dev/shm

   - In the same `config.ini`, set `shared-file-size` to something sane. In January 2018, the default for witnesses is `54G` (54GiB). Anything below `22G` (22GiB) in January 2018 will fail for witnesses because the `steemd` in-memory database is about to reach that size.

     We suggest that you use double your RAM size plus however much disk swap you have minus 1GiB for other things that may be running in RAM. If you have 16GiB of RAM and 4GiB of disk swap, set `shared-file-size = 35G` (16GiB × 2 + 4GiB - 1GiB = 35GiB).

     Regardless of how big you set the file size, `steemd` will only use as much space as it needs.

   - Remount the `/dev/shm` tmpfs so that it can hold the entire `shared_memory.bin`.

     If your `shared-file-size = 35G`, consider setting the tmpfs file size to `36352M` ((35GiB + 0.5GiB buffer) * 1024 = 36352MiB):

         mount -o remount,size=36352M /dev/shm

   - If you have the files `shared_memory.bin` and `shared_memory.meta` already, copy them over to `/dev/shm` so that you don't have to replay the blockchain.

7. Start the Steem daemon:

   - `steemd` if you copied the files in the previous step
   - `steemd --replay-blockchain` if you need to replay the blockchain
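The sizing arithmetic from the steps above (double the RAM, plus disk swap, minus 1GiB, then a 0.5GiB buffer for the tmpfs) can be sketched as a small helper. The function name `suggest_sizes` is hypothetical, and it only handles whole-GiB inputs; treat it as a worked example of the rule of thumb rather than a tool that ships with `steemd`:

```shell
#!/bin/sh
# suggest_sizes RAM_GIB DISK_SWAP_GIB
# Applies the rule of thumb from the steps above:
#   shared-file-size = 2 x RAM + disk swap - 1 GiB
#   tmpfs size       = (shared-file-size + 0.5 GiB) expressed in MiB
# Hypothetical helper; whole GiB inputs only.
suggest_sizes() {
    ram_gib=$1
    swap_gib=$2
    shared_gib=$(( ram_gib * 2 + swap_gib - 1 ))
    tmpfs_mib=$(( shared_gib * 1024 + 512 ))
    echo "shared-file-size = ${shared_gib}G"
    echo "mount -o remount,size=${tmpfs_mib}M /dev/shm"
}

# The worked example from the text: 16 GiB of RAM and 4 GiB of disk swap.
suggest_sizes 16 4
# prints:
#   shared-file-size = 35G
#   mount -o remount,size=36352M /dev/shm
```

The first output line is meant for `config.ini` and the second is the remount command, which needs root privileges to run.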

Steem Daemon with zram on Other Linux Distros

These instructions should be pretty portable across Linux distros as long as you install the util-linux package because it contains /sbin/zramctl.

- Debian/Ubuntu: `sudo apt install util-linux`

- Fedora: `sudo dnf install util-linux`

- RHEL/CentOS: `sudo yum install util-linux`

- Arch Linux: `sudo pacman -S util-linux`

- Gentoo: `sudo emerge util-linux`

1. If `zram` doesn't show up in `lsmod | grep zram`, run `sudo modprobe zram`.

   If you get a message that starts with `modprobe: FATAL: Module zram not found`, then you'll need to boot up with a kernel that has `zram` (standard with Linux 3.14 and newer).

2. Run `zramctl -f` to confirm that `/dev/zram0` is the first zram device available.

   If it's not `/dev/zram0`, that means you already started up zram somewhere else. This guide recommends that zram be used exclusively for `steemd`'s memory-mapped file and assumes that `/dev/zram0` is the device you choose to use.

3. Determine how much space to allocate to the zram device. Just use however much RAM you have:

       $ totalmem=$(LC_ALL=C free -b | grep -e "^Mem:" | sed -e 's/^Mem: *//' -e 's/ *.*//')
       $ echo "$totalmem"
       16742518784

4. Create a zram device with the size you determined in the previous step:

       $ sudo zramctl -f -s "$totalmem" /dev/zram0

5. Format the new zram device as swap:

       $ sudo mkswap /dev/zram0
       Setting up swapspace version 1, size = 15.6 GiB (16742514688 bytes)
       no label, UUID=632f5bc3-d5cf-4983-a5ba-bcbcfe9dd238

6. Mount the new swap device:

       $ sudo swapon /dev/zram0

7. Go to step #5 of the Ubuntu/Debian instructions above.
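The manual steps above can be collected into a dry-run sketch that only prints the commands so they can be reviewed before anything touches the system. Everything named here is an assumption for illustration: the helper names are invented, `MemTotal` is read from `/proc/meminfo` instead of `free -b`, and the device is named explicitly without `-f`, which is a choice made for this sketch and not the article's exact command.

```shell
#!/bin/sh
# mem_total_bytes: read /proc/meminfo-style text on stdin and print MemTotal
# in bytes (the kernel reports it in kB, meaning KiB).
mem_total_bytes() {
    awk '$1 == "MemTotal:" { printf "%.0f\n", $2 * 1024 }'
}

# plan_zram_swap BYTES [DEVICE]: print the commands, without running them.
# Hypothetical helper for illustration only.
plan_zram_swap() {
    bytes=$1
    dev=${2:-/dev/zram0}
    echo "modprobe zram"
    echo "zramctl --size $bytes $dev"
    echo "mkswap $dev"
    echo "swapon $dev"
}

# Sample: 16350116 kB is the article's 16742518784 bytes divided by 1024.
total=$(echo 'MemTotal:       16350116 kB' | mem_total_bytes)
plan_zram_swap "$total"

# On a live system, review the output first, then run it as root, e.g.:
#   plan_zram_swap "$(mem_total_bytes < /proc/meminfo)" | sudo sh -x
```

Printing the plan instead of executing it keeps the sketch safe to try on any machine, including one where zram is already in use.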

Conclusion

I want to contribute to alleviating the operational growing pains of the Steemit platform. Growing RAM usage, which increases costs of running Steem nodes, continues to be a nagging problem. Collectively, that's a lot of RAM. I hope this zram tutorial helps to squeeze out more value from the hardware available while being transparent to the software.

Perhaps if using zram becomes standard operating practice, we can have more reliable witnesses to support the long-term endurance of Steem (and by extension, Graphene).

Reducing RAM usage isn't all, though. The witness @gridcoin.science is at the forefront of all the improvements I have worked on for witness operations. For an overview of what @dutch and I have already done differently with @gridcoin.science, see our announcement post.

To support this witness, visit https://steemit.com/~witnesses and add gridcoin.science to the box at the bottom of the page, click vote, and authorize using your Active Key.

We want to continue innovating and sharing our findings. Please let me or @dutch know if this tutorial was helpful and what other topics you'd like us to explore.

I have been looking around. Is there a wiki or anything with this kind of information being curated? I feel like I'm missing something obvious, but I'm having a hard time finding consolidated information on running witness nodes.

Using zram is new advice pioneered on the witness @gridcoin.science. We hope this article is the first step towards widespread acceptance of memory compression as standard operating practice, as the benefits seem to outweigh the drawbacks, if there are even any drawbacks at all!

As for consolidated information, there's no authoritative guide or "right way" to run a witness. The best sources on basic witness operations have been guides that get written and rewritten periodically.

We built @gridcoin.science by gleaning information from all over the place, but there are some good guides out there that are still mostly relevant today, like @jerrybanfield's, @krnel's, and @klye's.

I really appreciate that. Hopefully, we will see that come about. I would be happy to help, if there was a good spot for one.

Deltik/Dutch,

This is great news, especially for those Witnesses under the Top 50, including myself, to cut operating costs and continue to serve the community. I will definitely try this approach and report back.

Where you been for the last 6 months? I've spent too much money on servers :)

Cheers mate,

@yehey

Hey @yehey!

This idea was actually @deltik's genius coming through. Hope it works out for you, let us know!

Dutch

I use zram on my Gridcoin and SolarCoin full nodes. They run on Raspberry Pis, so nowhere near the RAM requirements of Graphene, but it's the same fundamental problem.

I was just thinking we could do the same for Gridcoin, especially on the Pis which tend to struggle to keep the wallet running.

If you run headless then it's not really an issue so far, but I imagine trying to use Qt on a Pi you would be running up against the RAM limit.

Of course the other option is to buy an SBC with more RAM, models are coming out with 4GB now...

Thank you for this. Do I need to take down the witness (stop the docker) when I set up the zram?

No. But if you are already using disk swap and some data is already on disk swap, then you might want to set up zram and replay to get less data on disk.

hey - I think there is a small mistake in the line where the swap file is added to /etc/fstab:

    echo "/swapfile swap swap defaults 0 0" | sudo tee /etc/fstab

This will overwrite the existing one. Please check.

Yes, that is a mistake. This is the correct command:

    echo "/swapfile swap swap defaults 0 0" | sudo tee -a /etc/fstab

Unfortunately, I can no longer edit the article, so hopefully readers see this comment thread before it's too late!

you could edit it now;)

The correction has been applied to the article.

Seriously awesome work on this post, thanks for putting it together. This inspired me to play around with zram and I had a LOT of trouble so I wanted to share my solution in case anyone else has issues.

It installed without issue on Ubuntu 16.04 but simply wouldn't start, responding with the less than helpful

    zram-config.service: Failed with result 'exit-code'.

To spare anyone the literal hours of troubleshooting I spent on this, the solution is pretty simple:

    apt-get -y install linux-image-generic

Run this first and then follow this post from the top.

For anyone wondering why installing `linux-image-generic` works, it's the dependency on the "linux-image-extra" for your kernel version. The `zram` driver is included in that dependency:

Without the package, the necessary driver might not be installed.

Yeah, I read that

It is a module of the mainline Linux kernel since 3.14

and so I checked:

And then I scratched my head for a bit until I realized it's not part of the base kernel package.

Thanks for sharing news

Thanks for the information.

Very nice post

Wow! I will try running a seed node on a 16GB RAM machine. This is very cool.