A RUNNING FEED OF PAST PROJECTS: a new feature for the open-source project The Amanuensis: Automated Songwriting and Recording

logo by @camiloferrua

Repository

https://github.com/to-the-sun/amanuensis

The Amanuensis is an automated songwriting and recording system aimed at ridding the process of anything left-brained, so one need never leave a creative, spontaneous and improvisational state of mind, from the inception of the song until its final master. The program will construct a cohesive song structure, using the best of what you give it, looping around you and growing in real-time as you play. All you have to do is jam and fully written songs will flow out behind you wherever you go.

If you're interested in trying it out, please get a hold of me! Playtesters wanted!

New Features

- What feature(s) did you add?

The ultimate concept behind The Amanuensis is to have a dynamic, customized and self-created soundtrack following you everywhere you go. One step towards that reality has been implemented with a running "feed" that starts up upon inactivity.

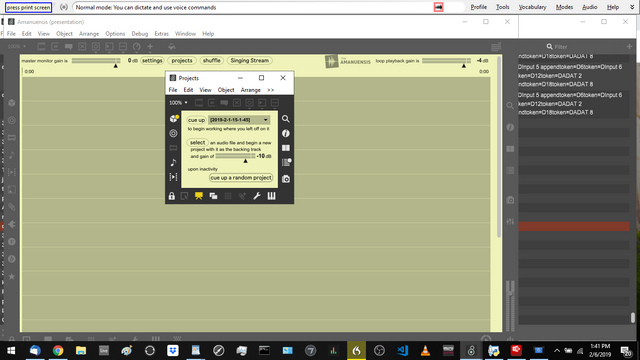

Obviously it is not a mobile app currently, but if you leave the program running in the background on your computer it will now cue up a continual stream of your past projects for you to listen to, much in the way a person might listen to any other music as they're working. You can then refine and manage your musical creations while doing anything else on the computer at the same time.

Using existing hotkeys, a quick BACKSPACE stroke will end and completely erase any song you hear that you don't like or that is flawed; END essentially functions as "skip to next" and HOME as "skip to beginning". If you hear something you really like, you can make a note of it, perhaps coming back to it later to "learn" it and turn it into a real song that can be played live, presented as something to work on at your next band practice, etc.

If you hear something that's good but you want to keep working on it via the free-flowing, open-ended strategy of The Amanuensis, you can do so easily at any time: just start tapping on a nearby MIDI keyboard or other controller. The Amanuensis doesn't need to be the program with focus; it just needs to receive MIDI for you to play with it. It's entirely possible to rig up custom sensors or buy a product that can turn your idle finger-taps into MIDI as well, meaning you can write songs without conscious effort the entire time you're working or doing whatever else on your computer.
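To make the "starts up upon inactivity, steps aside when you play" behavior concrete, here is a minimal sketch in Python rather than Max. It is not how The Amanuensis is actually patched; the class name `IdleTracker`, the timeout value, and the choice to treat "no MIDI received yet" as idle are all assumptions made for illustration.

```python
class IdleTracker:
    """Tracks incoming MIDI activity to decide whether the feed may play.

    Hypothetical helper: the name and the timeout are illustrative
    assumptions, not taken from The Amanuensis itself.
    """

    def __init__(self, timeout_s):
        self.timeout_s = timeout_s   # seconds of silence that count as inactivity
        self.last_midi_at = None     # timestamp of the most recent MIDI event

    def on_midi(self, now_s):
        """Call whenever any MIDI arrives, whether or not the app has focus."""
        self.last_midi_at = now_s

    def is_idle(self, now_s):
        """True once no MIDI has arrived for at least timeout_s seconds."""
        if self.last_midi_at is None:
            return True  # assumption: nothing played yet counts as idle
        return now_s - self.last_midi_at >= self.timeout_s


tracker = IdleTracker(timeout_s=30.0)
tracker.on_midi(now_s=10.0)             # the user taps a key
print(tracker.is_idle(now_s=20.0))      # still within the timeout window
print(tracker.is_idle(now_s=45.0))      # long enough without input
```

Timestamps are passed in explicitly so the logic can be checked without waiting on a real clock.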

- How did you implement it/them?

If you're not familiar, Max is a visual language, and textual representations like those shown for each commit on GitHub aren't particularly comprehensible to humans. You won't find any of the comments there either. Therefore, I will present the completed work using images instead. Read the comments in those to get an idea of how the code works; I'll keep my description here focused on the process of writing that code.

These are the primary commits involved:

A new option was created in the "projects" menu (see above screenshot) and the user's selection is conveyed to machine.maxpat through feed. There, it is determined whether or not all the requirements to start or stop the feed are satisfied.

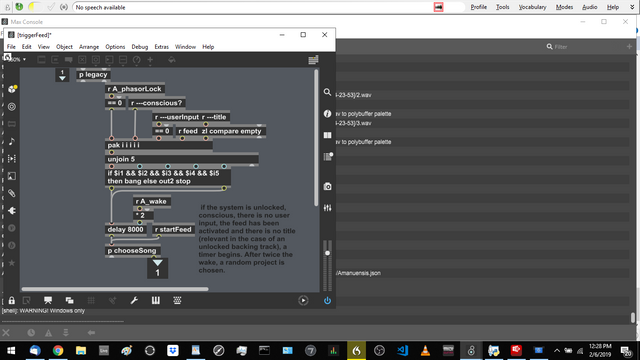

p triggerfeed in machine.maxpat
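For readers who don't read Max patches, the gating idea can be sketched in Python: the feed starts only when every requirement holds and stops as soon as any one of them fails. This is an illustration of the logic, not a translation of the patch. The class name `FeedGate` and the requirement names are placeholders, since the only requirements this post names are the menu option and the title.

```python
class FeedGate:
    """Starts the feed only while every requirement is satisfied.

    Hypothetical sketch: requirement names are placeholders supplied
    by the caller, not the actual conditions in machine.maxpat.
    """

    def __init__(self, requirement_names):
        # Every requirement starts out unsatisfied.
        self.state = {name: False for name in requirement_names}
        self.running = False

    def update(self, name, satisfied):
        """Record one requirement's new value and re-evaluate the gate.

        Returns "start" or "stop" when the feed should change state,
        or None when nothing needs to happen.
        """
        if name not in self.state:
            raise KeyError(name)
        self.state[name] = bool(satisfied)
        should_run = all(self.state.values())
        if should_run == self.running:
            return None
        self.running = should_run
        return "start" if should_run else "stop"


# Placeholder names; the real patch checks five requirements.
gate = FeedGate(["feed_option", "title", "req_3", "req_4", "req_5"])
for name in ["feed_option", "title", "req_3", "req_4"]:
    gate.update(name, True)          # not enough yet, returns None
print(gate.update("req_5", True))    # last requirement met
print(gate.update("title", False))   # any single failure stops the feed
```

The point of re-evaluating on every update is that the gate reacts no matter which requirement was the one to change.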

Previously, ---title could only be queried through a value object. It was realized that if the title was the only one of the five requirements to trigger the feed that changed, the change would not be actively conveyed to the if object in charge of managing the feed. Therefore, it was necessary to find every place in the code where v ---title was changed and also actively relay that information through a send/receive pair.
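The difference between a value that can only be queried and one that also announces its changes can be sketched outside of Max as a small observer pattern. This is a Python analogy under stated assumptions, not the patch itself: `ObservableValue` and its methods are hypothetical names, with `get` standing in for querying the value object and `subscribe`/`set` standing in for the added send/receive pair.

```python
class ObservableValue:
    """A stored value that also pushes every change to its listeners.

    Hypothetical analogy: get() is the pull-style query that existed
    before; subscribe() plus set() model the added active relay.
    """

    def __init__(self, initial=None):
        self._value = initial
        self._listeners = []

    def get(self):
        """Pull: returns the current value but tells nobody about changes."""
        return self._value

    def subscribe(self, callback):
        """Register a function to be called with each new value."""
        self._listeners.append(callback)

    def set(self, new_value):
        """Store the value and actively relay it to every listener."""
        self._value = new_value
        for callback in list(self._listeners):
            callback(new_value)


title = ObservableValue("")
received = []
title.subscribe(received.append)   # stands in for the feed's management logic
title.set("untitled jam")          # every place that writes the title also notifies
print(title.get(), received)
```

Without the `subscribe` side, anything depending on the title would only learn of a change the next time something else prompted it to look.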
