Machine learning series - part 4: How does a robot learn to ride a bike?

Reinforcement learning

Another semester is over and I would like to continue my abandoned machine learning series. This time I'll talk about reinforcement learning, the family of algorithms behind much of the cool AI news you've heard about lately, like DeepMind's success at Go or the recent AI wins at poker. So reinforcement learning can beat you at poker, at Go, or even at some Atari games (for example Pong), but it is also what researchers use to try to teach robots to walk and do other practical things, like carrying your things around or driving cars. So, how is it all (or at least some of it) done?

First let me tell you a story about a bike

Imagine you're a child (or even a grown-up, for that matter) and you're learning to ride a bike. How do you do it? And why do you do it? Maybe your mother or father promised you a distant reward: if you manage to ride to the end of the parking lot, you get an ice cream. And you like ice cream, so you really try to manage the task.

But how do you connect the exact movements of your legs on the bike with this delicious reward? If you're a human, that's quite easy. First, we're very good at figuring out cause and effect in our world, and second, our brains are amazingly well equipped for learning new movement tasks. So we can quite easily learn to walk, run, write, or even ride that bike. But imagine that you're a robot and none of this is really true for you. How do you do it then, or rather, how does machine learning do it?

Part of the answer is reinforcement learning. It explains how a machine learning agent can learn even when the reward is very distant, but it doesn't answer how to mimic the amazing movement abilities of humans (or animals).

To give you an idea of how difficult real-world tasks are for robots, here is an example of a robot using reinforcement learning to do a simple pancake flip.

OK, once again, what is this reinforcement learning?

It's a magic box: on one side you put in a task, on the other side you put in a reward for finishing the task, and in the middle there is an agent that learns the task. And now you know it. You might be thinking: "Wait, what? Isn't this an oversimplification?!" That's partly right, but it's actually not that far from the truth.

In the machine learning field there are three basic types of learning. The first one is supervised learning, where the task is usually quite simple (like "What's in the picture?" or "Where is the dog in the picture?") and the feedback, the correct answer, is given to the algorithm after each of its answers. The algorithm can therefore gradually improve its hypothesis. On the other side of the spectrum is unsupervised learning, where the algorithm gets no feedback at all and just tries to find recurring (generalizing) patterns in the input (images, text, videos). Reinforcement learning algorithms deal with problems that are somewhere in the middle: the agent does get feedback, but only as a reward that says how good the outcome was, not what the correct action would have been. Sometimes the agent gets the reward right after its action, and sometimes it takes many steps before the reward arrives.
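The delayed case is where learning from a distant reward comes in. One standard way to handle it (a common textbook choice, not necessarily what later posts in this series will use) is to credit each step with the discounted sum of all the rewards that follow it, G_t = r_{t+1} + γ·G_{t+1}. Here is a minimal Python sketch, assuming a hypothetical five-step ride where only the last step earns the ice cream and a discount factor γ = 0.9; the function name `discounted_returns` is my own.

```python
# Minimal sketch: crediting a delayed reward to earlier steps with the
# discounted return G_t = r_{t+1} + gamma * G_{t+1}.
# Assumptions (not from the post): a 5-step episode rewarded only at the
# end (the "ice cream") and a discount factor gamma = 0.9.

def discounted_returns(rewards, gamma=0.9):
    """Return G_t for every step t; rewards[t] is the reward received
    right after the action taken at step t."""
    returns = [0.0] * len(rewards)
    g = 0.0
    # walk backwards so each step adds its reward to the discounted future
    for t in reversed(range(len(rewards))):
        g = rewards[t] + gamma * g
        returns[t] = g
    return returns

rewards = [0, 0, 0, 0, 1]  # only reaching the end of the parking lot pays off
for t, g in enumerate(discounted_returns(rewards)):
    print(f"step {t}: return {g:.4f}")
```

Running it prints returns from 0.6561 at the first step up to 1.0000 at the last, so even the first pedal stroke gets some credit for the ice cream, just less than the final one.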

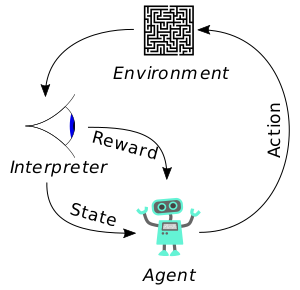

The basic idea of reinforcement learning is an interaction between an agent and an environment. The agent takes actions and receives rewards for them from the environment. The agent observes the environment and builds states from its observations; a state can be seen as an inner model of the world, the agent's belief about where it is in the world. The agent learns by building a policy from the rewards it received after its actions, and the policy then determines which action the agent selects in each state.

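In general, the basic agent-environment interaction is a cycle: the agent observes the current state, selects an action according to its policy, receives a reward and a new observation from the environment, and repeats.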
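The cycle described above can be sketched in a few lines of Python. This is a minimal sketch under assumptions of my own, not anything from the post: a hypothetical "parking lot" corridor environment with the ice cream reward at the far end, and tabular Q-learning (one classic RL algorithm, swapped in here for illustration) as the way the agent turns rewards into a policy. The names `step`, `choose_action` and `train`, and all parameter values, are illustrative.

```python
import random

# Hypothetical toy environment (not from the post): the parking lot is a
# corridor of N_CELLS cells. The agent starts in cell 0 and gets reward 1
# (the ice cream) only when it reaches the last cell; all other steps give 0.
# Actions: 0 = move left, 1 = move right.
N_CELLS = 6
ACTIONS = (0, 1)

def step(state, action):
    """Environment: apply an action, return (next_state, reward, done)."""
    next_state = max(0, min(N_CELLS - 1, state + (1 if action == 1 else -1)))
    done = next_state == N_CELLS - 1
    reward = 1.0 if done else 0.0
    return next_state, reward, done

def choose_action(q, state, epsilon, rng):
    """Policy: epsilon-greedy over the current Q-value estimates."""
    if rng.random() < epsilon:
        return rng.choice(ACTIONS)  # explore
    best = max(q[state])
    # break ties randomly so the untrained agent does not always go left
    return rng.choice([a for a in ACTIONS if q[state][a] == best])

def train(episodes=200, alpha=0.5, gamma=0.9, epsilon=0.1, seed=0):
    """Tabular Q-learning: estimate Q[state][action] from rewards alone."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_CELLS)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            action = choose_action(q, state, epsilon, rng)  # agent acts
            next_state, reward, done = step(state, action)  # environment responds
            # target: reward now plus discounted best value of the next state
            target = reward if done else reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])  # learn
            state = next_state  # observe the new state
    return q

q = train()
# greedy policy for every non-terminal cell after training
greedy_policy = [max(ACTIONS, key=lambda a: q[s][a]) for s in range(N_CELLS - 1)]
print(greedy_policy)  # → [1, 1, 1, 1, 1] (move right in every cell)
```

Nobody tells the agent "move right"; it only ever sees the reward at the end, and the Q-learning update spreads that reward back through the states until moving right becomes the best action everywhere.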

If you feel you need a bit more explanation, here is a nice video by Udacity explaining the basics of reinforcement learning.

So, how far are we from robots that would do my chores?

Actually, we're still quite far from that. RL agents are not even ready to ride that bike, or, for that matter, to really walk on any terrain. But many really clever people are trying to figure out how to get closer to solving those and similarly difficult tasks. In the next posts I'll talk about the newest algorithms that should bring us closer.

Here is a short follow-up. Just some more reading and watching about the topic. :)

This is a pretty good technological progress, good luck, we are following you!

Robots will be able to replace certain moves a person can make, but they'll never be able to do everything a person is capable of doing on the spur of the moment...

@pocketechange

I'm not sure about that, actually. We might be greatly overestimating the "spur of the moment", as it all comes down to our brains, which in the end (according to science and me) are just huge computers. But we'll see. Even with my optimistic view (human-level artificial intelligence is doable), it is probably not going to happen in the next ten years.

There are those who are already looking for "an ideal" mix of both: man and machine. And as has happened with other technological advances, it begins in the military:

Relevant part of the video, starting at 10m 15s: www.youtube.com/watch?v=1brEPzkJIsA&t=10m15s

Channel (other user): www.youtube.com/channel/UCc0AzRNy9TY5r2Fx5C8_8LQ

Regards.

Watching this technology grow is great; I wonder what it will be like in 5 years' time.

Great article, very interesting! I always wondered how it worked. Will follow, please share more interesting posts :D

Thanks, I'll try my best.

@mor thanks for sharing, great article

Good share

That's interesting to learn about. Thank you!

You're welcome :)

Reinforcement learning is amazing. Can a robot ride a bicycle? I look forward to your writing in the future.

Very interesting. Congratulations :)