Will AI Cause World War III?

Yesterday, Jack Ma, the founder of Chinese Internet giant Alibaba (the world’s largest retailer – generating more gross merchandise volume than Amazon and eBay combined), predicted that our current technological revolution and the rise of artificial intelligence (AI) will cause World War III. In an interview with CNBC at the Gateway ’17 conference in Detroit, Ma said, “The first technology revolution caused World War I. The second technology revolution caused World War II.” He concluded his reasoning by pointing out, “This is the third technology revolution.”

Unlike Elon Musk, Ma isn’t worried that artificial intelligence will rise up and kill us, although he does think machines will be smarter than people. The problem, he said, is that “people are already unhappy because machine learning and artificial intelligence has killed a lot of jobs.” His solution is to help small local businesses become global in the hope that this helps them survive, but he warns that “The next 30 years are going to be painful.”

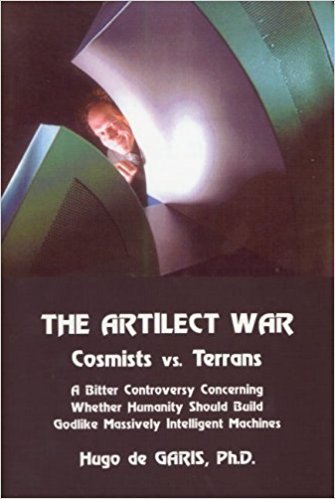

Ma is not the first to make such a claim. In his 2005 book, The Artilect War: Cosmists Vs. Terrans: A Bitter Controversy Concerning Whether Humanity Should Build Godlike Massively Intelligent Machines, Hugo de Garis claims that the question that will dominate global politics this century will be whether humanity should or should not build massively intelligent ARTIficial intelLECTs. His claim is that this question will lead to a major war in which billions of people die.

Others, believing in the so-called “intelligence explosion”, claim that the first AI to be created will have such an advantage that it will likely be able to prevent the creation of any other. This has led to the expectation that many countries will go to war in order to prevent that creation if it appears that a rival is about to succeed. Most peer-reviewed artificial intelligence researchers don’t believe in such overwhelming intelligence – and many believe that it is likely that humans will be able to keep up by using the same computational tools available to “artificially” intelligent entities – but public fears could easily lead to war nonetheless.

We believe that human short-sightedness, most particularly in the forms of fear, inequality and the unwillingness to see the blindingly obvious long-term results of our own actions, is the greatest danger that humanity faces. We are accumulating ever-greater power (and influence) but appear to be back-sliding on applying wisdom in using it. We are our own worst enemies and, as Ma says, "The next 30 years are going to be painful", and possibly fatal, unless we gain and use some much-needed wisdom.

In my opinion, artificial intelligence technology has grown really fast, especially in the last decade, as computing infrastructure has improved and machine learning models have become more accessible. Yet AI is still far from being really intelligent compared to humans; it can only do specific jobs. I look forward to the AI age, and I do think AI will free humans for more creative work by saving the time spent on tedious jobs. Thanks for raising this interesting topic.
