Nonuniform Distributed Consensus - Scaling Steem for Mass Adoption

The

innovation of Steem's blockchain block scheduling system, designed to stop spam transactions and converge within 3 seconds also enables the fastest blockchain transaction achieved to date. But eventually the number of users will grow to the point where this limit is exceeded.

The simple fact is that even if all users were on the same gigabit LAN with all the witnesses there is a point at which you can't add users.

Increasing Throughput Slows Convergence

To enable a greater throughput in the system you have to make a sacrifice in increasing the time to finding consensus.

In switching/routing systems this is called convergence, in blockchain it is called finding consensus. Consensus is when the amount of nodes agreeing on new added data is to the point it is said to be the "main chain". Convergence means all known possible routes are available on all routers, that no existing path or endpoint cannot be reached after it has been added.

Of course there is a necessary delay between transaction posting and it being canonical.

Comparison between Steem and Bitcoin

The Bitcoin blockchain is ad-hoc, broadcasts and replication propagate in mostly random directions, and as they are confirmed, they become confirmed by more nodes. This is why it can take anywhere from 10 to 90 minutes to join onto the main chain.

Steem transactions are faster because unlike Bitcoin, miners do not create blocks and confirm transactions, only new tokens. New blocks are made in 3 seconds. In fact, because of this tight schedule it is difficult to distribute mining power, for each miner account outside of a small, fast network (maybe), and each node requires the miner account master key. This was done in part to stop botnet miners working on the Steem blockchain.

But mining is deprecated in Steem. Witnesses are the important nodes.

What is a Witness?

A witness is a full node that certifies transactions (confirmations) and keeps the complete blockchain to ensure all new transactions are consistent with the canonical chain.

This chain is discovered and is generally within 9 seconds of the latest transactions. Sometimes Witnesses miss their turn to make a block. More rarely, two witnesses might miss a block in a sequence. Very rarely three in a row.

Distributing Witness Workload

Currently, there is a 21 stage schedule that synchronises all witnesses so they produce new blocks on a regular cycle. The election for Witnesses assigns the first 19, one is randomly selected from the runners up, and then one more, which I forget exactly how it is chosen.

Witnesses thus take turns being randomly chosen for the next time slot, and maintain the regular heartbeat of the network.

Fractal Delegation

Currently the pattern of workload distribution is a single main chain. It is basically a ring-buffer, dithered by randomising the positions in the sequence to reduce the ability to use predictive attacks.

But if the network is to scale, it has to break his into multiple groups. The new data should pass through a multi-stage process of integrating data into the consensus. So to achieve this, the schedule used to have just one line, now we want to break it down adaptively into multiple parallel paths.

The result of increased throughput of the network is that there will be a faster rate of generation of new blocks. Scaling the network will require it to allow the careful reduction of data that must be carried by nodes.

Association Analysis and Consensus Account Delegation

The accounts in Steem are recognised to be cost-generating entities. Bitcoin charges by transaction, which raises the minimum transaction. The transactions cost according to the size of the data, and the demand for transaction processing. Steem instead uses a rate limiter, and each account can only get transactions processed as long as they don't exceed a moving average all nodes may not exceed.

In Steem, it is a tracked number on every user account, the size of the data each node pushes out for transaction processing.

So already it is a design principle that user accounts are the active entities. Acknowledging that they both cost and increase the value of the Steem economy, transactions are not charged for but simply you may not spam the network.

So, as point one in considering how to parallelise the network processing capacity, you would need to monitor who is paying who. You could visualise this as a cloud that you can continuously regenerate that plots a network showing the nodes that associate, and this will show you who does not.

From this map you can then instead of centralising it, break it up in accordance with the moving average of the total network load. When correlating scatter plots, it is relatively simple to apply a formula that finds one, two, three or more cluster locii. By this I mean, that the closer a node is to his point, it can be less replicated (as quickly) to other cluster locii.

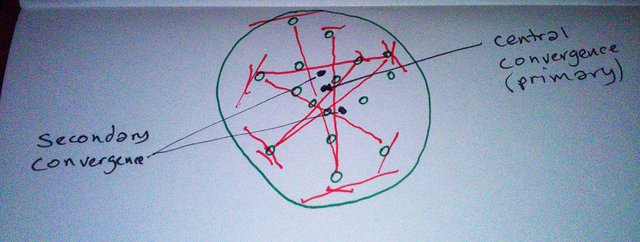

A picture can help explain this:

The green circles are the nodes, their distance from each other is closer the more transacions with each other, the red lines I draw between distant points creates what you can see will generate crisscrossing lines which centre around a midpoint. Then in black I show the single center versus the dual center.

These graphs can be generated by any node with complete blockchains that are correct at the last transaction considered. These patterns allow you to schedule instead two, three, or more parallel network segments, or making it possible for the network to adapt to high load by splitting into more segments, in a way that can become itself part of a consensus.

The witnesss can then delegate multiple parallel processing paths and in the background they can synchronise blocks agreed by network consensus to be canonical though they cover diverging fragments of the whole.

But Wait, There's More!

Next to this, with our transaction associativity graph, you can break it down more. Specifically, you can create a new class of nodes whose job is to distribute he load of client queries. Perhaps there is a need for a name for this. Intermediaries.

The accuracy of their data must be tested and certified in such a way that it is nearly impossible for them to cheat. So, the Intermediaries will be subject to routine queries by Witnesses that know the right answer to every query.

The Purpose of Intermediaries

Intermediaries allow the constructive participation of part time and small nodes to the network. They can apply tentative confirmations to transactions as well as answer any query for data relating o an account.

Intermediaries basically only carry data from a subset of accounts. The domain they cover is generated by the network consensus associativity graph. They cannot be witnesses, but they can preconfirm and enable two account holders to know the transaction is valid, and then he transaction will propagate into the nearest network centre as dictated by the Consensus Distribution Graph.

Parting Comments

By making the calculation of a network associativity graph part of the global network consensus, the distribution for parallelisation becomes possible to agree on by the network. By allowing non authoritative but certified preconfirmation, transactions can be made secure without hem immediately being on the canonical chain. And these nodes can handle queries, which means less retrieval and more certification for Witnesses.

As far as the change it create to Steem the two parts, the associative graph and parallelisation merely change the schedule to allow more than one witness to make a block every 3 seconds.

The Intermediaries are in part to increase the amount of contact surface between users and the network, but also to separate more the job of reading and writing to the database.

As a part of Intermediary codebase, also, they can function simply as caches for a user on their own machine, and build a cache of relevant data. The cache function would allow faster retrieval of blockchain data for users, as Intermediaries can give a less authoritative confirmation of a transaction.

I think the graph analysis algorithm I described will be the more interesting to the Steem devs. Intermediaries span between apps and caches are a client developer concern more. This is just to point at where the work should and probably will be done.

We can't stop here! This is Whale country!

Loki was born in Australia, now is wandering Amsterdam again after 9 months in Sofia, Bulgaria. IT generalist, physics theorist, futurist and cyber-agorist.

Loki's life mission is to establish a secure, distributed layer atop the internet, and enable space migration, preferably while living in a beautiful mountain house somewhere with a good woman, and lots of farm animals and gardens, where he can also go hunting and camping.

"I'm a thoughtocaster, a conundrummer in a band called Life Puzzler. I've flipped more lids than a monkey in a soup kitchen, of the mind."

- Xavier, Renegade Angel

All images in the above post are either original from me, or taken from Google Image Search, filtered for the right of reuse and modification, and either hotlinked directly, or altered by me

Your line of thinking is similar to what we are working on.

The rate of increase in transactions could rise very quickly, since already we have posts, votes, power downs, power ups, conversions, flags, and escrow and savings and sbd and there will be sooner or later new types of transactions related to new applications like PEVO and busy and talk of q&a and wikipedia type sites with different kinds of article posts... So I am pleased to hear this is on the agenda for when the transaction flood comes...

I don't know all of the technical points or terms, Loki, but I'm wondering if Dash is using something like the "intermediaries" you describe to provide their "instant" confirmations for transactions? Imo, the real bottleneck for practical spending of bitcoin - for store purchases and whatever - is the long wait for confirmations. So then, intermediaries could provide a quick preconfirmation for spending transactions as well, is this correct?

I'm thinking at some point it would be good if Steem could become "digital cash" like Dash, especially considering all of the types of apps and web properties that can and will eventually be hooked into Steem.

I am more of a mind now that they are mainly caches and replicators for clients, and with the associativity graph and then the split data caches of blocks can replicate network segments (which is smaller) and Intermediaries. Also, it may be better to split up the network more, sooner, far ahead of transaction demand, since this also could shorten the network heartbeat (time to block). This would also allow a minor hardfork to adapt to increasing utilisation (changing the number of parts you divide the network into) instead of letting that be fully automatic.

If current load was handled by 3 blocks at a cycle, it could also process transactions in one second. The paralel blocks could run on staggered seconds, meaning 1 block per second. There also has to be an overlap of accounts between schedule lines, and a simple scheme to independently determine which witness should take the transaction where each schedule thread overlaps.

I will be thinking a lot about this schene yet. scalability and low latency at the same time is the goal.

Very interesting! Really did my best but still find some things hard to understand. But learned a lot too, thanks for sharing your knowledge :)

Too complicate to understand .

Upp anyway

I think the Intermediaries must create a communication layer between themselves to verify accounts. I know as an account(s) holder I do not want to be locked into any one Intermediary. How would you measure my ability to be on the network? Who pays for the network costs? And how? In any market the health is measured usually in its liquidity. How would you measure the health of the Intermediaries?

It would be a rankng system where failures push them down in a list and a client chooses from the top from those that store relevant data when making a transaction or making a query.

Then, when work is performed, the client acknowledges with a signature and this adds to a reward table for this task. The client could refuse to sign but then the Intermediary can refuse to act on requests afterwards. For this, requests have to be signed, and thus the querant is identified.

Yes, I am not sure exactly. I know caching could be done and to get people to run these as public utilities they have to profit from this. But the point of it is in allowing non-full witness nodes to contribute to the network in a useful way. Because the blockchain will grow fast, to ensure availability, there needs to be a way to lower the bar on storage requirements.

The creation of an association graph helps distribute witness threads to avoid the duplication of included transactions in blocks, but the same graph can be analysed to distribute partial storage nodes. The exact set of accounts an Intermediary handles can be assigned by Witnesses based on the consensus map, and this list is published so clients know where they can pull down data without putting load on witnesses.

The pre-confirmation idea maybe is not necessary but more copies of the blockchain means faster synchronisation for setting up witnesses as well as for other intermediaries, same as how bittorrent fans out distribution of file blocks to partial and full caches, including even other Intermediaries downloading.

There is a law of diminishing returns at some point for replication of data for availability purposes, but I think you can agree that at some point the blockchain must get too big for average systems to fully mirror. And witnesses don't have to fuss so much with data distribution and replication, and, I think important, query handling.

Great stuff. Resteemed!