Deep Learning Explained - in 4 Simple Facts

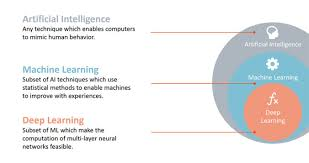

Yesterday, I talked about Machine Learning, and the huge impact it will have on the world in the future. Today, I’d like to talk about a closely related paradigm that often gets mixed up with it, but that is not quite the same thing. I’m talking about Deep Learning.

First off, let me say: this topic is vast. In my article, I’ll try to boil down the main facts, but be warned, you should investigate the matter on your own to learn more.

Hope I can set you on the right path at least. Let’s dive in!

1. Deep learning is a type of machine learning

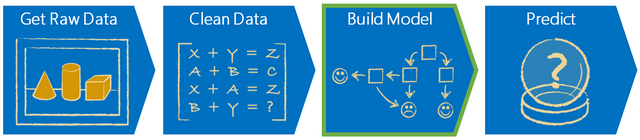

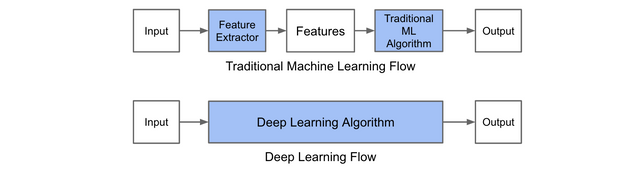

Machine learning, as we previously examined, consists of a machine learning from experience, by perfecting a model it forms after doing a task over and over again.

Deep learning involves learning on a different level. I’ll go into it later, but basically, it forms a lot of connections between different characteristics of the given data, trying to find the hidden patterns with a multi-tiered system. It works in a way that is loosely inspired by the human brain, and adds a layered approach that's very interesting.

That said, it differs from traditional machine learning in some fundamental ways. For starters, it’s more demanding. Try training a Deep Learning algorithm on a low-end machine, and see how slowly it crawls… or how fast it crashes. A traditional machine learning algorithm is more lenient, and can even run on a crappy computer (I should know. My PC crashes during LoL :( ).

On a data level, Deep Learning performs at its best when it has huge volumes of data to analyze. Traditional machine learning can often do its best with a much smaller sample of data.

Finally, on a software level, these different types of systems tend to solve problems differently. But enough technicalities, let’s get into the meat.

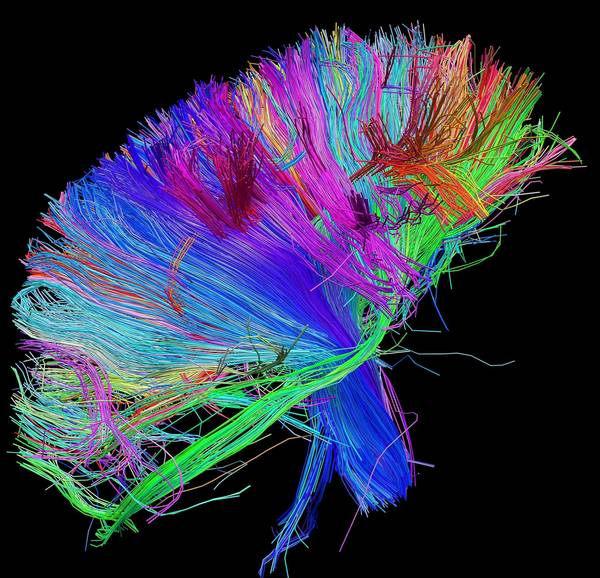

2. It mirrors the functioning of our brains

Have you ever thought about how your brain works?

Put suuuuuper simply (I’m no neurologist, don’t quote me on any of this), there are little “wires” in your brain, called neurons, that take information, and pass it along between themselves to the main parts of the brain that handle certain functions. The brain parts do their job, and info is passed again, until the end goal of the neural activity is reached.

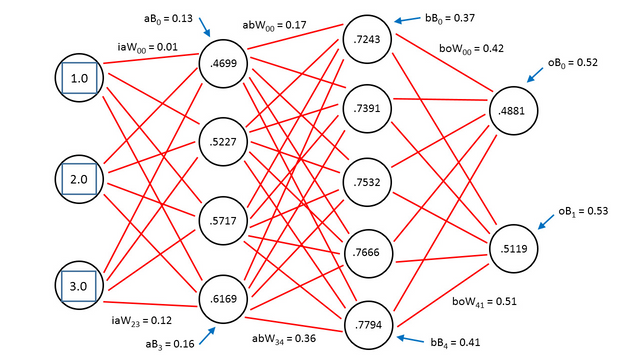

Deep learning imitates this sort of structure, with some differences. First, the data is fed into many nodes (taking the place of neurons). These nodes pass the data on to other nodes, which manipulate it in some way, so as to make it more usable for the end goal of the system. The most common way the data gets manipulated is by assigning a weight to it. The weight determines how valuable that information is for the desired output. However, this is where the differences between neurons and Deep Learning come into play.
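
To make the idea of a node and its weights a bit more concrete, here is a minimal sketch in Python. Everything in it is my own assumption for illustration: the function names, the numbers, and the choice of a sigmoid to squash the result are not taken from any particular library or system.

```python
import math

def sigmoid(x):
    """Squash any number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def node_output(inputs, weights, bias):
    """One node: multiply each input by its weight, add everything up, squash the total."""
    total = sum(i * w for i, w in zip(inputs, weights)) + bias
    return sigmoid(total)

# Hypothetical values, chosen only for illustration.
inputs = [0.5, 0.9, 0.1]
weights = [2.0, 0.0, -1.0]  # a weight of 0 means "ignore this input"
print(node_output(inputs, weights, bias=0.0))
```

Notice that the second input has no effect on the result at all, because its weight is 0. That is the "how valuable is this information" idea in its simplest form.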

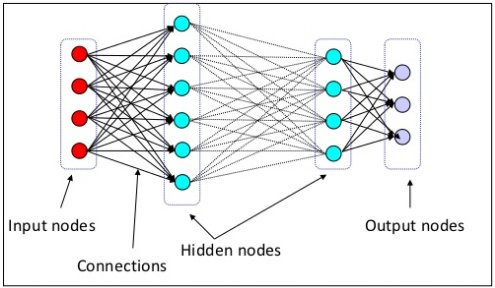

3. Deep learning works in layers

The nodes I mentioned previously are just the first layer of such nodes that assign weights to the data. After that, the data gets passed to another layer, which takes the data as manipulated so far and manipulates it even more. The layers go on and on until the output is reached.
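
The layer-after-layer idea can be sketched in a few lines of Python. This is a toy under my own assumptions: the network shape (3 inputs, 2 hidden nodes, 1 output), the weights, and the helper names are all made up, and real frameworks do this far more efficiently.

```python
import math

def sigmoid(x):
    """Squash any number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def layer_forward(inputs, weight_rows, biases):
    """Run one layer: each row of weights belongs to one node in that layer."""
    return [
        sigmoid(sum(i * w for i, w in zip(inputs, row)) + b)
        for row, b in zip(weight_rows, biases)
    ]

def network_forward(inputs, layers):
    """Pass the data through every layer in order; each layer's output feeds the next."""
    values = inputs
    for weight_rows, biases in layers:
        values = layer_forward(values, weight_rows, biases)
    return values

# A made-up network: 3 inputs -> 2 hidden nodes -> 1 output node.
layers = [
    ([[0.2, -0.4, 0.7], [0.5, 0.1, -0.3]], [0.0, 0.1]),
    ([[1.5, -2.0]], [0.0]),
]
output = network_forward([1.0, 0.5, 0.0], layers)
print(output)
```

Adding more entries to `layers` makes the network "deeper", which is where the "deep" in Deep Learning comes from.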

Confused? Yeah, so was I when I first learned of this. Let me give you an example. Let’s say we want a computer to be able to identify a picture of something. Let’s say, a cat (this is the example most people give. I guess Deep Learning researchers love cats hehe).

The computer would start by dividing the above picture into a ton of small pixels. Every pixel would then go to a separate node, which would evaluate how important it is to the overall picture. The blank space is not important at all. It would get a weight of 0. The cat ones are pretty important, so they would get a value of 1, or close to that.

Then, the processed picture goes into the next layer. It looks at the colors, and determines if the colors are suited for a cat. The pixels it doesn’t think belong to a cat get a weight of 0, the ones that do, get a 1. Then, this picture goes into another layer, then another, then another.
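
Here is a rough Python sketch of that first step, with a tiny made-up 4x4 "picture". Keep in mind this is a simplification of my own: in a real network the weights are learned during training, not set by a simple threshold like the hypothetical `importance_mask` below.

```python
# A hypothetical 4x4 grayscale "picture": 0.0 is blank, 1.0 is fully dark.
image = [
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 0.9, 0.8, 0.0],
    [0.0, 0.7, 1.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
]

def flatten(image):
    """Turn the grid of pixels into one long list, one value per input node."""
    return [pixel for row in image for pixel in row]

def importance_mask(pixels, threshold=0.5):
    """Give each pixel a weight of 1 if it is darker than the threshold, else 0."""
    return [1.0 if p > threshold else 0.0 for p in pixels]

pixels = flatten(image)
weights = importance_mask(pixels)
# Blank pixels are multiplied by 0 and drop out; the rest pass through unchanged.
weighted = [p * w for p, w in zip(pixels, weights)]
print(weighted)
```

The `weighted` list is what would be handed to the next layer in this toy version.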

What you finally get is a bunch of numbers, which are then turned into a probability of the picture being a cat or not. The researchers then tell it whether it was a cat or not, and it stores this experience in a model. That model can be perfected later on with more cat pictures, until it can tell with high accuracy if there is a cat in the picture or not.
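
This "guess, get corrected, adjust" loop can be sketched like so. To keep it short I'm assuming a single node trained with plain gradient descent (technically logistic regression, not a deep network), and the two features per "picture" and their labels are invented for the example.

```python
import math

def sigmoid(x):
    """Squash any number into the range (0, 1) so it can be read as a probability."""
    return 1.0 / (1.0 + math.exp(-x))

def predict(features, weights, bias):
    """Return the model's estimated probability that the example is a cat."""
    return sigmoid(sum(f * w for f, w in zip(features, weights)) + bias)

def train(examples, labels, epochs=2000, learning_rate=0.5):
    """After every example, nudge the weights based on how wrong the guess was."""
    weights = [0.0] * len(examples[0])
    bias = 0.0
    for _ in range(epochs):
        for features, label in zip(examples, labels):
            error = predict(features, weights, bias) - label
            weights = [w - learning_rate * error * f for w, f in zip(weights, features)]
            bias -= learning_rate * error
    return weights, bias

# Made-up data: two features per "picture", label 1 = cat, label 0 = not a cat.
examples = [[1.0, 1.0], [0.9, 0.8], [0.1, 0.2], [0.0, 0.0]]
labels = [1, 1, 0, 0]
weights, bias = train(examples, labels)
print(predict([1.0, 1.0], weights, bias))  # should come out close to 1
print(predict([0.0, 0.0], weights, bias))  # should come out close to 0
```

The `weights` and `bias` that come out of `train` are the "model" here: feed it more labeled examples and it keeps adjusting.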

4. It will drive an AI explosion in the future (and is already doing it)

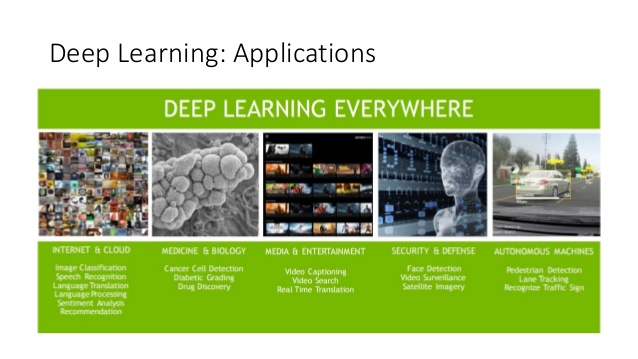

Remember all the possible applications I mentioned Machine Learning would have in the previous post? Well, this one could take every single one of those possible applications, and supercharge them.

Whereas Machine Learning allows a machine to simply learn, this paradigm has given birth to systems that outperform humans on some specific tasks. Many of the things a Machine Learning program can do, these systems can do better, as long as they have enough data.

Many programs have been made to understand what a human is saying with impressive accuracy, for instance (Goodbye crappy YouTube subtitles!). Other programs can analyse a picture, and say what’s in it with near-perfect results (they can play “Where’s Waldo?” better than most humans). They can read your shopping list and make a good guess at what you could want next. And there are many more applications still.

With systems like this, a machine can understand what’s around it far better than before, and that could prove to be a major breakthrough for AI. I will talk about that in one of my later articles…

Mind-bending stuff, right?

This topic is very confusing, and even after investigating it in depth, I still can't say I understand it fully. However, I truly believe this will be a very important topic in the world of technology later on, and did my best to pass down what I know!

I'll be covering an even more confusing topic next time - Artificial Intelligence! That will bring us full circle in this series of articles! It might take some time though - this last one will probably be the hardest to make of all 3.

In the meantime, if you enjoyed this article, or have some question or criticism, feel free to sound off in the comments! I’m always looking to improve, and I’m far from an expert in this subject, so I advise you to look for info on your own.

I hope I’ve whetted your appetite. Till next time, dear readers!

Awesome article! I really enjoyed this.

Thanks man! That means a lot to me :D

I try to deliver content like this consistently, and I'd like people to keep giving me feedback. Consider following if you want to see more :)

I have already followed you, haha. :)

hahahaha, sorry bro, my bad, and thanks :D

Gonna follow you back!

I very much enjoy your articles. You've written about it in a clear way that non-experts could understand. It's not easy. Keep up the great work.

Dude, feedback like this is why I keep making these articles! I'm on summer vacation goddamnit, and it IS HARD AS HELL to deliver these consistently! Thanks a lot for your support :D

Congratulations on the article! It's very well explained. A few years ago I also invested a lot of time in AI & Machine Learning but, in the end, I gave up because it's not so easy to make even a mock-up of this kind of algorithm. Have you ever tried to implement a DL algorithm, at least, let's say, to recognize letters?

Thanks! I did my best :D

I too am beginning to invest time into this, but due to pressures on my schedule (I should be on vacation :( ) I haven't been able to implement any algorithm yet. I'm looking at the TensorFlow tutorials, so when I start, it will likely be around that. Anyway, when I get around to it, I will post the results right here, so feel free to follow if you want :)

TensorFlow is really straightforward! I'd recommend trying it right away on real data by just copying a tutorial and then changing it so it accepts your inputs. Then play around with the parameters a bit to see how good you can get it to be. I first started playing around with TF a month ago and I already have a pretty good project going on. Keep me updated on your progress. :)

Great read.

Thanks for the good explanation, looking forward to more articles from you.

Just saw this now friend! I like to answer everyone! If I'd known then how much you'd have helped me in the future, I'd have answered on the spot!

It's my pleasure to inform people, and I hope I can continue churning out good articles like this :D

Wow, great to see a deep learning community has potential on this site. Hopefully more people make posts like this, so it can grow. :)))

Awesome Post!

I have studied accelerated learning most of my life, and i am currently working on an ebook based on behavior change, utilizing this exact process.

This is how our subconscious mind works, building reference experiences, finding regularity or patterns in the experience, and then forming general models that allow us to predict events and behavior.

On an individual level, the vast majority of people have never learned to take this fundamental unconscious learning process and to do it deliberately, and this is what i intend to change.

The real benefit comes when we can link this unconscious patterning process to the conscious ability to represent information.

Great post man, liked and followed, look forward to reading more posts from you in the future!