Exposing the invisible: Multi- and Hyperspectral imaging

I think it is safe to assume most of us are at least partially familiar with how a camera works. For what we as humans can see, the technique is perfect for capturing your delicious food or funny pets (of course, cameras can be used for more serious matters too). But a lot of information still hides in the light that hits your sensor. As most people probably know, light consists of a range of wavelengths within the electromagnetic spectrum, but in a regular camera this is reduced to only three colors: red, green and blue.

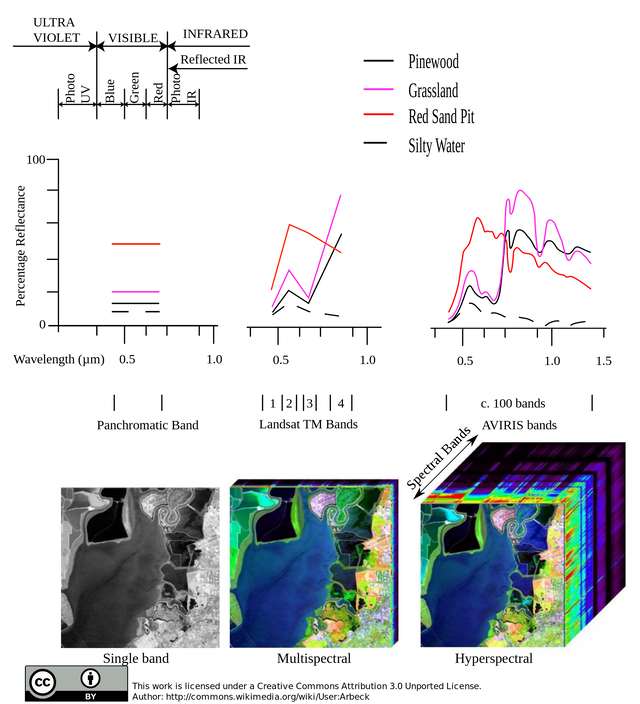

If we could only differentiate more wavelengths in a picture, perhaps more information could be extracted from an image than just the colors. That is exactly the aim of multi- and hyperspectral imaging. Multispectral cameras work similarly to a regular camera, but use a different arrangement of filters in order to capture more "bands". Hyperspectral imaging uses different techniques, with the goal of capturing the spectrum as a near-continuous range of wavelengths rather than being restricted to a few broad bands.

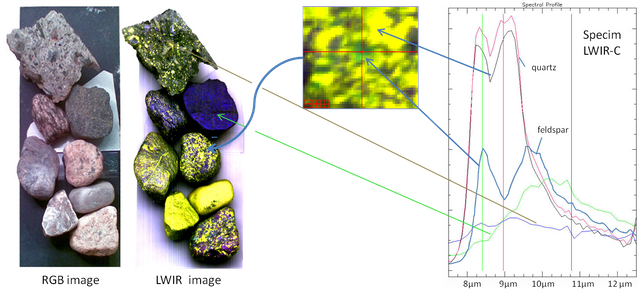

Regular RGB image on the left compared to a hyperspectral measurement of the same scene. In this example, precise measurements allow minerals to be identified solely from the reflected light.

How a regular camera perceives light

The lens projects an image onto a sensor consisting of many light-sensitive pixels. Each pixel stores an electric charge that depends on the amount of light that hit it, after which the charges are "read" and used to construct the image.

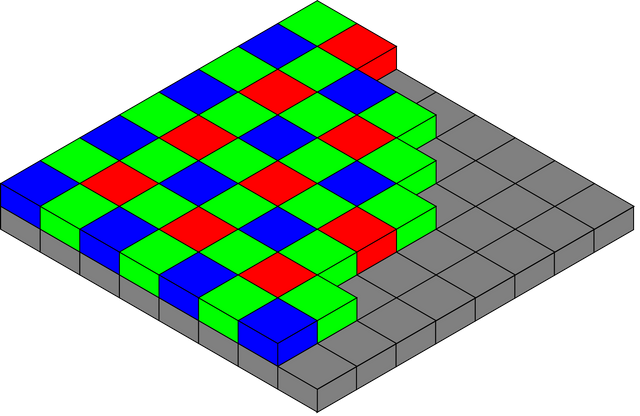

By itself, an image sensor does not capture color. The pixels are sensitive to all light, and further adaptations have to be made in order for you to catch your selfie in RGB tones and not only as a panchromatic grey image. What makes this possible is most often a Bayer filter. Other filter techniques exist, but this is the most common due to its low cost. With this filter, each photosensitive pixel sits behind a filter of one particular color, so that the pixel only captures the light intensity of that color. In practice 50% of the pixels are green, and blue and red make up the other 50% (25% each). This is done to mimic the human eye, which during the day is more sensitive to green.
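To make the 50/25/25 split concrete, here is a minimal sketch in plain Python. It assumes an "RGGB" layout (red and green alternate on even rows, green and blue on odd rows), which is one common arrangement; real sensors may start the pattern on a different color. The function names `bayer_color` and `bayer_mosaic` are made up for this illustration.

```python
from collections import Counter


def bayer_color(row, col):
    """Return the filter color over a pixel, assuming an RGGB layout."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def bayer_mosaic(rgb_image):
    """Keep, for every pixel, only the channel its filter lets through.

    rgb_image is a list of rows; each row is a list of (r, g, b) tuples.
    The result is a single-channel "raw" image of the same size.
    """
    channel = {"R": 0, "G": 1, "B": 2}
    return [
        [pixel[channel[bayer_color(r, c)]] for c, pixel in enumerate(row)]
        for r, row in enumerate(rgb_image)
    ]


# Count the filter colors on a tiny 4x4 sensor.
counts = Counter(bayer_color(r, c) for r in range(4) for c in range(4))
fractions = {color: n / 16 for color, n in counts.items()}
print(fractions)  # {'R': 0.25, 'G': 0.5, 'B': 0.25}

# A 2x2 test image where every pixel is (10, 20, 30).
raw = bayer_mosaic([[(10, 20, 30)] * 2] * 2)
print(raw)  # [[10, 20], [20, 30]]
```

Note that the raw image holds only one number per pixel; the two missing colors at each position have to be estimated afterwards.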

The raw image can then be processed in a variety of manners to produce a final image.

Illustration of a Bayer filter. Each pixel has a red, green or blue filter. This is later processed, "demosaiced" or "debayered" to construct an image.
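As a rough idea of what "demosaicing" involves, the sketch below collapses every 2x2 cell of a raw image into one RGB pixel, averaging the two green samples. This is a deliberately naive approach that halves the resolution; real cameras use interpolation methods that keep full resolution. The RGGB layout and the function name `demosaic_by_cells` are assumptions for the example, and the raw values are invented.

```python
def demosaic_by_cells(raw):
    """Collapse each 2x2 RGGB cell of a raw image into one RGB pixel.

    raw is a list of rows of single intensity values laid out as
        R G
        G B
    The output has half the width and half the height of the input.
    """
    rgb = []
    for r in range(0, len(raw) - 1, 2):
        row = []
        for c in range(0, len(raw[r]) - 1, 2):
            red = raw[r][c]
            green = (raw[r][c + 1] + raw[r + 1][c]) / 2  # two green samples
            blue = raw[r + 1][c + 1]
            row.append((red, green, blue))
        rgb.append(row)
    return rgb


# Invented raw readings for a 2x4 sensor (two RGGB cells side by side).
raw = [
    [200, 100, 10, 20],
    [120, 50, 40, 30],
]
pixels = demosaic_by_cells(raw)
print(pixels)  # [[(200, 110.0, 50), (10, 30.0, 30)]]
```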

This technique is adequate for displaying and reproducing images that will mainly be visually assessed.

Multispectral Imaging

Multispectral imaging works similarly to a regular photo sensor, where certain pixels are targeted at a specific group of wavelengths. In fact, regular cameras are a kind of multispectral camera, as they capture three bands. But besides the three bands for red, green and blue, additional bands can be recorded to visualize light that is not visible to the human eye. This imaging technique is often used in satellite cameras, which need additional bands for monitoring the earth's surface. With this extra data, calculations can be made to assess vegetation cover, water bodies, urbanization and much more.

The Thematic Mapper flown on Landsat 4 and 5, for example, recorded seven spectral bands, ranging from blue through the near and shortwave infrared to the thermal infrared.

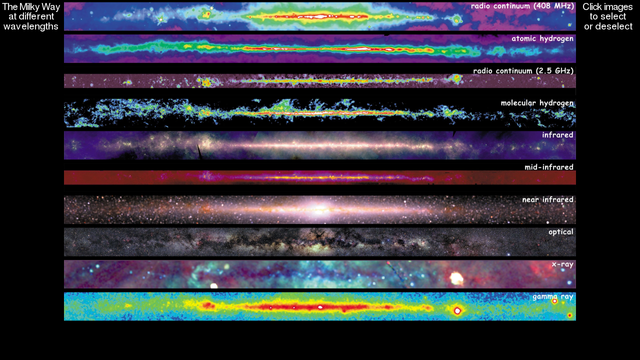

Multispectral images showing a variety of ways to visualize the Milky Way (note: these are not Landsat images). False colors are used because the bands outside the visible range cannot be seen by the human eye.

Hyperspectral imaging

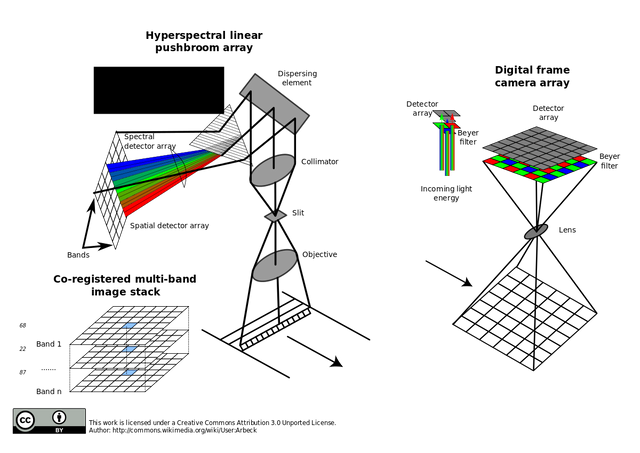

Instead of filtering out certain bands of light, hyperspectral imaging techniques capture the entire spectrum and measure the intensity across a broad range of wavelengths. They do this by spreading the incoming light out into its component wavelengths, for example by refraction, and capturing the intensity at each position of the resulting spectrum. This vastly increases the spectral resolution that can be measured, but it also comes with drawbacks.

Since the refracted light has to be projected onto a sensor, only one line of the scene can be analysed at a time. For each line of the image the entire spectrum is measured, and instead of multiple images, a three-dimensional matrix of data (often called a data cube) is acquired. On the other hand, this increases the time needed to capture a full image and reduces the mobility of such systems. For small and precise measurements, objects can be moved on a conveyor belt in front of the sensor.
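The line-by-line acquisition can be pictured with a small sketch in plain Python. Everything here is hypothetical: `scan_line` stands in for a single sensor exposure, and the "scene" is filled with invented numbers so the indices are easy to follow. The point is only to show how stacking the lines yields a cube indexed as `cube[line][pixel][band]`.

```python
LINES, PIXELS, BANDS = 3, 4, 5

# Invented scene: the value encodes its own position (100*line + 10*pixel + band).
scene = [
    [[100 * y + 10 * x + b for b in range(BANDS)] for x in range(PIXELS)]
    for y in range(LINES)
]


def scan_line(scene, y):
    """Hypothetical single exposure: the full spectrum of every pixel on line y."""
    return [list(spectrum) for spectrum in scene[y]]


def acquire_cube(scene):
    """Scan the scene one line at a time and stack the results into a cube."""
    return [scan_line(scene, y) for y in range(len(scene))]


def band_image(cube, band):
    """Slice the cube at one wavelength band to get an ordinary 2D image."""
    return [[pixel[band] for pixel in line] for line in cube]


cube = acquire_cube(scene)
shape = (len(cube), len(cube[0]), len(cube[0][0]))
print(shape)                       # (3, 4, 5)
print(cube[2][0])                  # spectrum of one pixel: [200, 201, 202, 203, 204]
print(band_image(cube, 2)[1][3])   # one pixel of the band-2 image: 132
```

Slicing along the band axis gives back something like a multispectral image, while reading along the last axis gives the full spectrum of a single pixel.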

Schematic comparison of hyperspectral imaging (left) and regular photo sensors (right). Only one line can be scanned each time as that line gets refracted and fully occupies the sensor. On regular cameras, this refraction is replaced by filters.

Different outputs from single band imagery, multispectral imagery and hyperspectral imagery.

Uses of multi- and hyperspectral images

Multispectral images are often used in satellite imagery, as they allow the earth's surface to be observed more thoroughly. Furthermore, they make it possible to calculate indices such as the Normalized Difference Vegetation Index (NDVI) or the Normalized Difference Water Index (NDWI), concepts that would not even exist without multispectral imaging. Thanks to these sensors, global vegetation trends or land use changes can be observed in only a fraction of the time. Another common use of these extra bands is managing farmland to maximize productivity.
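Both indices follow the same pattern: the difference of two bands divided by their sum. NDVI uses the near-infrared and red bands, (NIR - Red) / (NIR + Red). NDWI has more than one definition; the sketch below uses the variant based on the green and near-infrared bands, (Green - NIR) / (Green + NIR). The reflectance values are invented purely for illustration, and returning 0.0 when both bands are zero is my own choice for the example.

```python
def normalized_difference(a, b):
    """Return (a - b) / (a + b), or 0.0 when both bands are zero."""
    total = a + b
    return (a - b) / total if total != 0 else 0.0


def ndvi(nir, red):
    """Normalized Difference Vegetation Index for one pixel."""
    return normalized_difference(nir, red)


def ndwi(green, nir):
    """Normalized Difference Water Index (green/NIR variant) for one pixel."""
    return normalized_difference(green, nir)


# Invented reflectance values (0..1) for two kinds of surface.
vegetation = {"green": 0.10, "red": 0.05, "nir": 0.50}
water = {"green": 0.10, "red": 0.08, "nir": 0.02}

veg_ndvi = ndvi(vegetation["nir"], vegetation["red"])
water_ndvi = ndvi(water["nir"], water["red"])
water_ndwi = ndwi(water["green"], water["nir"])

print(round(veg_ndvi, 2), round(water_ndvi, 2), round(water_ndwi, 2))
```

With these made-up numbers the vegetation pixel gets a strongly positive NDVI and the water pixel a negative one, which is the kind of contrast that makes the index useful for mapping vegetation cover.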

Hyperspectral images have broadened horizons as well. Specific materials can be detected by their unique spectral signature: the individual dips or peaks in reflectance at certain wavelengths. These spectral signatures allow geologists, for example, to identify certain minerals or potentially resource-rich rock formations. The signatures can also be used in defense or remote sensing to distinguish man-made elements in the environment from natural ones.
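One simple way to compare a measured spectrum against known signatures is the spectral angle: treat each spectrum as a vector and measure the angle between them, so that overall brightness matters less than the shape of the curve. This is just one technique among many, picked here for illustration. The two "minerals" and all reflectance values below are invented, not real library spectra.

```python
import math


def spectral_angle(a, b):
    """Angle in radians between two spectra treated as vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("spectra must not be all zeros")
    # Clamp to guard against tiny floating point overshoots.
    cosine = max(-1.0, min(1.0, dot / (norm_a * norm_b)))
    return math.acos(cosine)


# Invented reference signatures: reflectance in five bands.
library = {
    "mineral_a": [0.30, 0.35, 0.10, 0.40, 0.45],  # dip in the third band
    "mineral_b": [0.20, 0.25, 0.30, 0.35, 0.15],  # dip in the last band
}

# Invented measurement: same shape as mineral_a, but brighter and a bit noisy.
measured = [0.60, 0.70, 0.22, 0.80, 0.90]

best = min(library, key=lambda name: spectral_angle(measured, library[name]))
print(best)  # mineral_a
```

Because the angle ignores scaling, the brighter measurement still matches `mineral_a`, whose dip sits in the same band.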

As you can see, my examples are mainly geographical and geological, since these are my personal interests, but the number of fields this technology can be applied to is remarkably vast.

Conclusion

A lot more information is hiding in the light that surrounds us every day. If we could only make this information more accessible, I believe a lot of new innovations could arise. Just picture every smartphone camera with the capability to capture the entire spectrum of light. This, combined with the unique spectral signatures of objects, artificial intelligence and object recognition, would make for some really interesting new applications! I hope you enjoyed reading this as much as I enjoyed writing and researching it. If you have any comments, questions or remarks on the topic or my writing, please let me know!

Regards!

Sources

All images in this article were labeled for reuse.

https://technology.nasa.gov/patent/TOP2-262

http://www.whatdigitalcamera.com/technology_guides/bayer-filter-work-60461

Multispectral imaging: a review of its technical aspects and applications in anatomic pathology. Vet Pathol. 2014 Jan;51(1):185-210. doi: 10.1177/0300985813506918. Epub 2013 Oct 15.

https://www.imec-int.com/en/hyperspectral-imaging

I am wondering how much social media would become flooded with "ghost" pictures and stories. And of course all the explanations that try to disprove those. Sounds like a lot of fun.

Hah! I didn't even think about that. ghost hunting would become a lot more scientific then!