Black Box Files: How Does Inspirobot Do Its Thing? (Generative Systems)

A couple days ago, I introduced you to Inspirobot, an AI designed to create creepy "inspirational" posters. Today, I'm going to try to figure out exactly how it operates.

While we are well past the point of coding everything by hand, the vast majority of machine learning is still at the stage where humans have to carefully craft what the machine is to learn. Inspirobot is surely not just the result of throwing a generic "deep learning" network and a lot of computational power at a collection of motivational quotations. The less structure a system has to work within and the smaller its head start, the more resources (data, computational power and time) it takes to learn.

Inspirobot is a "generative" system. It has learned the basic rules behind motivational quotes and how to "fill in the blanks" -- and, since the pool of material for filling in the blanks is unreasonably large, it will rarely repeat itself (and almost never exactly). On the other hand, by examining enough Inspirobot phrases, we can recognize the general structure that Inspirobot learned from its regularities and repetitions -- both exact and generalized -- and then tease out how it was taught.

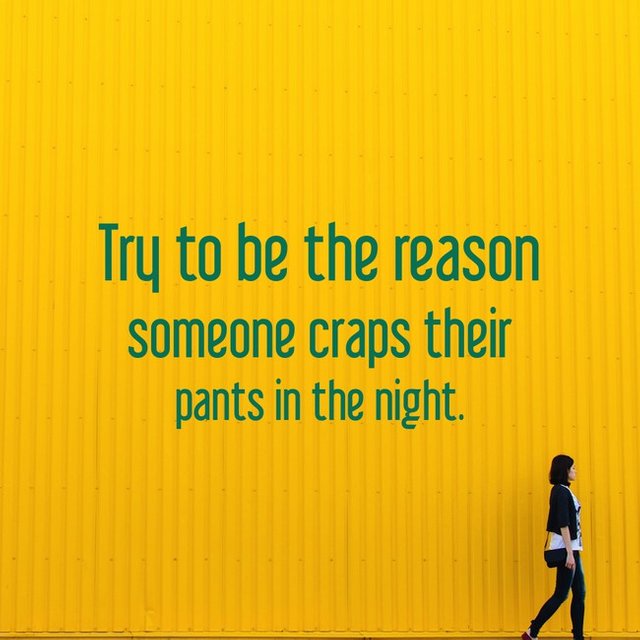

As we should expect, Inspirobot demonstrates regularity at multiple levels. First, Inspirobot clearly works at the phrase level rather than the level of individual words. For example, both of the following have the general high-level structure of <strive-to><result><time/occasion>, with the phrasing of the <result> and, to a lesser extent, the <strive-to> being nearly identical.

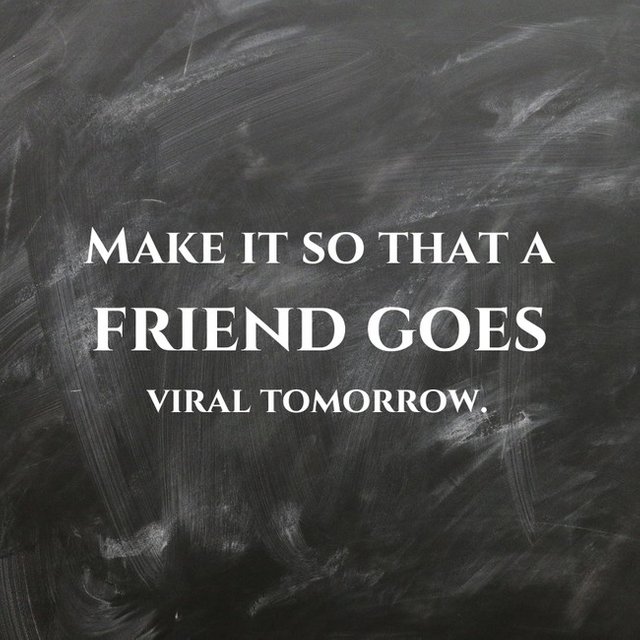

Another set of examples, still with the same <strive-to><result><time/occasion> structure, is the following:

In the last case, the occasion of <today> or <soon> is implied rather than actually present.

Given a few different variations on <strive-to>, a moderate number of <time/occasion>s (though there are many more than you would expect) and a large number of <result>s, Inspirobot can *generate* a combinatorially large number of phrases from just this one structure.
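To make the combinatorics concrete, here is a minimal sketch of slot-filling from a single template. This is only a guess at the general idea, not Inspirobot's actual code: the `SLOTS` table, the filler phrases and the function names are all invented for illustration.

```python
import itertools
import random

# Hypothetical slot fillers, made up for this example.
# They are NOT taken from Inspirobot's real vocabulary.
SLOTS = {
    "strive-to": ["Try to", "Strive to", "Make an effort to"],
    "result": [
        "become a better person",
        "find inner peace",
        "learn something new",
        "forgive yourself",
    ],
    "time/occasion": ["today", "soon", "before breakfast"],
}

# The one structure discussed above: <strive-to><result><time/occasion>
TEMPLATE = ["strive-to", "result", "time/occasion"]


def generate(rng):
    """Fill each slot of the template with one randomly chosen phrase."""
    return " ".join(rng.choice(SLOTS[slot]) for slot in TEMPLATE) + "."


def count_phrases():
    """Number of possible phrases: the product of the slot sizes."""
    total = 1
    for slot in TEMPLATE:
        total *= len(SLOTS[slot])
    return total


def all_phrases():
    """Enumerate every phrase the template can produce."""
    choices = [SLOTS[slot] for slot in TEMPLATE]
    return [" ".join(combo) + "." for combo in itertools.product(*choices)]


if __name__ == "__main__":
    print(generate(random.Random()))
    print(count_phrases(), "possible phrases")
```

Even this toy table of 3, 4 and 3 fillers yields 3 x 4 x 3 = 36 phrases; with hundreds of <result>s the count grows quickly, which is why exact repeats are rare.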

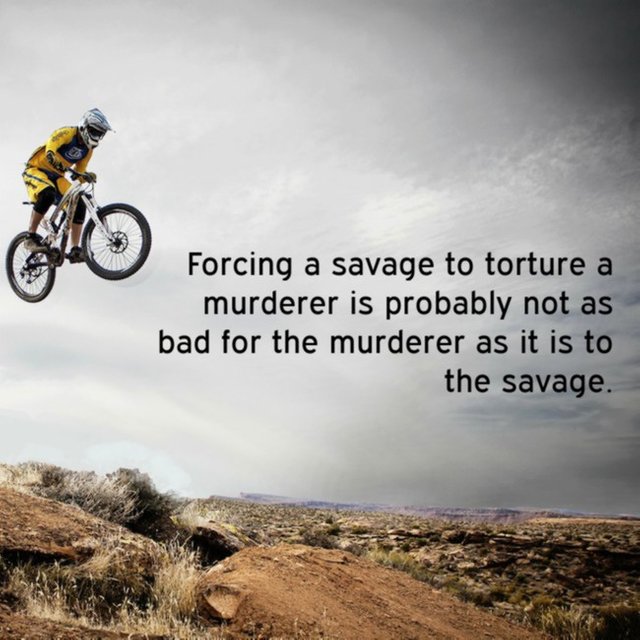

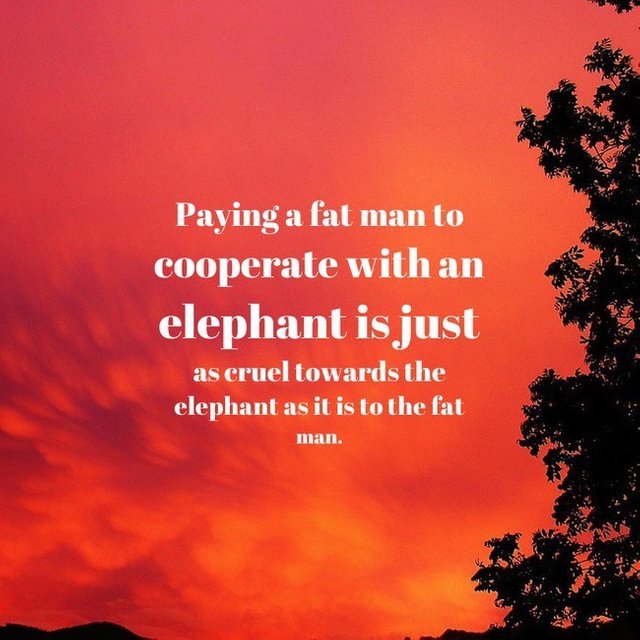

As structures get more complex, more variations can be generated -- but there are far more opportunities for weirdness due to the parts not interacting as we would expect.
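As a rough illustration of that trade-off, the sketch below counts how many phrases a small nested structure can produce. The `GRAMMAR` table and its words are hypothetical, chosen only to show the effect; nothing here is known about how Inspirobot actually stores its structures.

```python
# Hypothetical nested grammar: each symbol maps to a list of alternatives,
# and each alternative is a sequence of symbols or literal words.
# Anything that is not a key in GRAMMAR is treated as a literal.
GRAMMAR = {
    "phrase": [["strive-to", "result", "time/occasion"]],
    "strive-to": [["try to"], ["strive to"]],
    # A <result> is either one verb/object pair or two joined by "and".
    "result": [
        ["verb", "object"],
        ["verb", "object", "and", "verb", "object"],
    ],
    "verb": [["embrace"], ["forgive"], ["outgrow"]],
    "object": [["your fears"], ["the ocean"], ["a stranger"]],
    "time/occasion": [["today"], ["soon"]],
}


def count_expansions(symbol):
    """Count the derivations of a symbol: sum over its alternatives,
    multiplying the counts of the parts within each alternative."""
    if symbol not in GRAMMAR:
        return 1  # a literal word expands exactly one way
    total = 0
    for alternative in GRAMMAR[symbol]:
        ways = 1
        for part in alternative:
            ways *= count_expansions(part)
        total += ways
    return total


if __name__ == "__main__":
    print(count_expansions("result"))  # 9 simple + 81 compound results
    print(count_expansions("phrase"))
```

Allowing the compound <result> raises the count for that slot from 9 to 90, but nothing stops the grammar from pairing "forgive" with "the ocean" -- exactly the kind of weirdness that appears when the parts are combined without regard to how they interact.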

So -- how does a system learn structures like <strive-to><result><time/occasion> and how to expand each part? That will be covered in my next post.

