Why modern business runs on streaming data || Part 1/2

Data is moving. Almost every data source has an element of dynamism and movement. Even data now at rest in archival storage once led a more fluid life between applications, devices, and backbones, and it inevitably reached that storage via some transport mechanism.

But while almost all data moves, not all data moves at the same speed, at the same cadence, or with the same regularity, size, or value.

Continuous data in streams

In the ever-active world of cloud computing, the ubiquity of mobile devices, and the new population of intelligent "edge" machines on the Internet of Things (IoT), there is a continuous flow of data that we naturally call a stream.

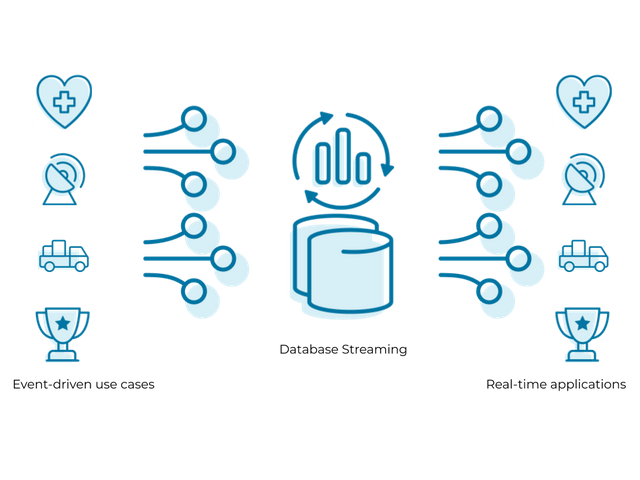

So what is data streaming and how should we understand and work with it?

Data streaming is a computing principle and an operational reality of IT systems in which (usually small) elements of data travel through the system in a time-ordered sequence.
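To make "time-ordered sequence" concrete, here is a minimal Python sketch. The `Event` record and the `merge_streams` helper are hypothetical names invented for illustration, and the sketch assumes each source already emits its events in timestamp order. It lazily merges two small sources into one stream using the standard library's `heapq.merge`.

```python
import heapq
from dataclasses import dataclass
from typing import Iterable, Iterator


@dataclass(frozen=True)
class Event:
    """A small element of data in a stream (hypothetical shape)."""
    timestamp: float  # seconds since an arbitrary start point
    source: str       # which device or application emitted it
    value: str        # the payload, kept tiny on purpose


def merge_streams(*sources: Iterable[Event]) -> Iterator[Event]:
    """Lazily merge sources into one time-ordered stream.

    Assumes every source is already ordered by timestamp.
    """
    return heapq.merge(*sources, key=lambda e: e.timestamp)


# Two illustrative sources: mouse clicks and key presses.
clicks = [Event(1.0, "mouse", "click"), Event(4.0, "mouse", "click")]
keys = [Event(2.5, "keyboard", "k"), Event(3.0, "keyboard", "a")]

merged = list(merge_streams(clicks, keys))
for event in merged:
    print(event.timestamp, event.source, event.value)
```

The merge pulls one event at a time from each source, so it never needs the whole stream in memory, which is the property that matters when the flow is continuous.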

Often mentioned in the same breath as IT "events" (everything from a user clicking a mouse button or pressing a key on a keyboard... to the asynchronous changes that occur when applications run code and do their work), the small elements of data that make up streams can take many forms: log files (small records tracking every step of behavior performed by applications and services), financial transaction logs, web browser activity records, data from IoT smart-machine sensors, geospatial or telemetry information, in-game information about movement and action in a video game... and everything down to the instrumentation records of the smallest device that, like everything else here, creates blobs of data that form part of a continuous flow that ultimately forms a stream.

An organization that takes a data-driven approach can take practical steps to analyze its data streaming channels in real time and gain a detailed and accurate view of what is happening in the business.

By using a streaming data platform to perform sequential analysis of each data record in a stream, an organization can sample, filter, correlate, and aggregate its streaming data pipeline and begin to create a new layer of business insight and control.
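The four operations named above can be sketched as a chain of Python generators that handle one record at a time. This is only an illustration under stated assumptions, not how any particular streaming platform works: the record shape (`device`, `ts`, `temp`), the `locations` lookup table, and all function names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator

Record = dict  # hypothetical shape: {"device": str, "ts": int, "temp": float or None}


def sample(records: Iterable[Record], every: int) -> Iterator[Record]:
    """Sample: keep one record out of every `every` records."""
    for i, record in enumerate(records):
        if i % every == 0:
            yield record


def keep_valid(records: Iterable[Record]) -> Iterator[Record]:
    """Filter: drop records that carry no temperature reading."""
    for record in records:
        if record["temp"] is not None:
            yield record


def correlate(records: Iterable[Record], locations: Dict[str, str]) -> Iterator[Record]:
    """Correlate: attach the site each device belongs to."""
    for record in records:
        yield {**record, "site": locations.get(record["device"], "unknown")}


def average_by_site(records: Iterable[Record]) -> Dict[str, float]:
    """Aggregate: running sum and count per site, reduced to an average."""
    totals = defaultdict(lambda: [0.0, 0])
    for record in records:
        entry = totals[record["site"]]
        entry[0] += record["temp"]
        entry[1] += 1
    return {site: total / count for site, (total, count) in totals.items()}


# Illustrative sensor readings, already in time order.
readings = [
    {"device": "a", "ts": 1, "temp": 20.0},
    {"device": "b", "ts": 2, "temp": 31.0},
    {"device": "a", "ts": 3, "temp": None},
    {"device": "b", "ts": 4, "temp": 30.0},
    {"device": "b", "ts": 5, "temp": 30.0},
    {"device": "a", "ts": 6, "temp": 24.0},
]
locations = {"a": "plant-1", "b": "plant-2"}

pipeline = correlate(keep_valid(sample(readings, every=2)), locations)
averages = average_by_site(pipeline)
print(averages)
```

Because each stage is a generator, a record flows through sampling, filtering, and correlation before the next record is read. Only the aggregation step keeps state, and that state is a small running total per site.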

Thank you so much for reading. Share your thoughts in the comment section :)

Warm regards,

@Winy

35 % set to Ph-fund