Storage Costs on Blockchains using EOS.IO Software

In the EOS.IO Technical White Paper we discuss some of the features of a blockchain that adopts such software unmodified. In the paper we describe a method of resource allocation where, if you own 1% of the tokens, the software will allocate 1% of the available storage capacity of the blockchain to you. If there are 1 billion tokens and 1 terabyte (TB) of storage, then each kilobyte (1024 bytes) of storage would cost about 1 token. At a $3 billion market cap that would represent about $3 per kilobyte. If the tokens reach the market cap of Ethereum, it could be $30 per kilobyte, or about 3 cents per byte.
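The arithmetic in the paragraph above can be checked with a short sketch. It assumes pro-rata allocation exactly as described, takes 1 TB as 10**12 bytes and 1 kilobyte as 1024 bytes, and treats the roughly $30 billion figure as the market cap implied by "$30 per kilobyte" rather than a quoted Ethereum valuation. The function names are illustrative only.

```python
TOKENS = 1_000_000_000      # total token supply (from the text)
STORAGE_BYTES = 10**12      # 1 TB, assumed decimal terabyte
KB = 1024                   # kilobyte as defined in the text


def tokens_per_kb(tokens: int = TOKENS, storage_bytes: int = STORAGE_BYTES) -> float:
    """Tokens that entitle the holder to one kilobyte under pro-rata allocation."""
    return tokens * KB / storage_bytes


def usd_per_kb(market_cap_usd: float) -> float:
    """Dollar cost of one kilobyte at a given market cap."""
    token_price = market_cap_usd / TOKENS
    return tokens_per_kb() * token_price


if __name__ == "__main__":
    for cap in (3e9, 30e9):
        per_kb = usd_per_kb(cap)
        print(f"${cap / 1e9:.0f}B cap: ${per_kb:.2f} per KB, "
              f"{100 * per_kb / KB:.1f} cents per byte")
```

This prints about $3.07 per kilobyte at a $3 billion cap and about $30.72 per kilobyte (3.0 cents per byte) at $30 billion, in line with the rounded figures in the text.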

We also know that every single account created with EOS.IO software has about 1000 bytes of data just to track the permissions, balance, and other miscellaneous overhead. At $30 per kilobyte, this means each account would cost about $30, which is still much too high.

Increasing Capacity to Reduce Costs

In order to bring costs down when token values are high, we would need to increase capacity. To reduce the cost per account to $0.01, we would need 3000 TB of storage. If we could use SSDs, this much storage would cost about $1M. If an EOS.IO based blockchain reaches a $30 billion valuation, then $1M may be an insignificant amount of money for a blockchain able to allocate $1.5 billion per year to block producers (5% inflation).
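A sketch of the capacity and budget arithmetic above, which also reproduces the $30 account cost from the previous paragraph. It assumes the implied price of a byte is simply market cap divided by total capacity (pro-rata allocation), 1 TB = 10**12 bytes, and an account size of exactly 1000 bytes; the helper names are hypothetical, and SSD hardware prices are not modelled.

```python
TB = 10**12             # assumed decimal terabyte
ACCOUNT_BYTES = 1000    # approximate per-account overhead from the text


def usd_per_byte(market_cap_usd: float, capacity_bytes: float) -> float:
    # Holding 100% of tokens entitles you to 100% of capacity,
    # so one byte is implicitly priced at market cap / total bytes.
    return market_cap_usd / capacity_bytes


def account_cost(market_cap_usd: float, capacity_bytes: float) -> float:
    return ACCOUNT_BYTES * usd_per_byte(market_cap_usd, capacity_bytes)


def capacity_for_account_cost(market_cap_usd: float, target_usd: float) -> float:
    # Solve ACCOUNT_BYTES * market_cap / capacity == target_usd for capacity.
    return ACCOUNT_BYTES * market_cap_usd / target_usd


def producer_budget(market_cap_usd: float, inflation: float = 0.05) -> float:
    # Annual value available to block producers from token inflation.
    return market_cap_usd * inflation


if __name__ == "__main__":
    cap = 30e9
    print(f"account at 1 TB: ${account_cost(cap, 1 * TB):.2f}")
    print(f"capacity for $0.01 accounts: {capacity_for_account_cost(cap, 0.01) / TB:,.0f} TB")
    print(f"producer budget: ${producer_budget(cap) / 1e9:.1f}B per year")
```

Because cost per account is inversely proportional to capacity, a 3000x cut in price needs a 3000x increase in capacity, which is where the 3000 TB figure comes from.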

Unfortunately, SSDs are about 2500x slower than RAM, and forcing the operating system to “swap” to SSD can absolutely kill performance, as many Steem witnesses recently discovered when Steem upgraded to chainbase.

This means we would require 3000 TB of RAM-speed storage. That is not unusual in itself; Google keeps its entire database in RAM. What is unusual is needing that much RAM for a new platform.

New Storage Technologies

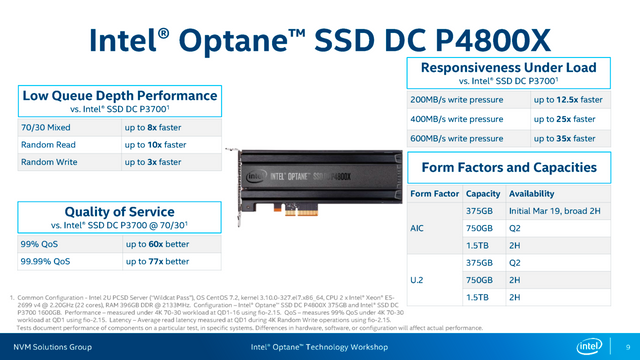

Intel recently started shipping its first Optane SSD based on the new 3D XPoint technology. This is the first SSD that can be configured for use as RAM, and its performance is dramatically better than that of prior SSDs, even if it is slightly slower than traditional RAM. Intel will be releasing these drives with 1.5 TB capacities later this year.

With these new technologies, we think the cost of high-performance memory should fall dramatically, and block producers should be able to scale up the available memory to bring down costs. The higher the market valuation of the tokens, the more memory the block producers will be able to afford.

The True Nature of the Challenge

The tokenization of RAM storage by the EOS.IO software causes it to acquire monetary value in addition to storage value. This added monetary value makes it expensive to use for actual storage relative to storage that has no monetary use. Gold is not used in industry as much as it could be because its monetary value exceeds its industrial use value. Tokens created by those who use the EOS.IO software effectively monetize RAM storage capacity by demanding proof-of-performance from elected block producers.

Like gold reserves in a bank, most of this storage just sits there and is never used. Block producers could advertise a “capacity of 3000 TB” when in reality they only have 3 TB of capacity: a 1000x fractional reserve. Under this model the cost of storage would be reduced the same way the value of “gold-backed notes” falls under fractional reserve banking. Everything will work fine until there is a “run on the bank”, when all of a sudden someone decides to buy 1% of the currency and attempts to store 30 TB of data when there is less than 3 TB actually available.
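The “run on the bank” scenario can be expressed as a simple solvency check. This is a minimal sketch assuming a fixed reserve ratio and decimal terabytes; `entitlement` and `can_honor` are hypothetical names, not part of the EOS.IO software.

```python
TB = 10**12  # assumed decimal terabyte


def entitlement(token_share: float, advertised_bytes: float) -> float:
    """Storage a holder can claim when capacity is allocated pro rata."""
    return token_share * advertised_bytes


def can_honor(token_share: float, advertised_bytes: float, real_free_bytes: float) -> bool:
    """True if the claim fits in the real, physically available storage."""
    return entitlement(token_share, advertised_bytes) <= real_free_bytes


if __name__ == "__main__":
    advertised = 3000 * TB   # what producers advertise (1000x fractional reserve)
    real = 3 * TB            # what they actually have
    share = 0.01             # someone buys 1% of the tokens
    print(f"claim: {entitlement(share, advertised) / TB:.0f} TB")
    print("can honor:", can_honor(share, advertised, real))
```

With a fixed 1000x ratio, any holder of more than 0.1% of the tokens (3 TB / 3000 TB) can claim more storage than the network physically has.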

Preventing a Run on the Memory Bank

A network can operate with “cheap storage” per token so long as the majority never attempt to actually use the storage they are entitled to. As the available storage decreases, the price will have to increase. At any time, someone attempting to consume 100% of the available storage will have to pay 100% of the liquid tokens; however, someone attempting to consume only 1% of the available storage may need to pay only 0.01% of the liquid tokens. The exact equation will require some modeling and approximation, but it should be possible to make the first byte of storage used 1000x cheaper per byte than consuming all available storage.
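One curve that satisfies all three numbers in the paragraph above (100% of storage for 100% of liquid tokens, 1% of storage for roughly 0.01%, and a first byte about 1000x cheaper than the all-storage average) is a cost that rises quadratically with utilization. This is a hypothetical curve chosen for illustration; the text explicitly leaves the exact equation to future modeling, and `BASE_PRICE`, `cost_fraction`, `marginal_price` and `slice_cost` are invented names.

```python
BASE_PRICE = 1 / 1000  # hypothetical: first byte costs 1/1000 of the all-storage average


def cost_fraction(f: float, a: float = BASE_PRICE) -> float:
    """Fraction of liquid tokens needed to consume fraction f of storage, starting empty.

    Hypothetical curve C(f) = f * (a + (1 - a) * f): the average price per unit
    rises linearly from a (nearly empty) to 1 (full), so C(1) == 1.
    """
    if not 0.0 <= f <= 1.0:
        raise ValueError("f must be between 0 and 1")
    return f * (a + (1 - a) * f)


def marginal_price(f: float, a: float = BASE_PRICE) -> float:
    """Price of the next unit of storage at utilization f (derivative of C)."""
    return a + 2 * (1 - a) * f


def slice_cost(f0: float, f1: float) -> float:
    """Cost of moving utilization from f0 to f1, as a fraction of liquid tokens."""
    return cost_fraction(f1) - cost_fraction(f0)


if __name__ == "__main__":
    print(f"1% of storage:   {100 * cost_fraction(0.01):.4f}% of liquid tokens")
    print(f"100% of storage: {100 * cost_fraction(1.0):.1f}% of liquid tokens")
    print(f"first 10% vs last 10%: {slice_cost(0.0, 0.1):.4f} vs {slice_cost(0.9, 1.0):.4f}")
```

Under this curve the 1% case comes out at about 0.011% of liquid tokens, and the last 10% of storage costs roughly 19 times as much as the first 10%.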

It could be as simple as starting the reserve ratio at 1000x and then reducing it to 1x as the fraction of real memory consumed approaches 100%. So if you have 1 TB of real RAM, you start out at 1000 TB of virtual RAM (1000x). After consuming the first 100 GB (10%), your reserve ratio can fall to 100x, resulting in a new virtual RAM of 100 TB. By the time you consume 500 GB (50%), your reserve ratio would fall to 20x, giving virtual RAM of 20 TB. As virtual RAM falls, the virtual RAM per token also falls, automatically increasing the price of each additional unit of storage.
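The schedule above can be sketched as a lookup over the example points from the text. Only those points (0% → 1000x, 10% → 100x, 50% → 20x, 100% → 1x) come from the post; the geometric interpolation between them and all function names are assumptions made for illustration.

```python
import bisect

TB = 10**12  # assumed decimal units, matching 1 TB = 1000 GB in the text
GB = 10**9

# Example points from the text: (fraction of real RAM consumed, reserve ratio).
SCHEDULE = [(0.0, 1000.0), (0.1, 100.0), (0.5, 20.0), (1.0, 1.0)]


def reserve_ratio(used_fraction: float) -> float:
    """Reserve ratio at a given utilization.

    Between the example points the ratio is interpolated geometrically,
    which is an assumption; the text only gives the points themselves.
    """
    if not 0.0 <= used_fraction <= 1.0:
        raise ValueError("used_fraction must be between 0 and 1")
    xs = [x for x, _ in SCHEDULE]
    i = bisect.bisect_right(xs, used_fraction) - 1
    if i >= len(SCHEDULE) - 1:  # fully consumed
        return SCHEDULE[-1][1]
    (x0, r0), (x1, r1) = SCHEDULE[i], SCHEDULE[i + 1]
    t = (used_fraction - x0) / (x1 - x0)
    return r0 * (r1 / r0) ** t


def virtual_ram(real_bytes: float, used_bytes: float) -> float:
    """Advertised (virtual) capacity = real capacity x current reserve ratio."""
    return real_bytes * reserve_ratio(used_bytes / real_bytes)


def virtual_bytes_per_token(real_bytes: float, used_bytes: float, tokens: float) -> float:
    # Falls as memory is consumed, which raises the token price of each new byte.
    return virtual_ram(real_bytes, used_bytes) / tokens


if __name__ == "__main__":
    for used in (0, 100 * GB, 500 * GB, 1 * TB):
        print(f"{used / GB:>6.0f} GB used -> {virtual_ram(TB, used) / TB:,.0f} TB virtual")
```

Interpolating geometrically keeps the ratio falling smoothly between the published points; a linear or formula-based schedule would work equally well as long as it reaches 1x at full utilization.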

Implications of Variable Pricing

The market will naturally consume resources until supply and demand are balanced at a constantly changing market price. If the initial pricing of storage is too cheap, it will rapidly be consumed until the price rises to a level where only valuable data is stored. At this point block producers can increase either the capacity or the maximum reserve ratio to bring prices down. Token holders will vote for the producers who offer the most capacity for the least money, and if the token is growing in value, the producers will be able to afford the additional capacity.

Another factor of variable pricing is the financial incentive to release memory when it is not used. As the token value increases, the opportunity cost of using those tokens to maintain storage also increases. Smart developers will design their applications to minimize memory usage and maximize opportunities to reclaim memory.

Memory Squatting Attack

A side effect of this algorithm is that someone looking to consume a lot of memory has a financial incentive to be the first to consume it. Once they have consumed it, they can repurpose it as they see fit within their contract. If they ever need their money back, they can release the memory. Reserving memory “first” will quickly push the price up to a point that balances speculative demand with actual demand.

Fortunately, this attack is greatly mitigated by the fact that reserved memory is “not transferable” and that initial memory costs will still be about 100x more expensive than buying actual physical RAM. Every byte of memory used by the network is replicated and stored on over 100 full nodes and often many partial nodes. The network has to pay these operators enough to justify purchasing and maintaining this real memory. Therefore, decentralized RAM replicated across 100 nodes will always be 100x more expensive than centralized RAM on a per-byte basis. Block producers should take care to set the reserve ratio such that the cost per byte doesn’t fall below the actual cost of the real memory used by the network, factoring in the desired level of redundancy.
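The constraint in the last sentence can be turned into an upper bound on the reserve ratio. This sketch assumes the implied price per byte is market cap divided by virtual capacity, and that every full node stores a complete copy; the hardware cost and market-cap figures in the example are placeholders, not quoted prices, and the function names are hypothetical.

```python
TB = 10**12  # assumed decimal terabyte


def floor_price_per_byte(hw_cost_per_byte: float, replicas: int) -> float:
    # Each logical byte is stored on `replicas` full nodes, so the network's
    # real cost per byte is the hardware cost multiplied by the replica count.
    return hw_cost_per_byte * replicas


def max_reserve_ratio(market_cap_usd: float, real_bytes: float,
                      hw_cost_per_byte: float, replicas: int) -> float:
    """Largest reserve ratio that keeps the implied price at or above the floor.

    Implied price per byte = market_cap / (real_bytes * ratio), so requiring
    price >= floor gives ratio <= market_cap / (real_bytes * floor).
    """
    floor = floor_price_per_byte(hw_cost_per_byte, replicas)
    return market_cap_usd / (real_bytes * floor)


if __name__ == "__main__":
    # Placeholder inputs for illustration only.
    cap = 3e9           # market cap in USD
    real = 3 * TB       # real RAM on each full node
    hw = 5e-9           # hypothetical $ per byte of RAM (i.e. $5 per GB)
    print(f"floor: ${floor_price_per_byte(hw, 100):.1e} per byte")
    print(f"max reserve ratio: {max_reserve_ratio(cap, real, hw, 100):,.0f}x")
```

Doubling the replica count halves the permissible reserve ratio, which is the trade-off between redundancy and cheap storage described above.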

Summary

The market will naturally and unavoidably imbue tokens created with the EOS.IO software with monetary properties. It will be necessary to implement dynamic pricing on the cost of consuming an additional unit of memory in order to keep prices practical for actual application development. This, in combination with new memory technologies, will ensure that the cost of storing data on EOS.IO based blockchains is reasonable for decentralized application developers.

Often, these EOS posts are above my tech level. This one, however, I loved. Fascinating notes on SSD vs. RAM server storage, and the analogy comparing unused storage to fractionally reserved notes was very helpful. It seems like there is a really good grasp of the potential market counter-factors you may run into. Looking forward to participating when I can.

Aren't we supposed to move as far as possible from the actual fractional reserve system backed by thin air? Why are you replicating this system for storage? If storage is too expensive, no DAPPS can be developed. The price per gigabyte should be under 1 USD at all times to allow DAPPS to grow as much as they need to. With the fractional reserve system that you want to put in place, "a run on storage banks" will happen and everything will be shut down.

EOS got hundreds of millions of dollars in the ICO; why don't they reinvest half of it to send storage units to the producers? Let's say 1 EOS token is fractionalized x10000: producers will have to add new storage units every single day in their datacenters. Also, if tokens are fractionalized, nobody will be able to rent out EOS tokens, as 1 EOS token will give access to a lot of storage and there will be no need to rent tokens as was previously thought.

EOS is supposed to be the blockchain to run applications like Steemit. Steemit has a lot of content and uses a lot of data storage.

The most important thing for EOS is to let people store data on the ledger as cheaply as possible, if possible more cheaply than centralized systems.

5% of, let's say, a 10 billion USD market cap is a lot of money, surely enough to buy thousands of storage units. Producers have to be big data center companies, not single dudes in their rooms with a 6-GPU rig.

I think Steemit is better served with its own blockchain. Small apps that do not warrant their own blockchain and do not have such a huge community are perfect candidates for EOS. It is a different animal...

You don't understand the problem. When it comes to smart contracts we need RAM, not DISK, and they don't make individual machines capable of 3000 TB of RAM, and cluster support isn't ready.

Furthermore, you didn't grasp that the point of the article was to come up with a pricing algorithm that ensures there can never be a "run on the memory bank".

How is cluster support not ready? What if you find a nice vendor like Cray?

https://www.top500.org/lists/2017/06/

I agree on decentralisation for cheaper prices on RAM/IO/CPU. But there's a catch within these types of industries: the community's adoption of new hardware will always be biased by extreme configurations falling into the hands of those who can afford them. The speed of an "Optane" will not matter much in a distributed environment if you can buy 20-30 disks for the same amount. Plus, with conventional (rotary) disk technology you will get so much more capacity that in the long term it kills any SSD. Maybe in a few decades things will start changing... No doubt SSDs revolutionised the IO/s world, but in terms of decentralised storage... they are not a must-have in my opinion.

In HPC (High Performance Computing), though, these things are AMAZING assets and a must-have for sure... simply due to the importance of latency in those areas! Low latency is a key player if you want to bring performance up on specific synchronous workloads.

I am eager to try EOS.IO with some real world research codes... going to be an amazing step in humankind!

Good, thanks for sharing.

That's exciting to read about how dramatically faster the Intel Optane is. Man, we're propelling into the future; no need to look back if we can't even keep pace with what's new.

I think EOS, along with Tezos, is a record-breaking ICO hype market boom and won't climb much more. A buy-early-and-sell opportunity, not a long-term hold.

EOS is a game changer long term. I am not sure what Tezos is offering besides a governance mechanism. I believe ETH will reach over $1000 next year and EOS will trade much higher than where it currently sits upon its release.

I understand that the EOS token we have bought recently is not related to any EOS.IO based blockchain, not now, nor is it the intention of the developers to establish a connection in the future. The EOS.IO blockchain ecosphere could be wonderful, but the EOS coin of today is useless and has no value at all.

Maybe, but I'm totally in to hold my few thousand, just because I believe in the technology and the block.one team.

There will definitely be selling opportunities... but that doesn't mean that EOS isn't able to actually do what Ethereum claimed to, which means it will be huge. Watch, my friend ;)

I agree ... +1

It seems EOS and Tezos have nothing to show yet...

Also check this... my main points of concern are:

There is much discussion going around that EOS is a scam. This is down to two key points:

EOS TOKENS HAVE NO RIGHTS, USES OR ATTRIBUTES. The EOS Tokens do not have any rights, uses, purpose, attributes, functionalities or features, express or implied, including, without limitation, any uses, purpose, attributes, functionalities or features on the EOS Platform. Company does not guarantee and is not representing in any way to Buyer that the EOS Tokens have any rights, uses, purpose, attributes, functionalities or features.

NOT A PURCHASE OF EOS PLATFORM TOKENS. EOS Tokens purchased under this Agreement are not tokens on the EOS Platform. Buyer acknowledges, understands and agrees that Buyer should not expect and there is no guarantee or representation made by Company that Buyer will receive any other product, service, rights, attributes, functionalities, features or assets of any kind whatsoever, including, without limitation, any cryptographic tokens or digital assets now or in the future whether through receipt, exchange, conversion, redemption or otherwise.

PURCHASE OF EOS TOKENS ARE NON-REFUNDABLE AND PURCHASES CANNOT BE CANCELLED. BUYER MAY LOSE ALL AMOUNTS PAID.

EOS TOKENS MAY HAVE NO VALUE.

COMPANY RESERVES THE RIGHT TO REFUSE OR CANCEL EOS TOKEN PURCHASE REQUESTS AT ANY TIME IN ITS SOLE DISCRETION.

As such, there does not appear to be a set-in-stone definition of what function, if any, each token has on the EOS platform.

No U.S. Buyers. The EOS Tokens are not being offered to U.S. persons. U.S. persons are strictly prohibited and restricted from using the EOS Distribution Contract, using the EOS Token Contract and/or purchasing EOS Tokens and Company is not soliciting purchases by U.S. persons in any way. If a U.S. person uses the EOS Distribution Contract, uses the EOS Token Contract and/or purchases EOS Tokens, such person has done so and entered into this Agreement on an unlawful, unauthorized and fraudulent basis and this Agreement is null and void.

Your picture is funny, is that really you?

Can the EOS software just be "added " to the blockchain as an afterthought, or must it be part of the planning of the chain?

I wish EOS.IO a long life, and I hope it will be as good as it can be.

Wish it the best of luck 🍀

beautiful