Emotiv Neuroheadset: First Try and Experience Sharing

I have spent over five months with the Emotiv neuroheadset for my dissertation, and they have been long and painful months ☹. To help more people understand this kind of consumer-grade EEG headset, and to keep non-specialists from being lured into buying one unprepared, I am sharing my experience and some points to watch out for.

BRIEFING

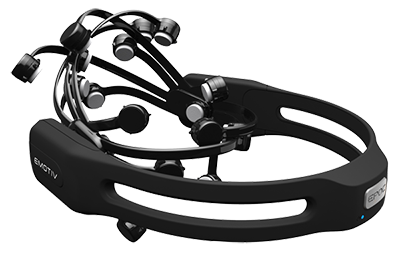

First of all, what is Emotiv? (I promise I am not advertising this product 😉) Emotiv sells two affordable, wireless, noninvasive, consumer-grade electroencephalography (EEG) devices: the Emotiv Insight with 5 channels and the EPOC+ with 14 channels (as of the end of November). More information can be found on the official website: https://www.emotiv.com/

【Emotiv Insight】

【Emotiv EPOC+】

The advantages are easy to see. First, price: these headsets are far cheaper than the ones in professional research labs, such as neuroscience labs, which carry dozens or even hundreds of electrodes and can cost a hundred or a thousand times more. Ordinary consumers, or a poor student like me, can actually afford an Emotiv headset. Second, connection is easy: the headsets use Bluetooth, so any Bluetooth-capable phone or computer can connect to them.

OPERATING GUIDE!!!!!!

Wearing the headset properly with good contact quality takes some technique. If you have one, I suggest paying attention to the following points, which cover both devices.

- Charge first!

- Hair matters: move hair away from the sensors as much as possible.

- For EPOC+, always hydrate the sensors until they are FULLY saturated with saline solution, or try wetting the hair directly where the sensors sit. When they dry out, hydrate them again, ... and again. T^T

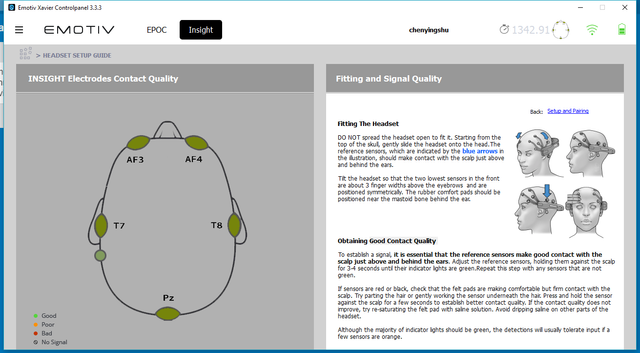

- Get the sensor positions right. The two reference sensors guide the others: once the reference sensors on the mastoid bone behind the ears are detected well, the others are detected much better.

- Sensors that press fully and firmly against the scalp give a good connection.

- For Insight, DO NOT take the device apart while you are still using it. If you do re-assemble it, recharge it before using it again.

- EPOC+ is not good for recording raw EEG data: most of the recorded values are ZERO!

【Remember to move hair out of the way (especially for girls)】

APPLICATIONS

Here are some of the official applications offered on the Emotiv website. Some are free; others are there to take your money.

MyEmotiv – phone app

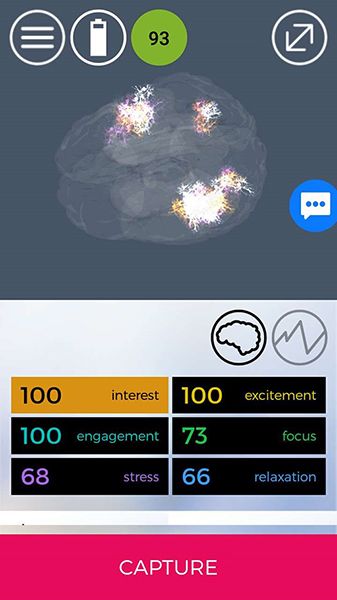

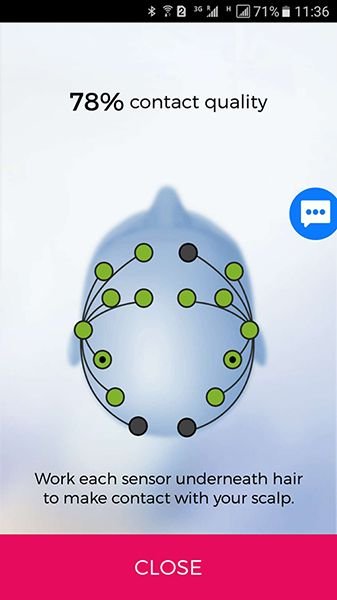

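Now for my trials of some software and applications. My first choice is MyEmotiv, a phone app that assesses your emotional performance in different situations. It provides a friendly interface for connecting the device and a 3D brain visualization of real-time neural signals. It is the most convenient app for a first try: download and install it from the official website, turn on Bluetooth on both the phone and the headset, and the app detects the device automatically and shows the contact quality graphically in real time.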

【MyEmotiv with Insight】

【MyEmotiv - EPOC+ contact quality】

Both Insight and EPOC+ work with this app, and the contact quality is visible immediately. In my experience, Insight reaches better contact quality (100%, with every sensor connected) in a shorter setup time (within 30 minutes, sometimes just a few minutes) than EPOC+. With EPOC+ I never got more than 12 sensors connected, which gave only about 70% contact quality, and it stayed below 80% even after more than 30 minutes of setup. In addition, the EPOC+ contact gradually weakens the longer the headset is worn, probably because the sensors need to stay hydrated the whole time.
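To make the percentages above a little more concrete, here is a minimal Python sketch of how an overall contact quality figure could be computed from per-sensor readings. It is only an illustration: the 0 to 4 contact scale, the equal weighting of sensors and the function name `contact_quality_percent` are all my assumptions, and the formula the MyEmotiv app actually uses is not described in this post, so this will not reproduce the app's numbers.

```python
# Hypothetical contact scale: 0 = no signal ... 4 = good contact (an assumption).
MAX_LEVEL = 4

# The 14 EEG channel positions of the EPOC+ (standard 10-20 style names).
EPOC_PLUS_CHANNELS = [
    "AF3", "F7", "F3", "FC5", "T7", "P7", "O1",
    "O2", "P8", "T8", "FC6", "F4", "F8", "AF4",
]


def contact_quality_percent(levels):
    """Average the per-sensor contact levels and scale the result to 0-100."""
    if not levels:
        raise ValueError("no sensors reported")
    for name, level in levels.items():
        if not 0 <= level <= MAX_LEVEL:
            raise ValueError(f"contact level out of range for {name}: {level}")
    return 100.0 * sum(levels.values()) / (MAX_LEVEL * len(levels))


# Example: every sensor has good contact except two that report no signal.
levels = {name: MAX_LEVEL for name in EPOC_PLUS_CHANNELS}
levels["O1"] = 0
levels["O2"] = 0
print(f"{contact_quality_percent(levels):.1f}%")
```

A weighted scheme (for example, counting the reference sensors more heavily, since they guide the others) would be an easy variation on the same idea.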

Emotiv EmoBot (V.3.3.3.0) – Windows desktop app

It is a virtual robot face that uses EPOC+ data to mimic the user's facial expressions, including blinking, frowning and smiling. It only works with EPOC+. That said, the robot goes haywire a lot.

【EmoBot - Facial Expression】

If you are wondering about the accuracy, well, I have to say it is #@$!#*&...<( ̄ ^  ̄)@m

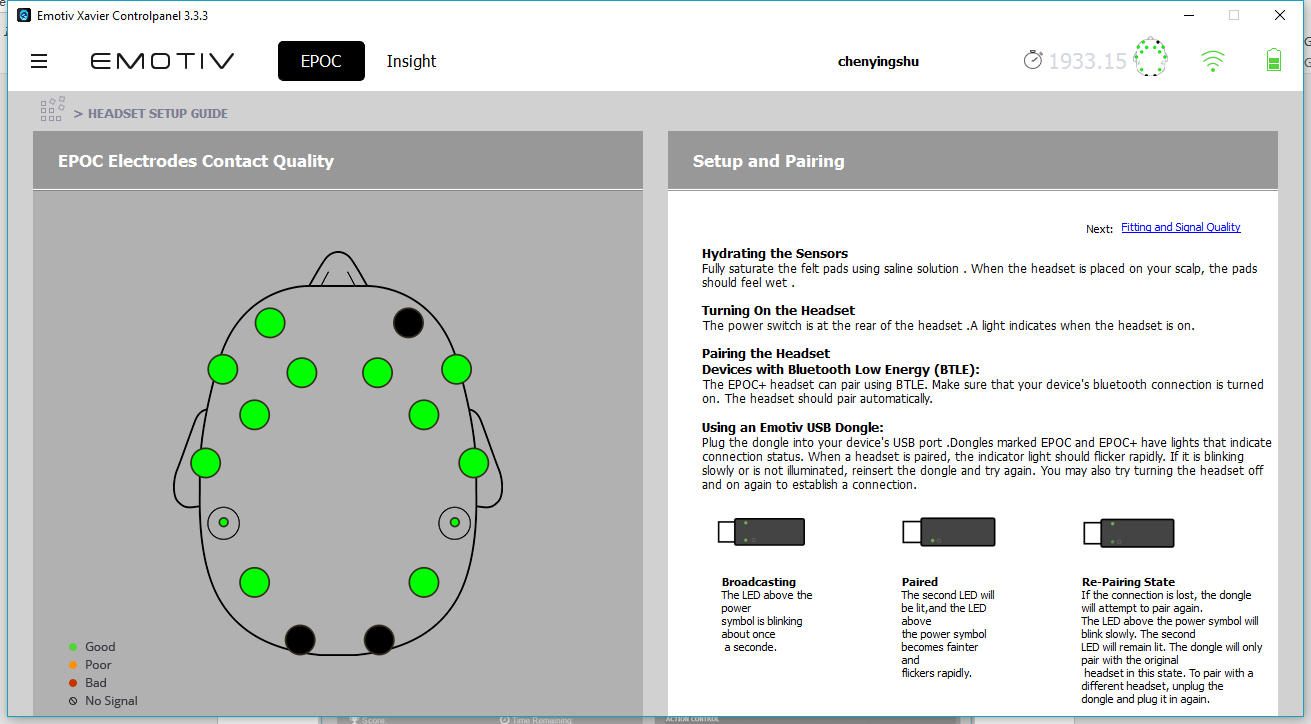

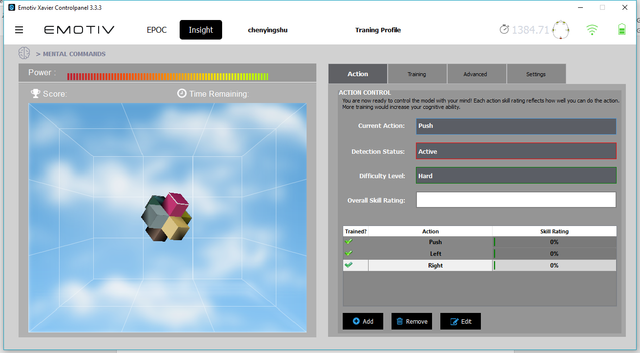

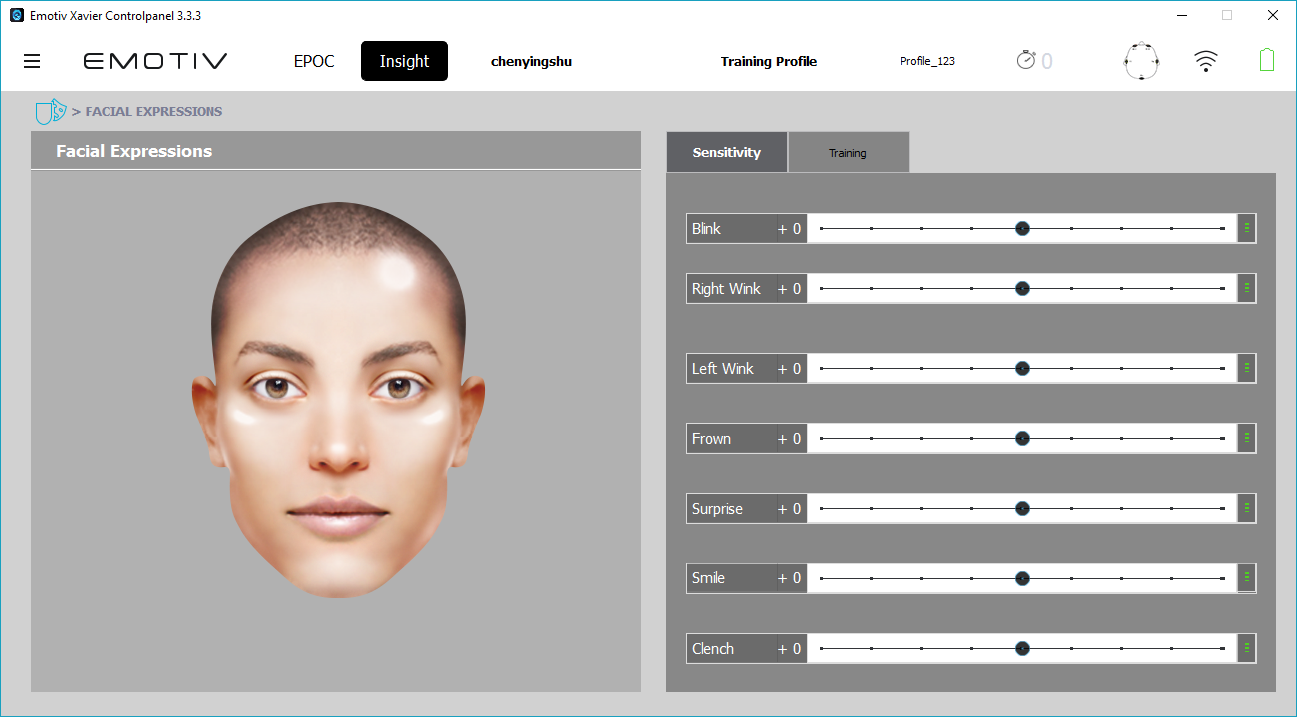

Emotiv Xavier Control Panel (V3.3.3) – Windows desktop app

The control panel walks users through setting up the device, shows the real-time contact quality, and provides training and detection of mental commands and facial expressions. It is the essential official tool: everything from putting on the headset to a first try of mental command detection and facial expression recognition can be done in this one application.

【Insight setup】

【EPOC+ setup】

【Mental command】

【Facial expression】

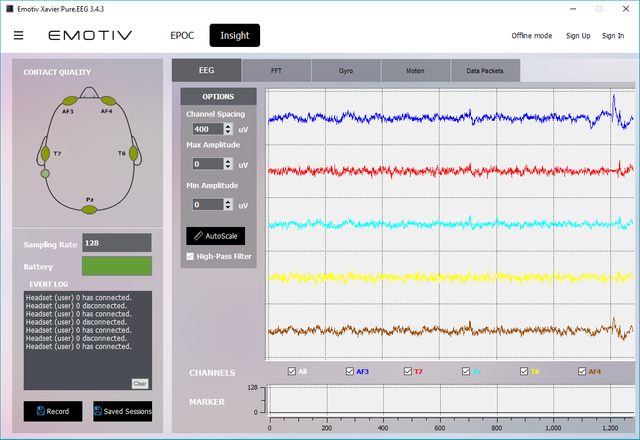

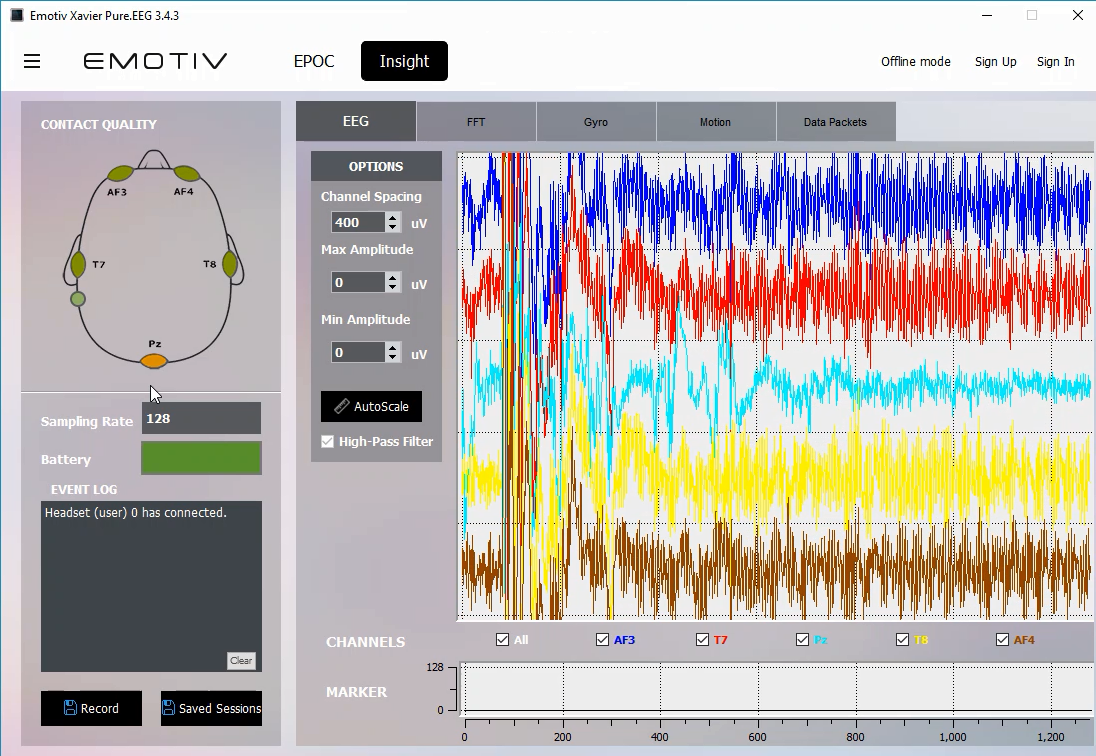

Emotiv Xavier Pure.EEG (V3.4.3, subscribed up to Sept. 2017) – Windows desktop app

Note: After September there is a new premium application, EmotivPro.

I subscribed to raw EEG recording on a monthly basis until September.

【EEG line chart- good contact】

【EEG line chart- bad contact】

This is the official software for the raw EEG subscription. It displays the real-time EEG oscillations of each sensor as a line chart and records the raw data. In my experiments, some setups took only a few minutes to reach good contact quality, while others still had bad contact after more than half an hour. The newer software is now EmotivPro or Cortex, which I assume is more full-featured?

EMOTIV SDK (COMMUNITY EDITION - V3.5.0)

Ref: https://github.com/Emotiv/community-sdk

For developers, Emotiv provides APIs for device information, average band power, facial expressions, mental commands, motion data, performance metrics (0.1 Hz), raw EEG (paid), and performance metrics (2 Hz). If you want access to raw EEG data, you can subscribe to a monthly or annual quota 😩.
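For readers unfamiliar with the term, "average band power" means how much of a channel's signal energy falls into each brainwave frequency band. The sketch below shows the general idea in plain Python with a naive discrete Fourier transform. It is not the SDK's implementation: the band edges, the 128 Hz sampling rate and the function name `band_powers` are assumptions chosen for illustration, and I use Python here only for brevity.

```python
import cmath
import math

# Assumed band edges in Hz; the SDK's own definitions may differ.
BANDS = {
    "theta": (4.0, 8.0),
    "alpha": (8.0, 12.0),
    "low_beta": (12.0, 16.0),
    "high_beta": (16.0, 25.0),
    "gamma": (25.0, 45.0),
}


def band_powers(samples, fs=128.0, bands=BANDS):
    """Sum the squared DFT magnitudes that fall into each frequency band."""
    n = len(samples)
    mean = sum(samples) / n
    centered = [x - mean for x in samples]  # remove the DC offset
    powers = {name: 0.0 for name in bands}
    for k in range(1, n // 2 + 1):
        freq = k * fs / n  # frequency of DFT bin k
        coeff = sum(
            x * cmath.exp(-2j * math.pi * k * i / n)
            for i, x in enumerate(centered)
        )
        power = abs(coeff) ** 2 / n ** 2
        for name, (low, high) in bands.items():
            if low <= freq < high:
                powers[name] += power
    return powers


# Synthetic test signal: two seconds of a pure 10 Hz sine wave.
signal = [math.sin(2 * math.pi * 10 * i / 128) for i in range(256)]
powers = band_powers(signal)
print(max(powers, key=powers.get))  # prints "alpha"
```

A real pipeline would use an FFT library and a window function rather than this slow O(n²) loop; the point here is only to show what the numbers mean.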

The SDK supports various programming languages (C++, C#, Java, Python, etc.) and platforms (Windows, Mac, Ubuntu, Android and iOS). Most of its features are free, but some, such as raw EEG data, are paid. In general, C++ covers the most API functions, which is why I chose it as my development language. Support for some web markup languages also seems to have been added recently.

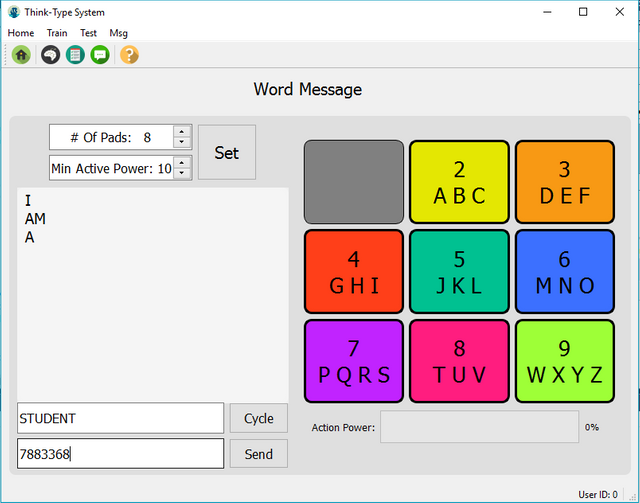

BRAIN COMPUTER INTERFACE SYSTEM

I proposed and developed a prototype BCI typing system in which users can type letters or words after training specific typing pad(s). I built it to test what affects or helps EEG signal training and detection. In the end, human factors played the decisive role in brainwave control.
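As a rough illustration of the typing idea (not the prototype's actual code, which was written in C++ against the Emotiv SDK), the sketch below maps detected mental commands to typing pads. The command names, the `(command, power)` detection format, the 0.6 threshold and the function name are all hypothetical.

```python
def type_from_detections(detections, pads, threshold=0.6):
    """Turn a stream of (command, power) detections into typed text.

    pads maps a trained command name to the letter or word on its pad.
    Detections that are neutral, too weak, or untrained are ignored.
    """
    typed = []
    for command, power in detections:
        if command == "neutral" or power < threshold:
            continue  # nothing confident enough was detected
        text = pads.get(command)
        if text is not None:
            typed.append(text)
    return "".join(typed)


# Two hypothetical pads, each trained with its own mental command.
pads = {"push": "H", "pull": "I"}
detections = [
    ("neutral", 0.9),  # resting state: ignored
    ("push", 0.8),     # strong enough: types "H"
    ("pull", 0.3),     # too weak: ignored
    ("pull", 0.7),     # strong enough: types "I"
]
print(type_from_detections(detections, pads))  # prints "HI"
```

The threshold is the interesting design choice: set it too low and stray thoughts type wrong letters, set it too high and nothing gets typed at all.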

【Prototype - typing words】

I explored different potential stimuli for neural signal training and detection through mental command experiments. The possible factors influencing EEG training and detection included UI elements, human factors and environmental noise.

I tested mainly with the Emotiv Insight, which averaged over 90% contact quality, and abandoned the EPOC+ because of its low contact quality (78% at best /(T^T)~).

The analysis showed that, with the Emotiv Insight, visual factors have only a minor influence, while most of the help comes from the users themselves. Specifically, colors, sound effects and animation during training help users recall the feeling and increase detection accuracy, whereas the size, shape and position of elements make little difference to training or detection. As expected, motions or emotions such as speaking, regular blinking, regular left or right winking, regular head movement and strong emotion help detection reach over 65% accuracy on average. Other motions, such as frowning, eye movements, arm movements and leg movements, make little difference to detection, and irregular head movement makes the EEG fluctuate.
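For anyone who wants to run a similar comparison, detection accuracy per condition can be tallied as in the sketch below. The trial format `(condition, intended, detected)` and the function name are my own assumptions, and the sample trials are made-up placeholders, not my measurements.

```python
from collections import defaultdict


def accuracy_by_condition(trials):
    """Return the fraction of correct detections for each condition.

    Each trial is a (condition, intended_command, detected_command) tuple.
    """
    counts = defaultdict(lambda: [0, 0])  # condition -> [hits, total]
    for condition, intended, detected in trials:
        counts[condition][1] += 1
        if intended == detected:
            counts[condition][0] += 1
    return {cond: hits / total for cond, (hits, total) in counts.items()}


# Placeholder trials for illustration only.
trials = [
    ("blink", "push", "push"),
    ("blink", "push", "neutral"),
    ("blink", "pull", "pull"),
    ("frown", "push", "neutral"),
    ("frown", "pull", "pull"),
]
for condition, accuracy in accuracy_by_condition(trials).items():
    print(f"{condition}: {accuracy:.0%}")
```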

Here is a demonstration video of the BCI typing system prototype.

BCI Typing System Prototype from Yingshu Chen on Vimeo.

MORE….

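(* ̄▽ ̄*) Emotiv has finally released the Cortex service for developers, but I had already finished my work and have had NO chance to try it (T_T). Emotiv keeps putting out new information, software and features at irregular intervals, so the SDK features and software differ from one couple of months to the next, which I both love and hate. Earlier I was quite baffled by the detection functions in the API, and now they are all publicly documented. o(╯□╰)o Sadly, I have no spare time right now to try the new API features. If you are interested, check the Emotiv website from time to time for new documentation, services and applications.

By the way, questions, discussion and suggestions are welcome.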

Very interesting

Thank you!

Welcome to Steemit! Enjoy the ride as much as you can!

Very interesting research project!

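It feels like getting distracted would delete all the text. Would it? @@

When you are distracted, you basically cannot type anything, and you might also type the wrong letter /::>

Future tech!? Could it be used for long-distance communication?

Maybe this technology really will become widespread in the future :-D

Long-distance communication is not possible for now. This headset relies on a Bluetooth connection: the closer the devices are, the better the signal, ideally within 1 meter, and the signal also gets worse as the headset's battery drains. The selling points of Emotiv's headsets are that they are wireless, lightweight, portable and very affordable. Headsets with hundreds of electrodes in neuroscience labs are basically all wired, so they cannot do long distance either. But if a headset that connects over a network comes along someday, I think long-distance communication should be no problem 😊.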

o my GOLD !! beatifull main you are ; )

THANK YOU!😊

If you give some time to attention, it achieves success , Whose real evidence is that you yourself , Very learning post

I just followed you and upvoted :)

thank you
