The Morality of Artificial Intelligence

For a while now, I've been really interested in strong AI and superintelligence, along with consciousness itself. My favorite movies explore these concepts in detail and include:

- The Matrix series

- 2001: A Space Odyssey

- Inception

- Ex Machina

- The Thirteenth Floor

- Tron

- eXistenZ

- The Lawnmower Man

- I, Robot

- A.I.

- Transcendence

- Bicentennial Man

- Dark City

I also love fictional books which explore these topics like:

- Avogadro Corp: The Singularity Is Closer Than It Appears

- Daemon

- Freedom™

- Influx

- Kill Decision

- Ready Player One

- Snow Crash

- The Player of Games

- The Diamond Age

(If you look closely you'll see I've listed every book from Daniel Suarez. I love that author's work. If you have additional books or movies to add to this list, please leave a comment below).

I'm also finishing up Superintelligence: Paths, Dangers, Strategies by Nick Bostrom (which has been amazing).

As a computer programmer, I'm deeply involved in the tech culture and get to see new things happening a little earlier than most. I built my first websites in 1996 and even then, I was convinced the Internet was going to change the world. I've felt the same way about bitcoin, Voluntaryism, and Artificial Intelligence. To some degree, I feel the same way about Steem, but we're still in the early days so I'm not quite ready to make that call.

Exploring the origins of our morality has been important to me in many ways because of my fascination with the future and how our understanding of morality will shape it. We're all familiar with the dystopian fictions where the robots take over the world, but how many of us explore the real-world influences which could bring about a hell or a heaven? What things can we do now (if anything) to bring about an optimal future if computers can do everything human brains can but orders of magnitude faster?

Smarter people than I have been talking about this stuff for decades. I'm just happy to be along for the ride. I started a Pindex (think Pinterest for people who like educational material) with lectures and content and want to share it with you. It's called...

The Morality of Artificial Intelligence

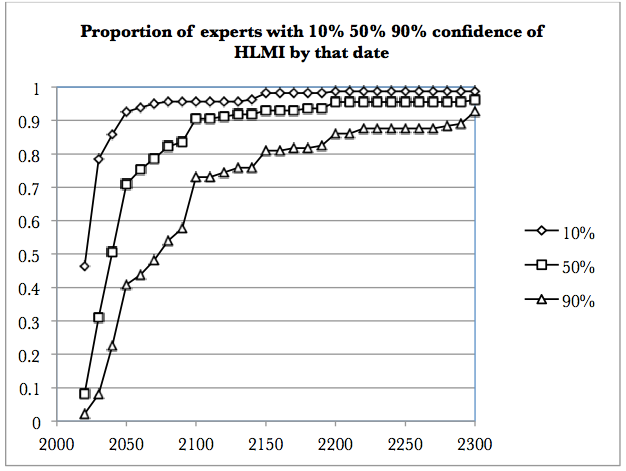

The board will stay updated with interesting content like this nugget from Nick Bostrom's paper, "Future Progress in Artificial Intelligence: A Survey of Expert Opinion":

"...the results reveal a view among experts that AI systems will probably (over 50%) reach overall human ability by 2040-50, and very likely (with 90% probability) by 2075. From reaching human ability, it will move on to superintelligence in 2 years (10%) to 30 years (75%) thereafter. The experts say the probability is 31% that this development turns out to be 'bad' or 'extremely bad' for humanity."

I hope you'll join me on the Morality of Artificial Intelligence Pindex board and suggest new content as well. We as a species should work to figure this out. Most experts think a super intelligent future is inevitable and will happen within our lifetime. The question we should be asking ourselves is what morality should super intelligent beings have and why? These aren't easy questions, and it looks like we have less than 50 years to figure them out.

Great article and a hot topic (at least should be)!

Definitely missing from that list is "Diaspora" by Greg Egan. It has concepts and ideas far beyond what I have read from almost any other SF novelist. It starts with the creation of a new AI from the perspective of the growing, expanding mind itself. Literally mind-blowing. Not an easy read in parts, but certainly worth it.

Wish you success with the Board

All the best,

mapilem

PS: Sorry for my English; German is my mother tongue.

Nice! Thanks for the recommendation, I just went ahead and got it on Audible. And your English is great, no worries there.

Good Article, ty!

It is a fascinating topic. You ask the question "what morality should super intelligent beings have?" And there is the other side of the coin, what should be our ethical considerations in interacting with them?

Are artificial intelligences moral agents? Do they have interests? Is there something it is like to be an artificial intelligence (I love that framing)?

It will take longer than we have to work all these things out, I'm afraid. We haven't even gotten human morality down yet.

As they say, may you live in interesting times... ;)

I'm almost done with Nick Bostrom's book, and he talks about "Mind Crime" and the ethical challenges we may face if we choose to simulate intelligence in order to figure out the best way forward without destroying ourselves. It's really fascinating stuff, but also a bit scary. We definitely haven't figured out morality (though I'm a big fan of the NAP), but I do think the potential to use super intelligence to help us learn it is fascinating.

As you can imagine, I'm not a fan of the NAP at all. I don't believe in property rights. I would be in favor of a social convention that provides for some manner of possession, but it would be more akin to "personal effects" than anything like ownership of land, for example.

This is actually very close to where our philosophical differences begin to diverge. I would argue that it is a matter of consistency. Such property rights are not something found in nature, early societies, or even many cultures that currently exist. They are a relatively new phenomenon.

They initially required an assertion of a right which then could only be defended through force, or at least the threat of force. The very existence of these forms of property would seem to violate the NAP, had it been a governing principle when they were established. Wouldn't an adherent of the NAP have to find that it is unjustified to profit from violations of the NAP, in order to remain consistent and intellectually honest?

I've heard the "personal effects" argument in anarchist Facebook debate groups (I'm in several), and it always breaks down for me. I prefer Jeffrey Tucker's perspective on property rights which he talked about recently at PorcFest. Here's one of his articles which I liked:

https://tucker.liberty.me/the-defense-of-private-property-aristotle-and-mises/

As for defending land rights, that's definitely a more sticky topic. Go back far enough and you'll always find violence. That said, go back further than that and you'll also find non-humans, so we have to be careful to avoid a naturalistic fallacy as well. And yes, I get that agriculture may have been bad for humans, leading to The State and to war, but we can't throw the baby out with the bath water, as they say.

Okay, @bacchist, you made me do it. Let's have a full discussion of where morality comes from over here: https://steemit.com/philosophy/@lukestokes/where-does-your-morality-come-from

Thank you, as well! It's been a healthy discussion.

I found a lot to take issue with in Tucker's article, but I'll just comment on the bit that you quoted. It relies on an argument from Aristotle that is really quite absurd on its face.

He is making a defense of the idea that women and children are property of men. The alternative that he is defending against is the prospect of "having women and children common." This supposes that women and children can be nothing more than property in one form or another, that they are objects or tools to be used by men as they see fit. He defends the idea of private ownership of women and children on the basis that a man couldn't regard them tenderly unless they were considered his property.

I think that it's a rather sad way of looking at the world.

And it's far from necessary to base social relationships in terms of ownership and objectification. We have knowledge of a much wider range of adaptive strategies than Aristotle was even capable of considering. Cultures throughout the world employ many different configurations of how kinship and descent are organized.

The framing of social relationships in terms of ownership of property is most commonly found in those societies in which there is a strong state with an active military, and whose economic system is based on slavery or bondage in one form or another. This isn't the result of a thought experiment, this is empirical evidence, historical record.

I completely fail to see how this view of the world can be advocated by anyone who favors freedom.

(Replying to @bacchist)

An important point regarding human ownership:

I know for certain Tucker isn't advocating slavery here. Private ownership is...

and

I agree that:

All that said, I probably should explore more of the ideas behind "personal" property versus "private" property. The arguments I've seen so far in the Facebook groups I've mentioned have not been very compelling, but I always like challenging myself. Thanks again for the dialogue!

Thank you for bringing up a topic which moves me.

Hmm ... I read most of the books you mentioned and am interested in the questions you named.

"We" (as humans) indeed figured out morality.

Study Buddhism and you will find a fascinating world of psychology, sociology, humor and a great amount of intelligence. The Buddhist model doesn't include a creator, which is the most significant difference from other models of religion. Have you ever listened to Alan Watts? I deeply recommend his lectures; they are fascinating and give new and extraordinary insights into the way of thinking.

There are sources which we can take our ethics from. I would go so far as to say that without strong ethics within your everyday actions (for example programming a computer application, examining a patient, teaching kids, going to the grocery store), no matter what you think, do and touch, nothing really is of importance. Or I should say: one's actions and reactions are in general just random and mostly influenced by things other than ethics. For instance convenience, distraction, fear, boredom, excitement, dullness, ... you name it.

Morality isn't that difficult to find. Mostly through interaction with living beings, not so much through theory.

Bummer that I cannot give you my writings on this, because I am a German speaking person.

Good day for you.

I was just listening to a Very Bad Wizards podcast recently about how evolutionary psychology aligns well to support the concepts of Buddhism. Very interesting stuff.

Can you give a link or name? Thank you.

Here you go: https://verybadwizards.fireside.fm/120

Thank you, I'll give you a response on that.

Interesting post :)

I think that this is a false problem, because human consciousness is not the fruit of computation.

"Strong AI" is an interesting matter for a movie or a book, but even if we could create machines that behave very similarly to a real human being under many circumstances, such a machine would not have the experience of reality that a human being has.

So to answer your question, the super intelligent unconscious machines created by humans will have the morality of the human beings who created them.

This is often misunderstood because we tend to think that matter is "real" and we postulate (inside our consciousness) that our consciousness is a property of matter, while it is matter that is something inside our consciousness.

That would take a lot of convincing for me to believe. The laws of physics seem to work fairly well, even if we have to make some axiomatic assumptions about reality using our current level of understanding.

Laws of physics are something your consciousness recognized. You can assume they are "real" because your consciousness recognizes this attribute of reality in them.

You can say that matter exists independently of consciousness, but the only thing you can really scientifically observe is that there is this thought (matter exists independently of consciousness) in your consciousness.

Understanding that is just an experience; it doesn't need an act of faith. Believing that matter is something that exists independently of consciousness, on the other hand, is a matter of faith!

Sounds a bit too much like, "If a tree falls in a forest and no one is there, does it still make a sound?" We have so many methods for understanding physical reality, from physics to math, which help us make sense of our existence in spacetime. If we don't act on those interactions with the physical universe with rationality, then we'll just spend time debating what the true meaning of the word "real" is. To me, that doesn't get us anywhere. If we are going to discover something beyond what we understand now, either in the depths of the quantum world, a multiverse theory, or even a holographic universe, I don't think it'll be by just thinking about consciousness. We're just evolved primates and many, many animals have consciousness along with us. It's not magic, it's just an emergent, evolved property (IMO).

"The question of whether a computer can think is no more interesting than the question of whether a submarine can swim." - Edsger Dijkstra

I'd like to think a machine would develop the same morality we could move towards given time - a synthesis of science and spirituality like James Lovelock's Gaia hypothesis, in which life itself and solidarity with all forms of life are seen as the end goal of morality.

I have a feeling we'll use machines to help us understand our own morality. They may even reveal that we also are just biological machines.

I took the shortcut to "realize" (not to "understand") my morality.

I would like to challenge your view that we humans are just biological machines. I am an organism. I would state that organisms were there long before machines arose. We didn't know of machines before they were invented. But, unfortunately, since then we have widely adopted a mechanistic notion of the world.

The difference is quite significant. A machine is made out of bits and pieces, an organism grows and is changing every single day from birth to death - there is nothing added to the organism. That's fundamental. As I gave birth to a child this is obvious to me.

Why is it important?

In the realization that I as a creature grow organically I must admit that I deeply depend on other organisms here on earth. I am interconnected to them. That means what happens to the bee or the fish or the bear, happens to me as well. Also I depend on other humans.

When I see them mostly as biological machines, I treat them as machines, to put it simply. I then may believe in mechanical treatment, in fixing organic problems just as I fix a broken clutch. As a specialist in surgery I may know everything about the human heart or bladder, but the complexity of the human body and mind I cannot comprehend to the fullest. As I am not capable of full realization, I must have something to rely on.

This, for me, is ethics.

To build up strong ethics, I need a source where I get them from. My existence is standing on the shoulders of others. Ethics were already formulated and given to the world. I "just" have to put them into my daily habits. Because in theory I already know that I shouldn't harm or kill other living beings, and that I should be authentic (truthful), not badmouth others, not steal, not betray.

Have you ever linked ethics to your daily actions, reactions and thoughts?

I try, and I must say: that is the most difficult thing. Being honest for a single day, not betraying these principles, is just as difficult (and exciting!) as giving birth to a baby.

Make the experiment and pick just one of the premisses.

Interesting perspective. Thank you for sharing. I'd also argue treating the human body as a biological machine has led to great breakthroughs in medical science and improved wellbeing for many. Similarly, natural cycles and systems have been analyzed mechanically to bring us benefits (and risks) as we go about shaping our world. Many feel that's unnatural, but I view our actions as just another part of nature.

The distinction you make, to me, will be less obvious in the future as we begin to augment our bodies with nanotechnology and genetic engineering. We will have control and understanding on a mechanical machine level. What we do with that will determine the future of life and whether it spreads in the galaxy or ends in self destruction.

... not sure if I got your point. Do you mean it this way:

As Alan Watts (I guess, again) said: "Buildings of bricks or steel made by humans are just the same as a bird building its nest. Both can be called 'natural'."

So far I can follow.

But then I ask: Do birds have the same impact on humans that humans have on them? ... and not only birds. Since humans gained power over nature, we are the species that has occupied and transformed the most space, because of how we use machines and mine resources. We pollute and exploit other humans & creatures & matter in a very dangerous way. We could do better.

Michael Braungart, a German chemistry professor and "Cradle to Cradle" inventor, states in his lectures: "We aren't too many, we are just too dumb."

Following the principle that humans aren't separate from all other creatures on earth and deeply depend on one another, your argument limps a little bit, because it primarily takes the perspective of us as a species alone. What about the rest?

It is as if the parasite kills its host, although I dislike this comparison a lot, for it shows how badly we look at ourselves.

I really doubt that treating the human body as a machine was responsible for medical breakthroughs; something else could be the cause, like treating humans as complex organisms and social beings, capable of feelings.

I myself have zero experience when it comes to surgery in medical treatment. ... Actually, to the contrary. My personal experience with conventional medicine was in most cases disappointing, even frightening. MRI, ultrasound and other diagnostic machines weren't as conclusive as their reputation suggests. From my point of view, much more depends on a good and qualified doctor using the machinery. But I experienced it myself and have heard it many, many times from others: doctors nowadays lack confidence and rely too much on machines, unlearning their profession. I am saying that because I was lucky to find my way to good doctors who were skilled at examining me without any machine whatsoever and hit the mark.

I am sceptical of your outlook. I think you overestimate control and genetic engineering. But maybe we are in the same boat and just details and language issues set our arguments apart.

Thanx for responding!

P.S. Here is a video of William McDonough, the American colleague of Michael Braungart:

Would that be a revelation?

Unfortunately, for many, it would be. There's a lot of backward, primitive thinking still dominating our cultures and societies. To suggest we are just animals is offensive to many people. To suggest other animals also have evolutionary concepts built in like empathy, tit-for-tat game theory and the like is a revelation to many.

Great article - may I ask what your professional background is?

Thank you. You can find my full story by clicking my name in my signature. I majored in Computer Science, and I currently do that professionally for the company I co-founded, FoxyCart.com.

Very interesting article - upvoted. If you are interested in AI, check out some of my articles too.

Best

Alex

You plagiarized an article, cheetah bot called you on it, and you replied with "good info"? I have no interest in reading plagiarized content, but I will flag it.

Edit: I see now you did include a link to your source further down in the article, so I won't flag it. Steemit has value to me because it's where I go for original content, not copies of information I can find elsewhere.