PRISMA - How Does It Work? Minimization of Perceptual Losses

Hi Steemers!

This is my second post here on Steemit, and I am extremely excited to contribute to this amazing community. Under my introduction post there were some discussions and debates claiming that I used the big words of machine learning and #Blockchain just to attract attention, without having anything to do with the topic itself. Therefore I devote this post to #MachineLearning algorithms. Once again, I would like to point out that I am not an expert in this field; it's just one of my interests. In other words, I'm at the beginning of this fascinating scientific journey, but I would like to share some thoughts that I hope you'll find interesting.

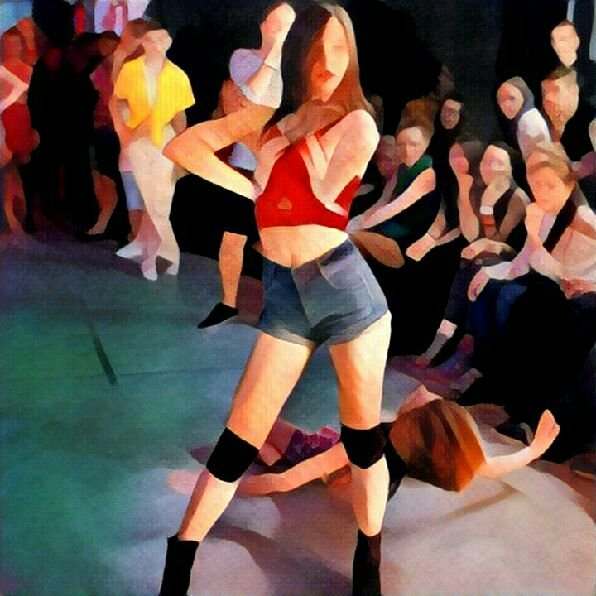

I recently learned about the Prisma app. By then it was already used by most of my friends, even my stepfather, but it was initially released only for iOS, and as an Android owner I had to wait patiently for it.

If you don't know, Prisma is an app for processing photos. According to analysts at "App Annie", within just nine days Prisma became one of the most downloaded apps in ten countries: Russia, Belarus, Estonia, Moldova, Kyrgyzstan, Uzbekistan, Kazakhstan, Latvia, Armenia and Ukraine.

App description in the Google Play states: "Prisma transforms your photos into works of art using the styles of popular artists: van Gogh, Picasso, Levitan, as well as known patterns and ornaments. A unique combination of neural networks and artificial intelligence helps to transform your memorable moments into masterpieces."

At first glance it seems magical, but your photos really do become works of art... It's a pity that such technology didn't exist when "A Scanner Darkly" with Keanu Reeves was filmed. The artists had to draw each frame of the movie by hand, which was extremely exhausting and required a lot of effort.

Once I got interested in Prisma, I decided to dig deeper and came across an article written by researchers from Stanford. The authors, from the Department of Computer Science, describe an algorithm that preserves the content of an image while changing its style! The article is called "Perceptual Losses for Real-Time Style Transfer and Super-Resolution" (Justin Johnson, Alexandre Alahi, Li Fei-Fei).

The task the algorithm solves is to transform the first image so that its style resembles the second one while its content (meaning) is preserved. The image whose style we borrow is called the Style Target. The image we transform is called the Content Target. Note that a separate network is trained for each Style Target image.

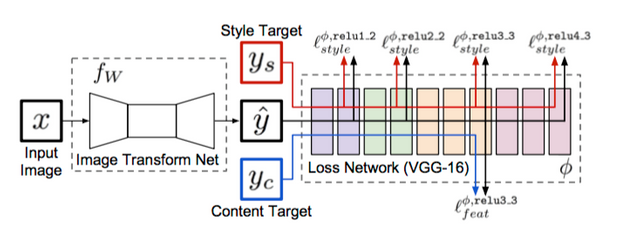

Obviously, a convolutional neural network (the Image Transform Net) is used to transform the images, and we need to train this network. Initially I didn't understand how... How do you explain to a computer which images have similar styles and content?

System overview from the article:

"We train an image transformation network to transform input images into output images. We use a loss network pretrained for image classification to define perceptual loss functions that measure perceptual differences in content and style between images. The loss network remains fixed during the training process." Oh, it's cool! They use a second network to measure how similar the images' styles are!

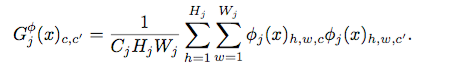

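In the process, neural networks generate many "feature maps". A feature map is a three-dimensional array of numbers; these structures contain information about the image in a form the machine can work with. The main idea is to use them to determine how similar the styles of two images are. But these feature maps are already used to determine the class of the image, so how can we use them differently? We can use their correlations! The authors propose to encode the style with the Gram matrix:

$$G_j(x)_{c,c'} = \frac{1}{C_j H_j W_j} \sum_{h=1}^{H_j} \sum_{w=1}^{W_j} \Phi_j(x)_{h,w,c} \, \Phi_j(x)_{h,w,c'}$$

Here $x$ is the input image, $C_j$, $H_j$, $W_j$ are the number of channels, the height and the width of the j-th feature map, and $\Phi_j(x)$ is the j-th feature map ($\Phi_j(x)_{h,w,c}$ is the activation of channel $c$ at position $(h, w)$). The difference between the styles of two images $\hat{y}$ and $y$ can be measured as the squared Frobenius norm of the difference of their Gram matrices:

$$\ell_{style}^{j}(\hat{y}, y) = \left\| G_j(\hat{y}) - G_j(y) \right\|_F^2$$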
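To make this concrete, here is a minimal sketch of the Gram matrix and the style loss described above, in pure Python so it runs without any libraries. It assumes a feature map is stored as a nested list indexed `[c][h][w]` (the index order doesn't change the result, since we sum over every position); the names `gram_matrix` and `style_loss` are hypothetical names of my own, and a real implementation would work on GPU tensors.

```python
def gram_matrix(fmap):
    """Normalized Gram matrix of one feature map.

    fmap is a nested list indexed [c][h][w]: C channels of H x W activations.
    Entry (c, c2) sums fmap[c] * fmap[c2] over all H * W positions and
    divides by C * H * W, so the result is a C x C matrix.
    """
    C, H, W = len(fmap), len(fmap[0]), len(fmap[0][0])
    # Flatten each channel into one vector of H * W activations.
    flat = [[v for row in channel for v in row] for channel in fmap]
    return [[sum(a * b for a, b in zip(flat[i], flat[j])) / (C * H * W)
             for j in range(C)]
            for i in range(C)]


def style_loss(fmap_a, fmap_b):
    """Squared Frobenius norm of the difference of the two Gram matrices."""
    g_a, g_b = gram_matrix(fmap_a), gram_matrix(fmap_b)
    return sum((x - y) ** 2
               for row_a, row_b in zip(g_a, g_b)
               for x, y in zip(row_a, row_b))


# Two channels of 2 x 2 activations, and the same map flipped left-to-right.
a = [[[1, 0], [0, 1]], [[0, 1], [1, 0]]]
flipped = [[[0, 1], [1, 0]], [[1, 0], [0, 1]]]
print(gram_matrix(a))          # [[0.25, 0.0], [0.0, 0.25]]
print(style_loss(a, flipped))  # 0.0
```

Flipping the map moves every activation but leaves the Gram matrix, and therefore the style loss, unchanged: the Gram matrix records which features occur together, not where they occur. That is exactly why it captures style rather than content.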

Now we can easily compute the style difference at the j-th layer. One layer is not enough, though, so several feature maps are used: the authors sum the style losses over the outputs of four layers of the loss network (relu1_2, relu2_2, relu3_3 and relu4_3 of VGG-16).
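Summing over several layers can be sketched the same way. Again this is pure Python under assumptions of my own: the loss network's feature maps for each chosen layer are already computed as nested lists indexed `[c][h][w]`, every layer gets equal weight, and `multi_layer_style_loss` is a hypothetical name. Layers may differ in size, because layer j always yields a C_j x C_j Gram matrix whatever its height and width are.

```python
def gram_matrix(fmap):
    """Normalized C x C Gram matrix of a feature map indexed [c][h][w]."""
    C, H, W = len(fmap), len(fmap[0]), len(fmap[0][0])
    flat = [[v for row in channel for v in row] for channel in fmap]
    return [[sum(a * b for a, b in zip(flat[i], flat[j])) / (C * H * W)
             for j in range(C)]
            for i in range(C)]


def multi_layer_style_loss(fmaps_out, fmaps_style):
    """Sum of the per-layer style losses over all chosen layers.

    fmaps_out[k] and fmaps_style[k] are the feature maps of the same layer
    for the output image and for the Style Target.
    """
    if len(fmaps_out) != len(fmaps_style):
        raise ValueError("need one feature map per layer for both images")
    total = 0.0
    for f_out, f_style in zip(fmaps_out, fmaps_style):
        g_out, g_style = gram_matrix(f_out), gram_matrix(f_style)
        # Squared Frobenius norm of the Gram difference for this layer.
        total += sum((x - y) ** 2
                     for row_o, row_s in zip(g_out, g_style)
                     for x, y in zip(row_o, row_s))
    return total


# Layer 1: two channels of 2 x 2; layer 2: one channel of 1 x 2.
layer1_out = [[[1, 0], [0, 1]], [[0, 1], [1, 0]]]
layer1_style = [[[1, 1], [1, 1]], [[0, 0], [0, 0]]]
layer2_out, layer2_style = [[[1, 1]]], [[[0, 0]]]
print(multi_layer_style_loss([layer1_out, layer2_out],
                             [layer1_style, layer2_style]))  # 1.125
```

Here layer 1 contributes 0.125 and layer 2 contributes 1.0. Giving each layer its own weight would be a one-line change, but the plain sum matches the description above.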

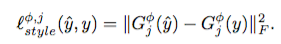

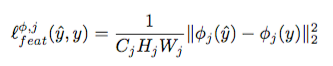

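We are done with the style loss, and it's time to deal with content. For this the authors introduce another metric (MORE METRICS!) that measures the difference between the contents of two images, called the feature reconstruction loss. This time we don't need the Gram matrix, just a simple vector norm of the difference between the feature maps (squared and normalized by the feature map size):

$$\ell_{feat}^{j}(\hat{y}, y) = \frac{1}{C_j H_j W_j} \left\| \Phi_j(\hat{y}) - \Phi_j(y) \right\|_2^2$$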
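The content loss is even simpler. This pure-Python sketch follows the article's definition, the squared Euclidean distance between two feature maps divided by C_j H_j W_j, again assuming the maps are same-shaped nested lists indexed `[c][h][w]`; `content_loss` is a hypothetical name.

```python
def content_loss(fmap_out, fmap_content):
    """Feature reconstruction (content) loss for one layer.

    Squared Euclidean distance between two same-shaped feature maps
    indexed [c][h][w], divided by C * H * W.
    """
    C, H, W = len(fmap_out), len(fmap_out[0]), len(fmap_out[0][0])
    squared = sum((x - y) ** 2
                  for ch_o, ch_c in zip(fmap_out, fmap_content)
                  for row_o, row_c in zip(ch_o, ch_c)
                  for x, y in zip(row_o, row_c))
    return squared / (C * H * W)


a = [[[1, 0], [0, 1]], [[0, 1], [1, 0]]]
flipped = [[[0, 1], [1, 0]], [[1, 0], [0, 1]]]  # a flipped left-to-right
print(content_loss(a, a))        # 0.0
print(content_loss(a, flipped))  # 1.0
```

Flipping the map changes every activation, so the content loss is large, even though these two maps have identical Gram matrices and therefore zero style loss. The two losses pull in different directions, which is what lets the Transform Net keep the content while changing the style.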

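Minimizing the total loss, a weighted sum of the content and style losses (the article also adds a small total variation term that encourages smooth outputs), gives us a properly trained Transform Net. Notice that the Transform Net is a convolutional neural network, and it is the only part whose weights are trained; the loss network stays fixed.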
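To see how the pieces fit together, here is a deliberately tiny toy in pure Python, not the article's real setup. Everything in it is a hypothetical stand-in: a two-parameter "transform net" (`scale * x + shift`) replaces the convolutional Transform Net, a single frozen layer with two fixed filters replaces the pretrained loss network, and gradients come from finite differences instead of backpropagation. What it does show faithfully is the structure: one fixed Style Target, several content images, a total loss that is a weighted sum of content and style terms (the total variation term is left out), and updates applied only to the transform parameters.

```python
def relu(v):
    return v if v > 0 else 0.0


def loss_network(img):
    """Hypothetical frozen loss network: one layer with two fixed filters.

    img is a one-channel image [1][H][W]; the result is a two-channel
    feature map [2][H][W] (positive and negative responses). It has no
    trainable parameters, so training never changes it.
    """
    pixels = img[0]
    return [[[relu(v) for v in row] for row in pixels],
            [[relu(-v) for v in row] for row in pixels]]


def flatten(fmap):
    return [[v for row in channel for v in row] for channel in fmap]


def size(fmap):
    return len(fmap) * len(fmap[0]) * len(fmap[0][0])


def gram(fmap):
    f = flatten(fmap)
    return [[sum(a * b for a, b in zip(fi, fj)) / size(fmap) for fj in f]
            for fi in f]


def content_loss(f_out, f_content):
    return sum((a - b) ** 2
               for co, cc in zip(flatten(f_out), flatten(f_content))
               for a, b in zip(co, cc)) / size(f_out)


def style_loss(f_out, f_style):
    return sum((a - b) ** 2
               for ro, rs in zip(gram(f_out), gram(f_style))
               for a, b in zip(ro, rs))


def transform_net(params, img):
    """Hypothetical two-parameter stand-in for the Transform Net."""
    scale, shift = params
    return [[[scale * v + shift for v in row] for row in ch] for ch in img]


def total_loss(params, content_imgs, style_img, lam_c=1.0, lam_s=1.0):
    """Weighted content + style loss, averaged over the content images."""
    f_style = loss_network(style_img)
    total = 0.0
    for img in content_imgs:
        f_out = loss_network(transform_net(params, img))
        total += (lam_c * content_loss(f_out, loss_network(img))
                  + lam_s * style_loss(f_out, f_style))
    return total / len(content_imgs)


def train(content_imgs, style_img, steps=200, lr=0.05, eps=1e-4):
    """Gradient descent on the transform parameters only.

    Gradients come from central finite differences to keep the sketch
    dependency-free; real training uses backpropagation.
    """
    params = [1.0, 0.0]  # start from the identity transform
    for _ in range(steps):
        grad = []
        for i in range(len(params)):
            up, down = params[:], params[:]
            up[i] += eps
            down[i] -= eps
            grad.append((total_loss(up, content_imgs, style_img)
                         - total_loss(down, content_imgs, style_img))
                        / (2 * eps))
        params = [p - lr * g for p, g in zip(params, grad)]
    return params


contents = [[[[1.0, -1.0], [0.5, -0.5]]], [[[0.2, -0.4], [-1.0, 0.8]]]]
style = [[[2.0, 2.0], [2.0, 2.0]]]
trained = train(contents, style)
initial = total_loss([1.0, 0.0], contents, style)
final = total_loss(trained, contents, style)
print(initial, final)
```

The final loss should come out lower than that of the identity transform. Once training is finished, stylizing a new photo is a single call to `transform_net` with no optimization at all, which is where the "real-time" in the article's title comes from.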

A bit of history: the pioneer of this problem is Gatys, and the Stanford authors often refer to his work. His latest work is devoted to style transfer that preserves the colors of the original image.

What about Prisma? It may use a different neural network architecture, but the concept is the same.

When I first installed Prisma, I was surprised how fast they process all the data. Now the Prisma developers claim they have more than 20 million active users, and they are still growing fast. It's insane!

Hi, phenom. Neural networks require a lot of computational power to run such complicated mathematical algorithms, so the Prisma developers process images on their extremely powerful servers, which lets users enjoy such a fast service.

nice post @krishtopa

Jumping on phone to grab Prisma right now... I see an album cover in the making! :-)

Hello, olverb! Try playing with the filters. Some of them suit portraits and others suit landscapes. You should experiment!)

Oh... I certainly will! Thanks

This is fascinating. Is there a plan for a desktop version? I would be interested in buying it to experiment with using some of my large photographs - particularly portraits (there are a few in my posts on here).

@thecryptofiend they don't have a desktop app, but they have a convenient Telegram bot with the id @AIPrismaBot. I found it useful.

Thanks:)

Hello, thecryptofiend. There are open-source programs; you can look here: https://github.com/yusuketomoto/chainer-fast-neuralstyle

P.S.: nice black&white portraits!)

Thanks will have a look:)

👍fantastic article @krishtopa

Excellent Post Krishtopa!! I must admit, the calculus and the math are over my head, but I'm a big fan of A Scanner Darkly (and Richard Linklater - School of Rock, Dazed and Confused, the Before Trilogy and Boyhood - wow!)

Rotoscoping used to be done by hand, but now it is done by computers. This was the case in A Scanner Darkly. They used special software for it (I remember it being discussed in the Linklater documentary I saw on Netflix a few months ago).

However, can you imagine the same movie in the style of Monet or Picasso? Or changing the filter on-demand at various points in the movie? It's just simply incredible.

I had never heard of Prisma until today and I'm going to have to download it right away!

Have a great day and thanks for your amazing second post!!

-bigedude

Hmm, it seems that the Russian and English versions of Wikipedia give different information. The Russian version claims that each frame was processed manually, but the English page says they used interpolated rotoscoping, which involves automatically drawing the intermediate frames. They probably used third-party programs to avoid the routine work.

Good post, krishtopa. Do I understand correctly that training happens once per Style Target? Or is the final image the result of the training?

Thank you, puhoshville. Yes, once per Style Target.

Yes, this is a delightful app, I would even say innovative. However, I don't like it. I love natural photos.

Moreover, the photos it produces look the same as after a similar standard Photoshop filter.

Maybe that's a shortcoming of Prisma's implementation. The most interesting thing is that the algorithm "chooses" the most relevant filter by itself. You just need to find an example of the style you want.

Nice post and very cool app, but your image links are broken. This has happened to me several times. Go to edit, then HTML mode, and make sure that there is http:// before the image location.

Yes, thank you! They're fixed now.

#academia #introduceyourself

@anyx @cheetah

Hi @krishtopa, my background is mathematics and computer science, very interesting read. If you have time check out my wife's art blogs @opheliafu

Hello, @cryptofunk. Glad to see my colleagues. I think it will be interesting!

Hey there, I'm new here, but reading through the steps of the Steemit platform, I find it fascinating, and with the brilliant ideas I've got, it should surely benefit me... With my help and the help of the community, we can do this.